For those upgrading multi-server clusters from Cronicle v0.8 to v0.9, you must upgrade all of your servers for them to be able to communicate. In v0.9 we have upgraded to socket.io v4.4, which is incompatible with socket.io v1.7.3 that previous Cronicle versions used. We had to upgrade this dependency due to high severity vulnerabilities. Since this is a breaking API change for them, you cannot run "mixed" server clusters with some servers on Cronicle v0.8 and others on v0.9. They all have to be on v0.8 or they all have to be on v0.9.

So, it is recommended that you first disable the main scheduler on your master server (checkbox in top-right corner of the UI), wait for all jobs to complete, then shut down all servers and upgrade them all together. Then start them all up again, and finally re-enable the scheduler.

Cronicle is a multi-server task scheduler and runner, with a web based front-end UI. It handles both scheduled, repeating and on-demand jobs, targeting any number of worker servers, with real-time stats and live log viewer. It's basically a fancy Cron replacement written in Node.js. You can give it simple shell commands, or write Plugins in virtually any language.

- Single or multi-server setup.

- Automated failover to backup servers.

- Auto-discovery of nearby servers.

- Real-time job status with live log viewer.

- Plugins can be written in any language.

- Schedule events in multiple timezones.

- Optionally queue up long-running events.

- Track CPU and memory usage for each job.

- Historical stats with performance graphs.

- Simple JSON messaging system for Plugins.

- Web hooks for external notification systems.

- Simple REST API for scheduling and running events.

- API Keys for authenticating remote apps.

Table of Contents

- Installation

- Setup

- Configuration

- Basics

- base_app_url

- email_from

- smtp_hostname

- smtp_port

- mail_options

- secret_key

- log_dir

- log_filename

- log_columns

- log_archive_path

- copy_job_logs_to

- queue_dir

- pid_file

- debug_level

- maintenance

- list_row_max

- job_data_expire_days

- child_kill_timeout

- dead_job_timeout

- master_ping_freq

- master_ping_timeout

- udp_broadcast_port

- scheduler_startup_grace

- universal_web_hook

- web_hook_custom_data

- web_hook_custom_opts

- web_hook_text_templates

- ssl_cert_bypass

- job_memory_max

- job_memory_sustain

- job_cpu_max

- job_cpu_sustain

- job_log_max_size

- job_env

- server_comm_use_hostnames

- web_direct_connect

- web_socket_use_hostnames

- socket_io_transports

- Storage Configuration

- Web Server Configuration

- User Configuration

- Email Configuration

- Basics

- Web UI

- Home Tab

- Schedule Tab

- Completed Jobs Tab

- Job Details Tab

- My Account Tab

- Administration Tab

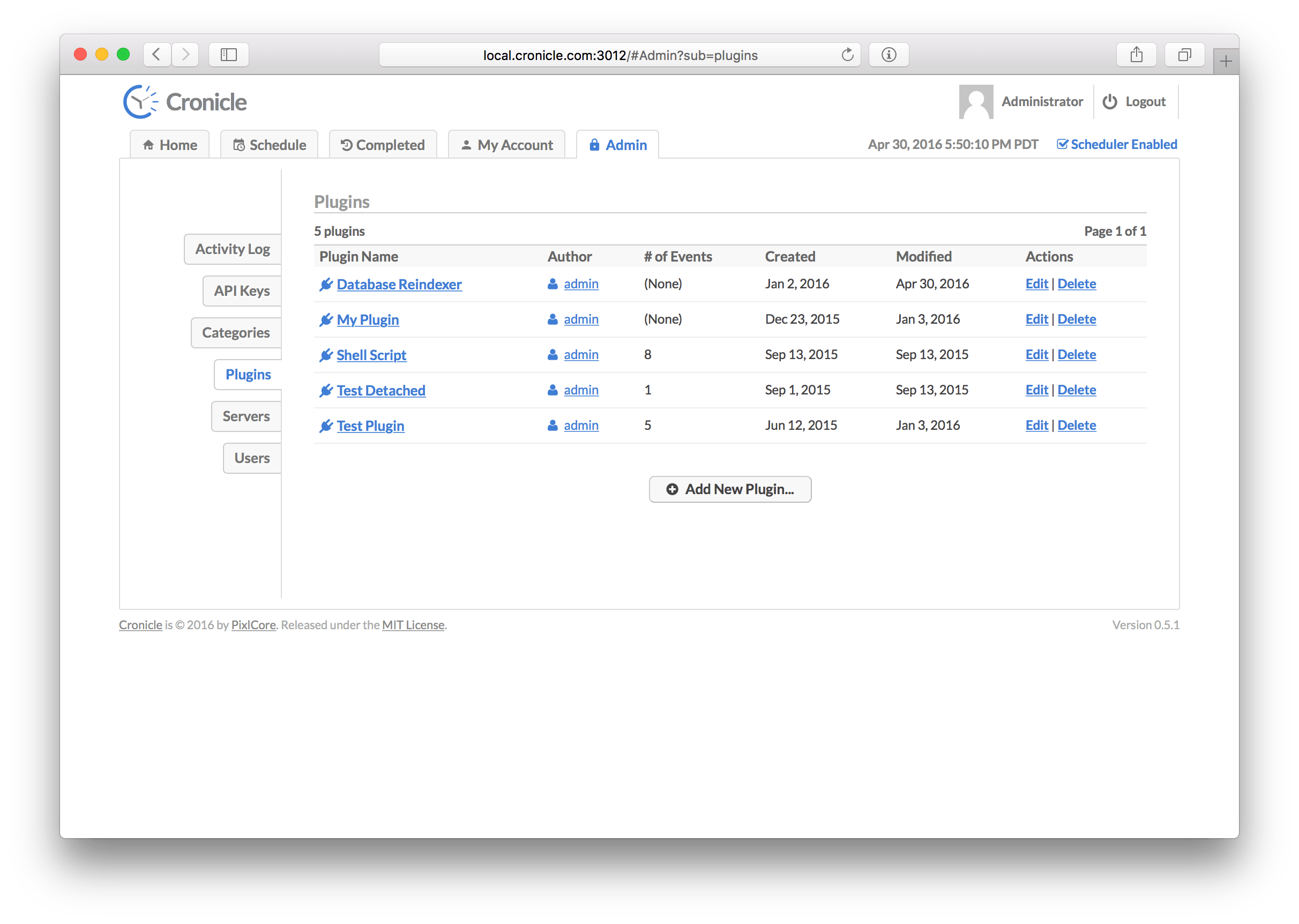

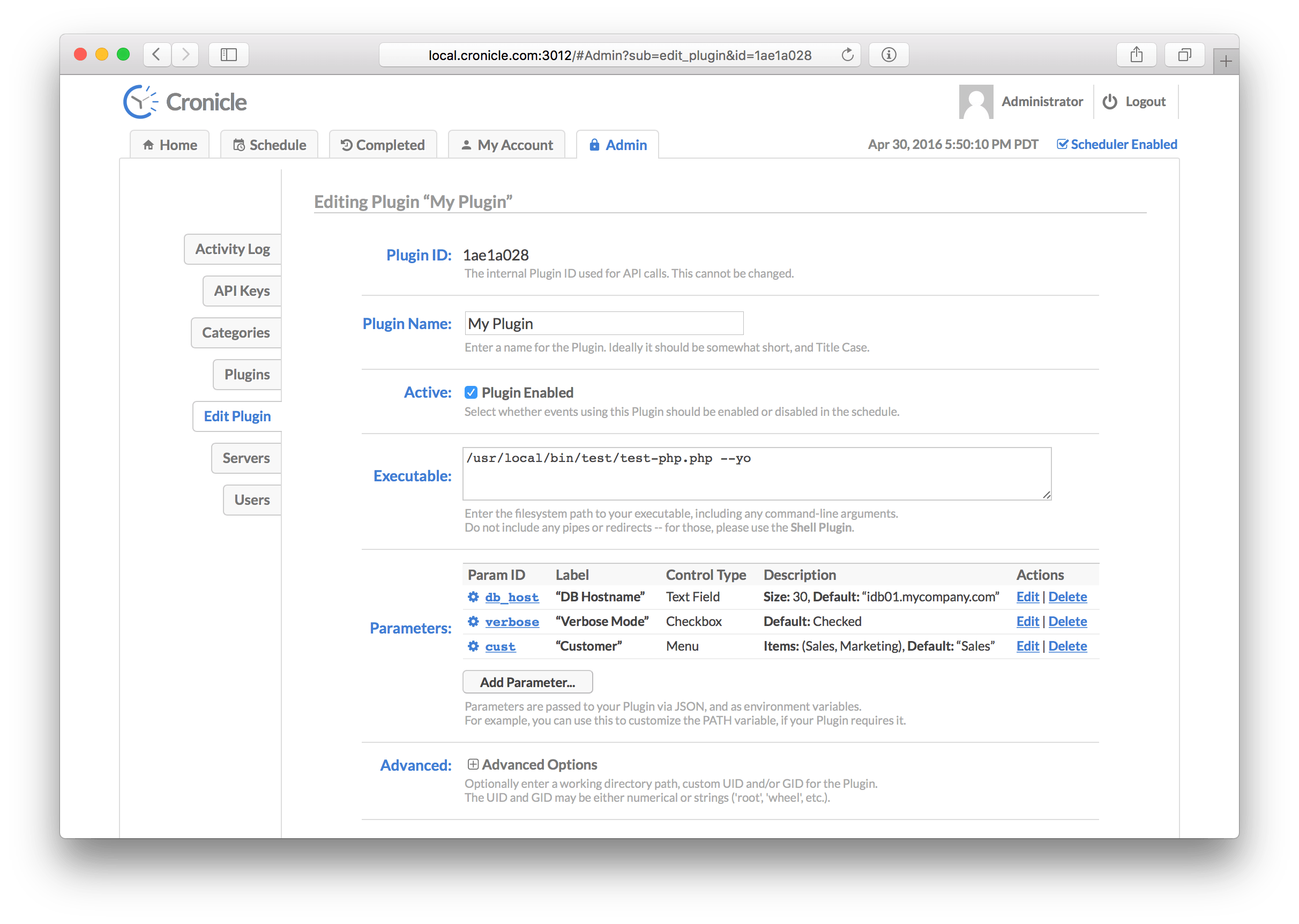

- Plugins

- Command Line

- Inner Workings

- API Reference

- Development

- Companies Using Cronicle

- Colophon

- License

A quick introduction to some common terms used in Cronicle:

| Term | Description |

|---|---|

| Primary Server | The primary server which keeps time and runs the scheduler, assigning jobs to other servers, and/or itself. |

| Backup Server | A worker server which will automatically become primary and take over duties if the current primary dies. |

| Worker Server | A server which sits idle until it is assigned jobs by the primary server. |

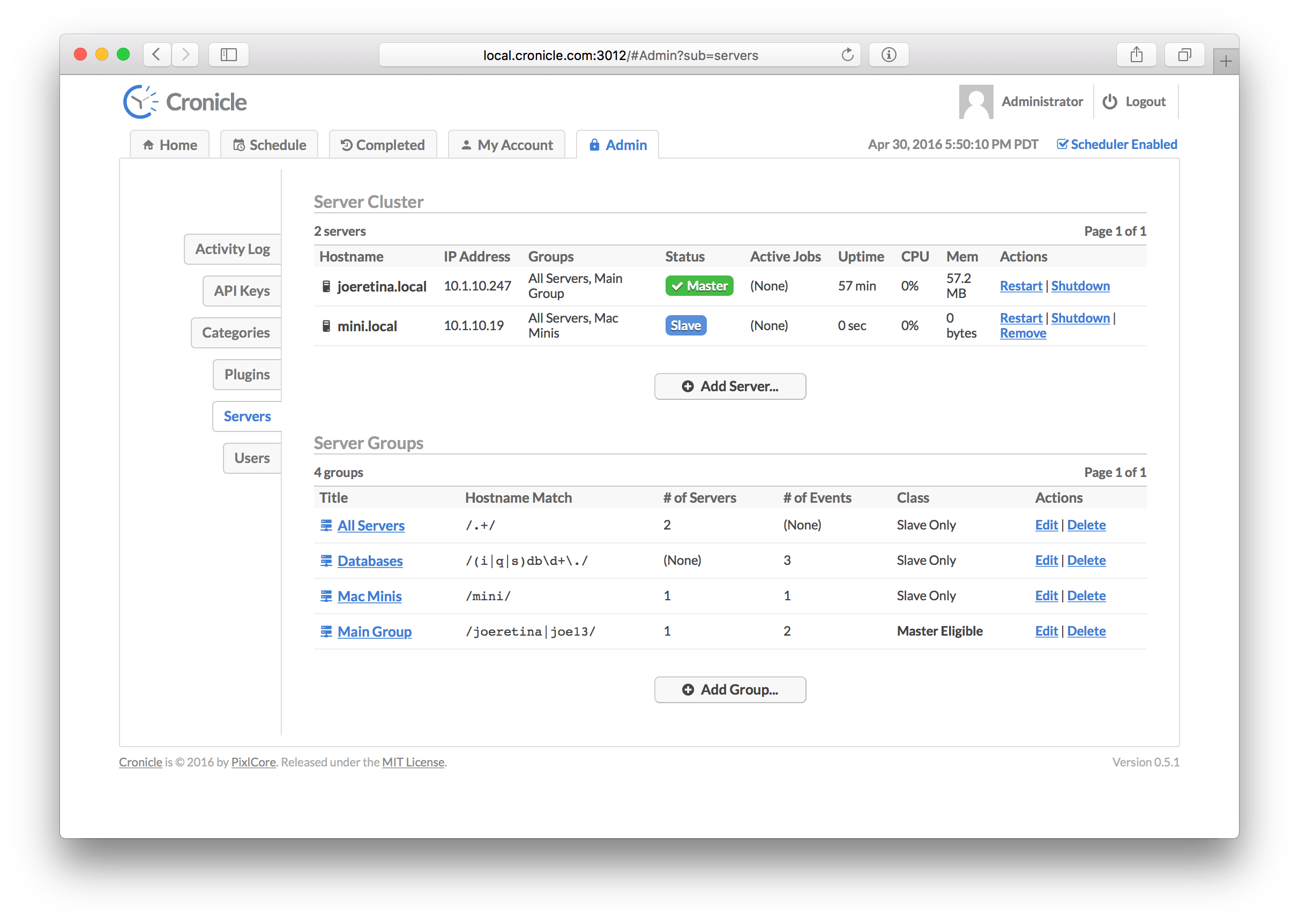

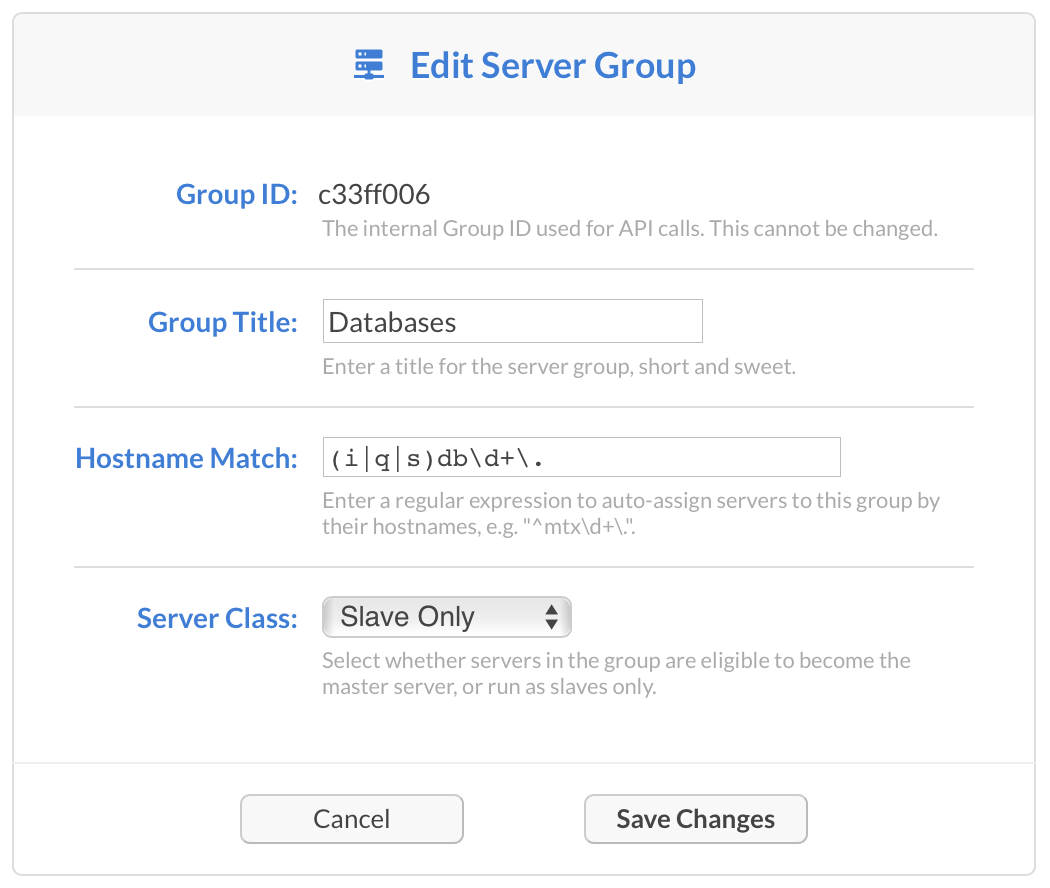

| Server Group | A named group of servers which can be targeted by events, and tagged as "primary eligible", or "worker only". |

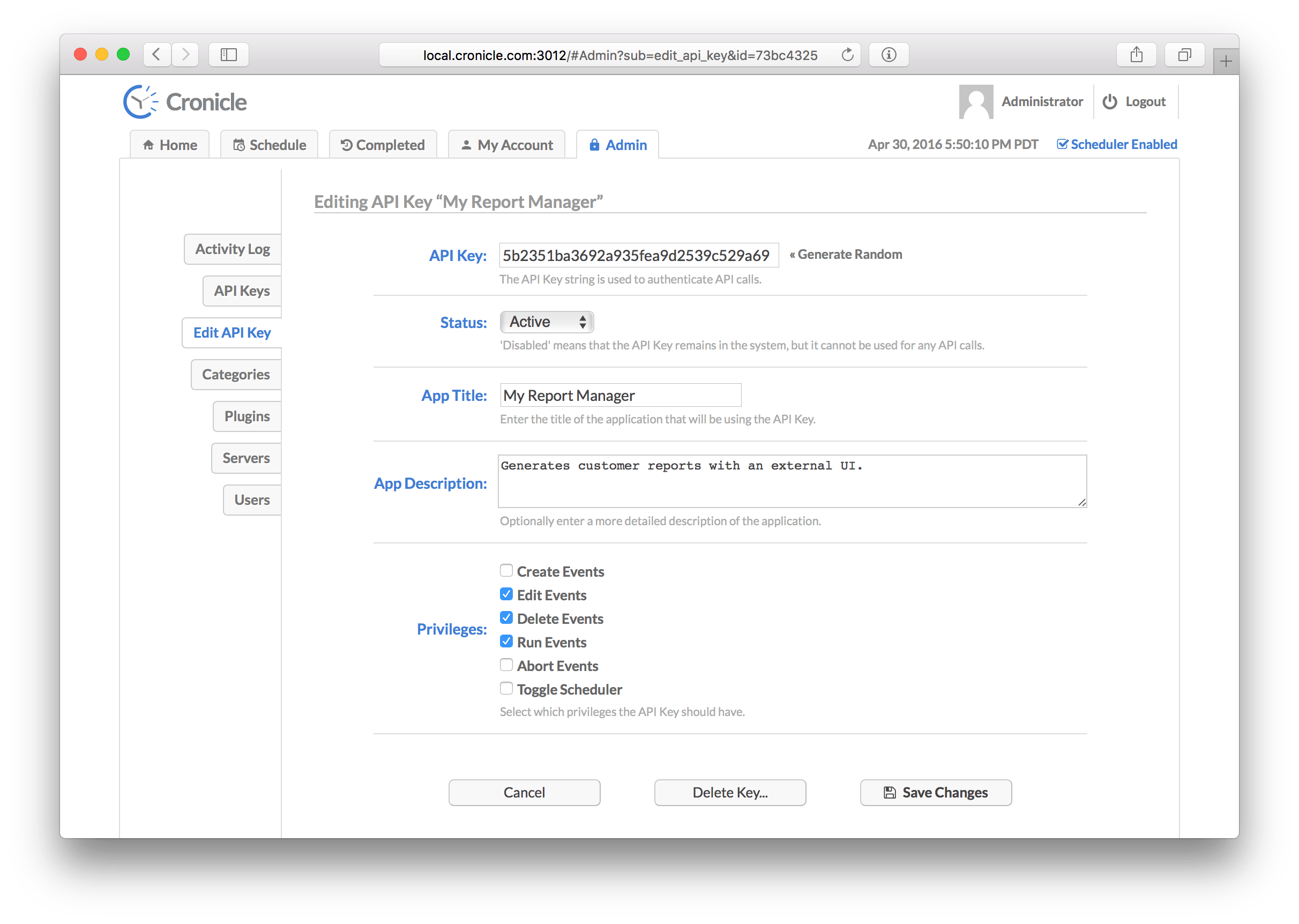

| API Key | A special key that can be used by external apps to send API requests into Cronicle. Remotely trigger jobs, etc. |

| User | A human user account, which has a username and a password. Passwords are salted and hashed with bcrypt. |

| Plugin | Any executable script in any language, which runs a job and reads/writes JSON to communicate with Cronicle. |

| Schedule | The list of events, which are scheduled to run at particular times, on particular servers. |

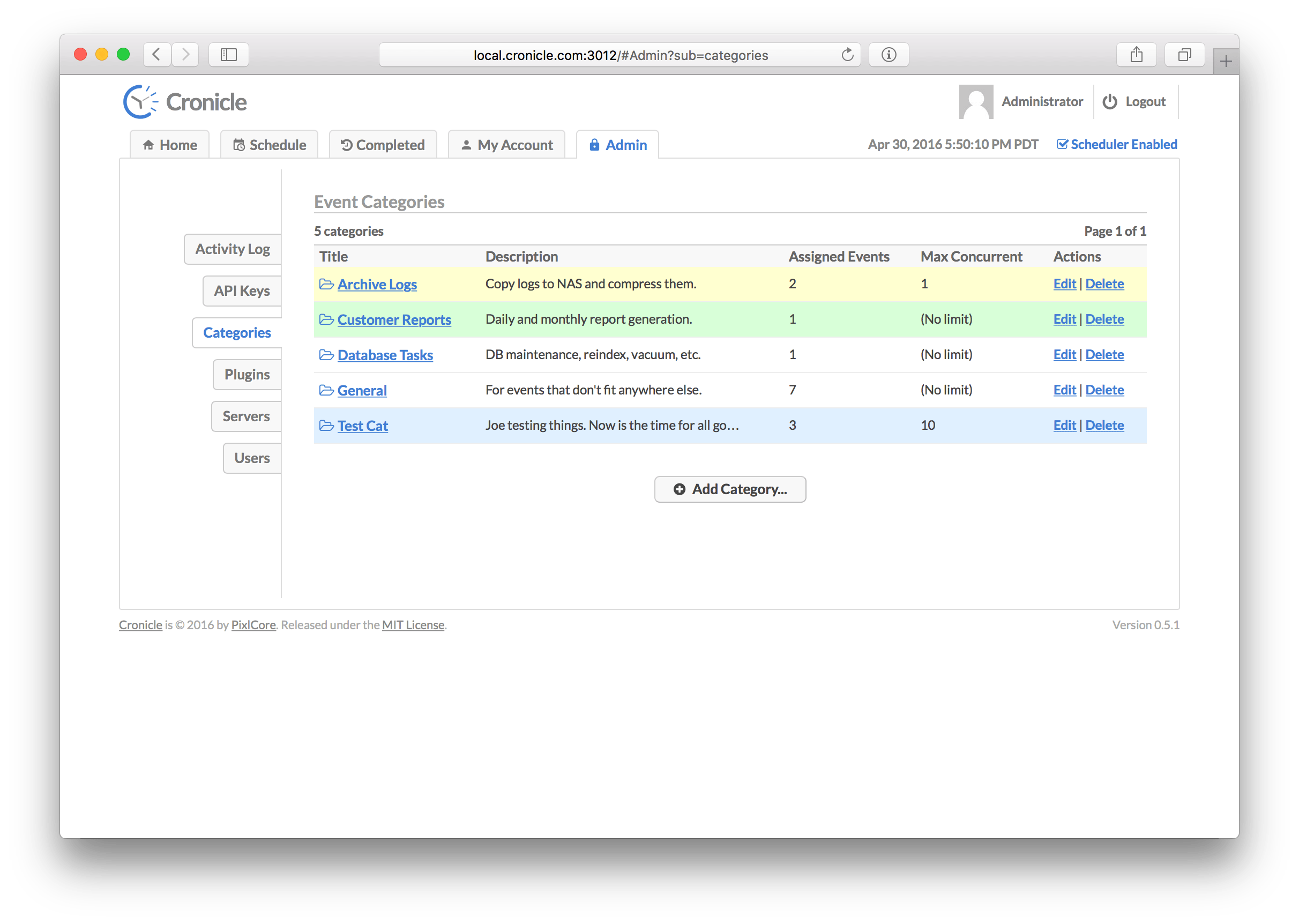

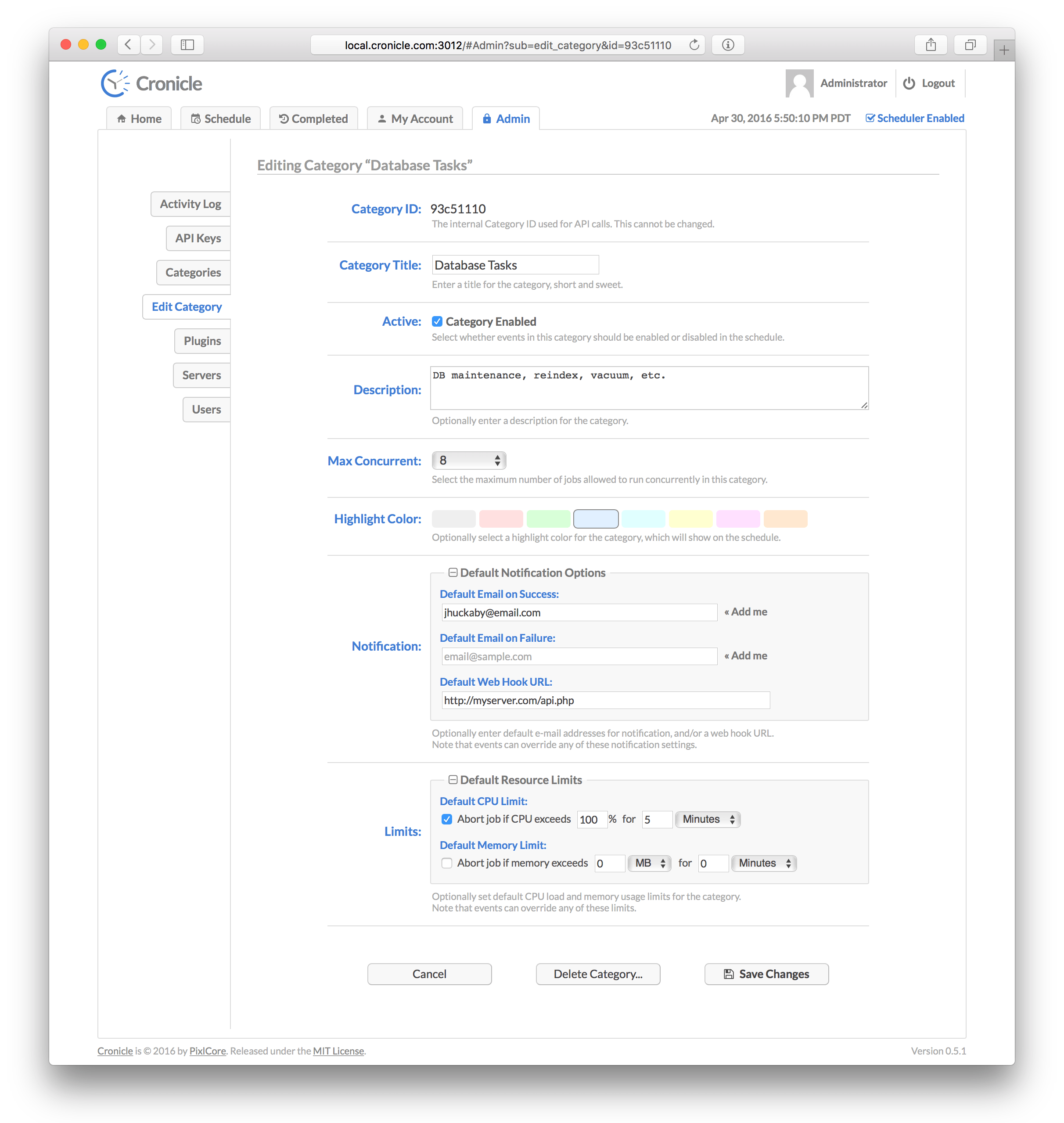

| Category | Events can be assigned to categories which define defaults and optionally a color highlight in the UI. |

| Event | An entry in the schedule, which may run once or many times at any interval. Each event points to a Plugin, and a server or group to run it. |

| Job | A running instance of an event. If an event is set to run hourly, then a new job will be created every hour. |

Please note that Cronicle currently only works on POSIX-compliant operating systems, which basically means Unix/Linux and OS X. You'll also need to have Node.js LTS pre-installed on your server. Please note that we only support the Active LTS versions of Node.js. Cronicle may not work on the "current" release channel. See Node.js Releases for details.

Once you have Node.js LTS installed, type this as root:

curl -s https://raw.githubusercontent.com/jhuckaby/Cronicle/master/bin/install.js | node

This will install the latest stable release of Cronicle and all of its dependencies under: /opt/cronicle/

If you'd rather install it manually (or something went wrong with the auto-installer), here are the raw commands:

mkdir -p /opt/cronicle

cd /opt/cronicle

curl -L https://github.com/jhuckaby/Cronicle/archive/v1.0.0.tar.gz | tar zxvf - --strip-components 1

npm install

node bin/build.js dist

Replace v1.0.0 with the desired Cronicle version from the release list, or master for the head revision (unstable).

If this is your first time installing, please read the Configuration section first. You'll likely want to customize a few configuration parameters in the /opt/cronicle/conf/config.json file before proceeding. At the very least, you should set these properties:

| Key | Description |

|---|---|

base_app_url |

A fully-qualified URL to Cronicle on your server, including the http_port if non-standard. This is used in e-mails to create self-referencing URLs. |

email_from |

The e-mail address to use as the "From" address when sending out notifications. |

smtp_hostname |

The hostname of your SMTP server, for sending mail. This can be 127.0.0.1 or localhost if you have sendmail running locally. |

secret_key |

For multi-server setups (see below) all your servers must share the same secret key. Any randomly generated string is fine. |

job_memory_max |

The default maximum memory limit for each job (can also be customized per event and per category). |

http_port |

The web server port number for the user interface. Defaults to 3012. |

Now then, the only other decision you have to make is what to use as a storage back-end. Cronicle can use local disk (easiest setup), Couchbase or Amazon S3. For single server installations, or even single primary with multiple workers, local disk is probably just fine, and this is the default setting. But if you want to run a true multi-server cluster with automatic primary failover, please see Multi-Server Cluster for details.

With that out of the way, run the following script to initialize the storage system. You only need to do this once, and only on the primary server. Do not run this on any worker servers:

/opt/cronicle/bin/control.sh setup

Among other things, this creates an administrator user account you can use to login right away. The username is admin and the password is admin. It is recommended you change the password as soon as possible, for security purposes (or just create your own administrator account and delete admin).

At this point you should be able to start the service and access the web UI. Enter this command:

/opt/cronicle/bin/control.sh start

Give it a minute to decide to become primary, then send your browser to the server on the correct port:

http://YOUR_SERVER_HOSTNAME:3012/

You only need to include the port number in the URL if you are using a non-standard HTTP port (see Web Server Configuration).

See the Web UI section below for instructions on using the Cronicle web interface.

For a single server installation, there is nothing more you need to do. After installing the package, running the bin/control.sh setup script and starting the service, Cronicle should be 100% ready to go. You can always add more servers later (see below).

The easiest multi-server Cronicle setup is a single "primary" server with one or more workers. This means that one server is the scheduler, so it keeps track of time, and assigns jobs for execution. Jobs may be assigned to any number of worker servers, and even the primary itself. Worker servers simply sit idle and wait for jobs to be assigned by the primary server. Workers never take over primary scheduling duties, even if the primary server goes down.

This is the simplest multi-server setup because the primary server can use local disk for all its storage. Workers do not need access to the file storage. This is the default configuration, so you don't have to change anything at all. What it means is, all the scheduling data, event categories, user accounts, sessions, plugins, job logs and other data is stored as plain JSON files on local disk. Cronicle can also be configured to use a NoSQL database such as Couchbase or Amazon S3, but this is not required.

So by default, when you run the setup script above, the current server is placed into a "Primary Group", meaning it is the only server that is eligible to become primary. If you then install Cronicle on additional servers, they will become workers only. You can change all this from the UI, but please read the next section before running multiple primary backup servers.

When installing Cronicle onto worker servers, please do not run the bin/control.sh setup script. Instead, simply copy over your conf/config.json file, and then start the service.

Cronicle also has the ability to run with one or more "backup" servers, which can become primary if need be. Failover is automatic, and the cluster negotiates who should be primary at any given time. But in order for this to work, all the primary eligible servers need access to the same storage data. This can be achieved in one of three ways:

See the Storage Configuration section below for details on these.

The other thing you'll need to do is make sure all your primary backup servers are in the appropriate server group. By default, a single "Primary Group" is created which only contains your primary primary server. Using the UI, you can simply change the hostname regular expression so it encompasses all your eligible servers, or you can just add additional groups that match each backup server. More details can be found in the Servers Tab section below.

You can run Cronicle behind a load balancer, as long as you ensure that only the primary server and eligible backup servers are in the load balancer pool. Do not include any worker-only servers, as they typically do not have access to the back-end storage system, and cannot serve up the UI.

You can then set the base_app_url configuration parameter to point to the load balancer, instead of an individual server, and also use that hostname when loading the UI in your browser.

Note that Web UI needs to make API calls and open WebSocket connections to the primary server directly, so it needs to also be accessible directly via its hostname.

You must set the web_direct_connect configuration property to true. This ensures that the Web UI will make API and WebSocket connections directly to the primary server, rather than via the load balancer hostname.

For teams setting up multi-server clusters, here are some operational concerns to keep in mind:

- All servers should have the same exact configuration file (

/opt/cronicle/conf/config.json). - All servers need to have correct clocks (timezones do not matter, clock sync does).

- Server auto-discovery happens via UDP broadcast on port 3014 (by default). This is not required.

- The primary server will also open TCP WebSocket connections to each worker on the web server port.

- Each server in the cluster needs to have a fully-qualified hostname that resolves in DNS.

- The server hostnames determine priority of which server becomes primary (alphabetical sort).

- All servers need to have unique hostnames (very bad things will happen otherwise).

- All servers need to have at least one active IPv4 interface.

- For the "live log" feature in the UI to work, the user needs a network route to the server running the job, via its hostname.

- If you have to change any server IP addresses, they'll have to be removed and re-added to the cluster.

- See the Cron Noncompliance section for differences in how Cronicle schedules events, versus the Unix Cron standard.

The main Cronicle configuration file is in JSON format, and can be found here:

/opt/cronicle/conf/config.json

Please edit this file directly. It will not be touched by any upgrades. A pristine copy of the default configuration can always be found here: /opt/cronicle/sample_conf/config.json.

Here are descriptions of the top-level configuration parameters:

This should be set to a fully-qualified URL, pointing to your Cronicle server, including the HTTP port number if non-standard. Do not include a trailing slash. This is used in e-mails to create self-referencing URLs. Example:

http://local.cronicle.com:3012

If you are running Cronicle behind a load balancer, this should be set to the load balanced virtual hostname.

The e-mail address to use as the "From" address when sending out notifications. Most SMTP servers require this to be a valid address to accept mail.

The hostname of your SMTP server, for sending mail. This can be set to 127.0.0.1 or localhost if you have sendmail running locally.

The port number to use when communicating with the SMTP server. The default is 25.

Set specific mailer options, such as SMTP SSL and authentication, passed directly to pixl-mail (and then to nodemailer). Example:

"mail_options": {

"secure": true,

"auth": { "user": "fsmith", "pass": "12345" },

"connectionTimeout": 10000,

"greetingTimeout": 10000,

"socketTimeout": 10000

}The connectionTimeout, greetingTimeout and socketTimeout properties are all expressed in milliseconds.

You can also use mail_options to use local sendmail, if you have that configured on your server. To do this, set the following properties, and tune as needed:

"mail_options": {

"sendmail": true,

"newline": "unix",

"path": "/usr/sbin/sendmail"

}You can omit smtp_hostname and smtp_port if you are using sendmail.

For multi-server setups, all your Cronicle servers need to share the same secret key. Any randomly generated string is fine.

The install script will automatically set to this to a random string for you, but you are free to change it to anything you want. Just make sure all your servers have the same shared secret key. This is used to authenticate internal server-to-server API requests.

The directory where logs will be written, before they are archived. This can be a partial path, relative to the Cronicle base directory (/opt/cronicle) or a full path to a custom location. It defaults to logs (i.e. /opt/cronicle/logs).

The filename to use when writing logs. You have three options here: a single combined log file for all logs, multiple log files for each component, or multiple log files for each category (debug, transaction, error). See the Logs section below for details.

This is an array of column IDs to log. You are free to reorder or remove some of these, but do not change the names. They are specific IDs that match up to log function calls in the code. See the Logs section below for details.

Every night at midnight (local server time), the logs can be archived (gzipped) to a separate location. This parameter specifies the path, and the directory naming / filenaming convention of the archive files. It can utilize date placeholders including [yyyy], [mm] and [dd].

This can be a partial path, relative to the Cronicle base directory (/opt/cronicle) or a full path to a custom location. It defaults to logs/archives/[yyyy]/[mm]/[dd]/[filename]-[yyyy]-[mm]-[dd].log.gz.

Your job logs (i.e. the output from Plugins) are stored separately from the main Cronicle log files. They are actually written to the Cronicle storage system, and made available in the UI. This parameter allows you to copy them to a separate directory on disk, presumably for some kind of log processing system (such as Splunk) to then pick them up, index them, etc. It is optional, and defaults to disabled.

This can be a partial path, relative to the Cronicle base directory (/opt/cronicle) or a full path to a custom location.

The queue directory is used internally for misc. background tasks, such as handling detached jobs sending messages back to the daemon. You shouldn't ever have to deal with this directly, and the directory is auto-created on install.

This can be a partial path, relative to the Cronicle base directory (/opt/cronicle) or a full path to a custom location.

The PID file is simply a text file containing the Process ID of the main Cronicle daemon. It is used by the control.sh script to stop the daemon, and detect if it is running. You should never have to deal with this file directly, and it defaults to living in the logs directory which is auto-created.

This can be a partial path, relative to the Cronicle base directory (/opt/cronicle) or a full path to a custom location. However, it should probably not be changed, as the control.sh script expects it to live in logs/cronicled.pid.

The level of verbosity in the debug logs. It ranges from 1 (very quiet) to 10 (extremely loud). The default value is 9.

Cronicle needs to run storage maintenance once per day, which generally involves deleting expired records and trimming lists which have grown too large. This only runs on the primary server, and typically only takes a few seconds, depending on the number of events you have. The application is still usable during this time, but UI performance may be slightly impacted.

By default the maintenance is set to run at 4:00 AM (local server time). Feel free to change this to a more convenient time for your server environment. The format of the parameter is HH:MM.

This parameter controls how many items are kept in historical lists such as the Activity Log, Completed Jobs, and Event History. When this limit is exceeded, the oldest entries are removed during the nightly maintenance run. The default limit is 10000 items.

This has no real effect on performance -- only space on disk (or Couchbase / S3).

Completed job data is only kept around for this number of days. This includes job logs and the metadata for each completed job (stats, etc.). The default value is 180 days, but feel free to tune this for your own environment.

This has no real effect on performance -- only space on disk (or Couchbase / S3).

This is the number of seconds to allow child processes to exit after sending a TERM signal (for aborted jobs, server shutdown, etc.). If they do not exit within the specified timeout, a KILL signal is sent. The default value is 10 seconds.

When the primary server loses connectivity with a worker that had running jobs on it, they go into a "limbo" state for a period of time, before they are finally considered lost. The dead_job_timeout parameter specifies the amount of time before these wayward jobs are aborted (and possibly retried, depending on the event settings). The default value is 120 seconds.

This parameter exists because certain networks may have unreliable connections between servers, and it is possible a server may drop for a few seconds, then come right back. If a short hiccup like that occurs, you probably don't want to abort all the running jobs right away. Also, when you are upgrading Cronicle itself, you don't want detached jobs to be interrupted.

The worst case scenario is that a remote server with running jobs goes MIA for longer than the dead_job_timeout, the primary server aborts all the jobs, then the server reappears and finishes the jobs. This creates a bit of a mess, because the jobs are reported as both errors and successes. The latter success prevails in the end, but the errors stay in the logs and event history.

For multi-server clusters, this specifies how often the primary server should send out pings to worker servers, to let them know who is the boss. The default is 20 seconds.

For multi-server clusters, this specifies how long to wait after receiving a ping, before a backup server considers the primary server to be dead. At this point a new primary server will be chosen. The default value is 60 seconds.

For auto-discovery of nearby servers, this specifies the UDP port to use for broadcasting. Do not worry if your network doesn't support UDP broadcast, as this is just an optional feature where nearby servers will show up in the UI. The default port is 3014.

When the scheduler first starts up on the primary server, it waits for a few seconds before actually assigning jobs. This is to allow all the servers in the cluster to check in and register themselves with the primary server. The default value is 10 seconds, which should be plenty of time.

Once a server becomes primary, it should immediately attempt to connect to all remote servers right away. So in theory this grace period could be as short as 1 second or less, but a longer delay allows for any random network connectivity errors to work themselves out.

While you can specify a web hook in the UI per each category and/or per each event, this parameter allows you to define a universal one, which is always fired for every job regardless of UI settings. It should be a fully-qualified URL to an API endpoint that accepts an HTTP POST containing JSON data.

Web hooks are fired at the start and the end of each job (success or fail). A JSON record is sent in the HTTP POST body, which contains all the relevant information about the job, including an action property, which will be set to job_start at the start and job_complete at the end of the job. See the Web Hooks section below for more on the data format.

To include custom HTTP request headers with your web hook, append them onto the end of the URL using this format: [header: My-Header: My-Value]. Make sure to include a space before the opening bracket. Example URL:

https://myserver.com/api/chat.postMessage [header: My-Header: My-Value]

If you need to include custom JSON data with the web hook HTTP POST, you can do so by specifying a web_hook_custom_data property, and any keys/values will be merged in with the event data as it is sent to the web hook URL. Example:

"web_hook_custom_data": {

"my_custom_key1": "My custom value 1",

"my_custom_key2": "My custom value 2"

}In this example my_custom_key1 and my_custom_key2 will be merged in with the event data that usually accompanies the web hook post data. See the Web Hooks section below for more on the data format.

If you need to customize the low-level properties sent to the Node.js http.request method for making outbound web hook requests, use the web_hook_custom_opts property. Using this you can set things like a proxy host and port. Example use:

"web_hook_custom_opts": {

"host": "my-corp-proxy.com",

"port": 8080

}See the http.request docs for all the possible properties you can set here.

The web hook JSON POST data includes a text property which is a simple summary of the action taking place, which is compatible with Slack Webhook Integrations. These text strings are generated based on the action, and use the following templates:

"web_hook_text_templates": {

"job_start": "Job started on [hostname]: [event_title] [job_details_url]",

"job_complete": "Job completed successfully on [hostname]: [event_title] [job_details_url]",

"job_failure": "Job failed on [hostname]: [event_title]: Error [code]: [description] [job_details_url]",

"job_launch_failure": "Failed to launch scheduled event: [event_title]: [description] [edit_event_url]"

}You can customize these text strings by including a web_hook_text_templates object in your configuration, and setting each of the action properties within. Also, you can use this to disable any of the web hook actions, by simply removing certain action keys. For example, if you don't want to fire a web hook for starting a job, remove the job_start key. If you only want web hooks to fire for errors, remove both the job_start and job_complete keys.

The text string templates can use any data values from the web hook JSON data by inserting [square_bracket] placeholders. See the Web Hooks section below for more on the data format, and which values are available.

If you are having trouble getting HTTPS web hooks or SSL SMTP e-mails to work, you might need to set ssl_cert_bypass to true. This causes Node.js to blindly accept all SSL connections, even when it cannot validate the SSL certificate. This effectively sets the following environment variable at startup:

process.env.NODE_TLS_REJECT_UNAUTHORIZED = "0";Please only do this if you understand the security ramifications, and completely trust the host(s) you are connecting to, and the network you are on. Skipping the certificate validation step should really only be done in special circumstances, such as trying to hit one of your own internal servers with a self-signed cert.

For legacy compatibility, the old web_hook_ssl_cert_bypass property is still accepted, and has the same effect as ssl_cert_bypass.

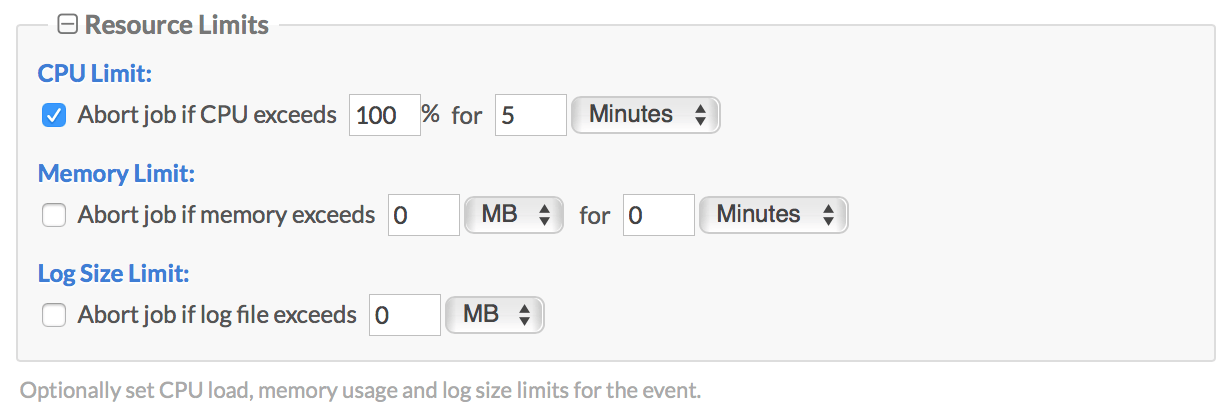

This parameter allows you to set a default memory usage limit for jobs, specified in bytes. This is measured as the total usage of the job process and any sub-processes spawned or forked by the main process. If the memory limit is exceeded, the job is aborted. The default value is 1073741824 (1 GB). To disable set it to 0.

Memory limits can also be customized in the UI per each category and/or per each event (see Event Resource Limits below). Doing either overrides the primary default.

When using the job_memory_max feature, you can optionally specify how long a job is allowed exceed the maximum memory limit until it is aborted. For example, you may want to allow jobs to spike over 1 GB of RAM, but not use it sustained for more a certain amount of time. That is what the job_memory_sustain property allows, and it accepts a value in seconds. It defaults to 0 (abort instantly when exceeded).

Memory limits can also be customized in the UI per each category and/or per each event (see Event Resource Limits below). Doing either overrides the default.

This parameter allows you to set a default CPU usage limit for jobs, specified in percentage of one CPU core. This is measured as the total CPU usage of the job process and any sub-processes spawned or forked by the main process. If the CPU limit is exceeded, the job is aborted. The default value is 0 (disabled). For example, to allow jobs to use up to 2 CPU cores, specify 200 as the limit.

CPU limits can also be customized in the UI per each category and/or per each event (see Event Resource Limits below). Doing either overrides the default.

When using the job_cpu_max feature, you can optionally specify how long a job is allowed exceed the maximum CPU limit until it is aborted. For example, you may want to allow jobs to use up to 2 CPU cores, but not use them sustained for more a certain amount of time. That is what the job_cpu_sustain property allows, and it accepts a value in seconds. It defaults to 0 (abort instantly when exceeded).

CPU limits can also be customized in the UI per each category and/or per each event (see Event Resource Limits below). Doing either overrides the default.

This parameter allows you to set a default log file size limit for jobs, specified in bytes. If the file size limit is exceeded, the job is aborted. The default value is 0 (disabled).

Job log file size limits can also be customized in the UI per each category and/or per each event (see Event Resource Limits below). Doing either overrides the default.

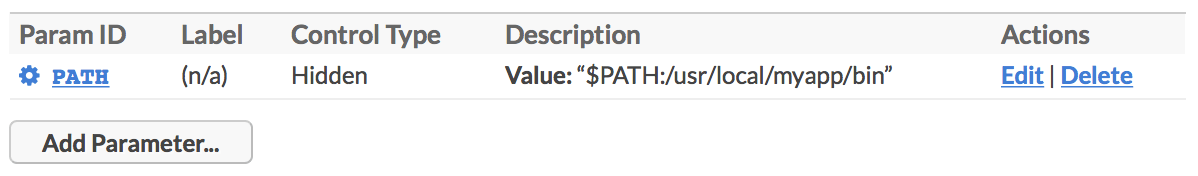

Place any key/value pairs you want into the job_env object, and they will become environment variables passed to all job processes, as they are spawned. Note that these can be overridden by event parameters with the same names. The job_env can be thought of as a way to specify universal default environment variables for all your jobs. Example:

"job_env": {

"TZ": "America/Los_Angeles",

"LANG": "en_US.UTF-8"

},Setting this parameter to true will force the Cronicle servers to connect to each other using hostnames rather than LAN IP addresses. This is mainly for special situations where your local server IP addresses may change, and you would prefer to rely on DNS instead. The default is false (disabled), meaning connect using IP addresses.

When this property is set to false (which is the default), the Cronicle Web UI will connect to whatever hostname/port is on the URL. It is expected that this hostname/port will always resolve to your primary server. This is useful for single server setups, situations when your users do not have direct access to your Cronicle servers via their IPs or hostnames, or if you are running behind some kind of reverse proxy.

If you set this parameter to true, then the Cronicle web application will connect directly to your individual Cronicle servers. This is more for multi-server configurations, especially when running behind a load balancer with multiple backup servers. The Web UI must always connect to the primary server, so if you have multiple backup servers, it needs a direct connection.

Note that the ability to watch live logs for active jobs requires a direct web socket connection to the server running the job. For that feature, this setting has no effect (it always attempts to connect directly).

Setting this parameter to true will force Cronicle's Web UI to connect to the back-end servers using their hostnames rather than IP addresses. This includes both AJAX API calls and Websocket streams. You should only need to enable this in special situations where your users cannot access your servers via their LAN IPs, and you need to proxy them through a hostname (DNS) instead. The default is false (disabled), meaning connect using IP addresses.

This property only takes effect if web_direct_connect is also set to true.

This is an advanced configuration property that you will probably never need to worry about. This allows you to customize the socket.io transports used to connect to the server for real-time updates. By default, this property is set internally to an array containing the websocket transport only, e.g.

"socket_io_transports": ["websocket"]However, if you are trying to run Cronicle in an environment where WebSockets are not allowed (perhaps an ancient firewall or proxy), you can change this array to contain the polling transport first, e.g.

"socket_io_transports": ["polling", "websocket"]However, please only do this if you know exactly what you are doing, and why.

You can optionally set a global maximum number of concurrent jobs to allow. This is across all servers and categories, and is designed as an "emergency brake" for runaway events. The property is called max_jobs. The default is 0 (no limit). Example:

"max_jobs": 256The Storage object contains settings for the Cronicle storage system. This is built on the pixl-server-storage module, which can write everything to local disk (the default), Couchbase or Amazon S3.

To select a storage engine, place one of the following values into the engine property:

The default storage method is to use local disk (can also be an NFS mount, for multi-server setups with failover support). For this, set the engine property to Filesystem, and declare a sub-object with the same name, with a couple more properties:

{

"Storage": {

"engine": "Filesystem",

"Filesystem": {

"base_dir": "data",

"key_namespaces": 1

}

}

}The base_dir is the base directory to store everything under. It can be a fully-qualified filesystem path, or a relative path to the Cronicle base directory (e.g. /opt/cronicle). In this case it will be /opt/cronicle/data.

For more details on using the Filesystem as a backing store, please read the Local Filesystem section in the pixl-server-storage docs.

Please note that as of this writing, Cronicle only supports Couchbase Client v2, so you need to force install version 2.6.12 (see instructions below). Work is underway to support the v3 API, which has many breaking changes.

To use Couchbase as a backing store for Cronicle, please read the Couchbase section in the pixl-server-storage docs. It has complete details for how to setup the storage object. Example configuration:

{

"Storage": {

"engine": "Couchbase",

"Couchbase": {

"connectString": "couchbase://127.0.0.1",

"bucket": "default",

"username": "",

"password": "",

"serialize": false,

"keyPrefix": "cronicle"

}

}

}If you are sharing a bucket with other applications, use the keyPrefix property to keep the Cronicle data separate, in its own "directory". For example, set keyPrefix to "cronicle" to keep all the Cronicle-related records in a top-level "cronicle" directory in the bucket.

Note that for Couchbase Server v5.0+ (Couchbase Node SDK 2.5+), you will have to supply both a username and password for a valid user created in the Couchbase UI. Prior to v5+ you could omit the username and only specify a password, or no password at all if your bucket has no authentication.

You'll also need to install the npm couchbase module, but it must be version 2:

cd /opt/cronicle

npm install [email protected]

After configuring Couchbase, you'll need to run the Cronicle setup script manually, to recreate all the base storage records needed to bootstrap the system:

/opt/cronicle/bin/control.sh setup

To use Amazon S3 as a backing store for Cronicle, please read the Amazon S3 section in the pixl-server-storage docs. It has complete details for how to setup the storage object. Example configuration:

{

"Storage": {

"engine": "S3",

"AWS": {

"accessKeyId": "YOUR_AMAZON_ACCESS_KEY",

"secretAccessKey": "YOUR_AMAZON_SECRET_KEY",

"region": "us-west-1",

"correctClockSkew": true,

"maxRetries": 5,

"httpOptions": {

"connectTimeout": 5000,

"timeout": 5000

}

},

"S3": {

"keyPrefix": "cronicle",

"fileExtensions": true,

"params": {

"Bucket": "YOUR_S3_BUCKET_ID"

}

}

}

}If you are sharing a bucket with other applications, use the keyPrefix property to keep the Cronicle data separate, in its own "directory". For example, set keyPrefix to "cronicle" to keep all the Cronicle-related records in a top-level "cronicle" directory in the bucket. A trailing slash will be automatically added to the prefix if missing.

It is recommended that you always set the S3 fileExtensions property to true for new installs. This makes the Cronicle S3 records play nice with sync / copy tools such as Rclone. See Issue #60 for more details. Do not change this property on existing installs -- use the Storage Migration Tool.

To use S3 you'll also need to install the npm aws-sdk module:

cd /opt/cronicle

npm install aws-sdk

After configuring S3, you'll need to run the Cronicle setup script manually, to recreate all the base storage records needed to bootstrap the system:

/opt/cronicle/bin/control.sh setup

If you're worried about Amazon S3 costs, you probably needn't. With a typical setup running ~30 events per hour (about ~25,000 events per month), this translates to approximately 350,000 S3 PUTs plus 250,000 S3 GETs, or about $2 USD per month. Add in 100GB of data storage and it's another $3.

Cronicle has an embedded web server which handles serving up the user interface, as well as some server-to-server communication that takes place between the primary and workers. This is configured in the WebServer object, and there are only a handful of parameters you should ever need to configure:

{

"WebServer": {

"http_port": 3012,

"https": 0,

"https_port": 3013,

"https_cert_file": "conf/ssl.crt",

"https_key_file": "conf/ssl.key"

}

}Changing the http_port is probably the most common thing you will want to customize. For example, if you don't have anything else running on port 80, you will probably want to change it to that, so you can access the UI without entering a port number.

This is also where you can enable HTTPS, if you want the UI to be SSL encrypted. Set the https property to 1 to enable, and configure the https_port as you see fit (the standard HTTPS port is 443). You will have to supply your own SSL certificate files (sample self-signed certs are provided for testing, but they will generate browser warnings).

For more details on the web server component, please see the pixl-server-web module documentation.

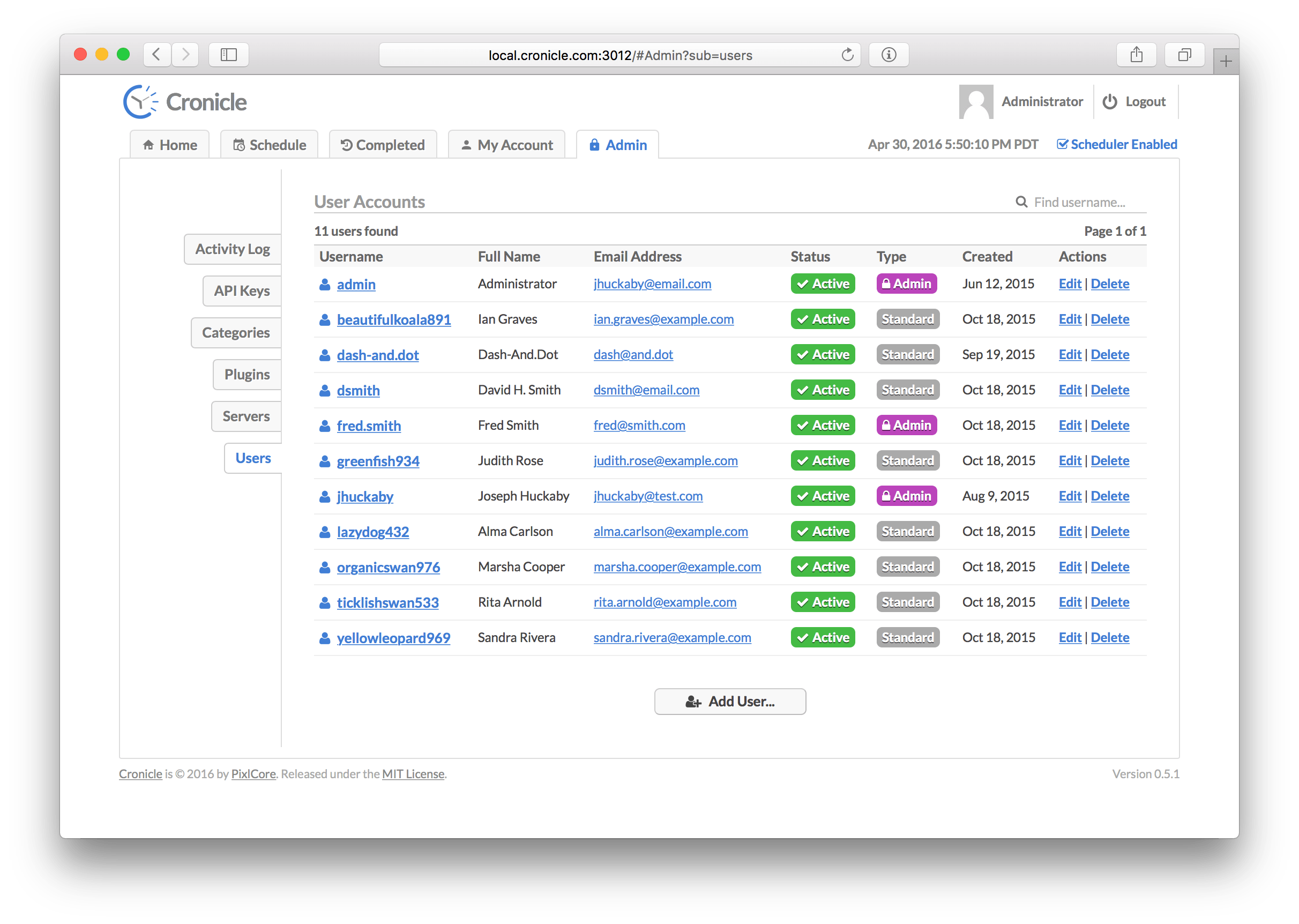

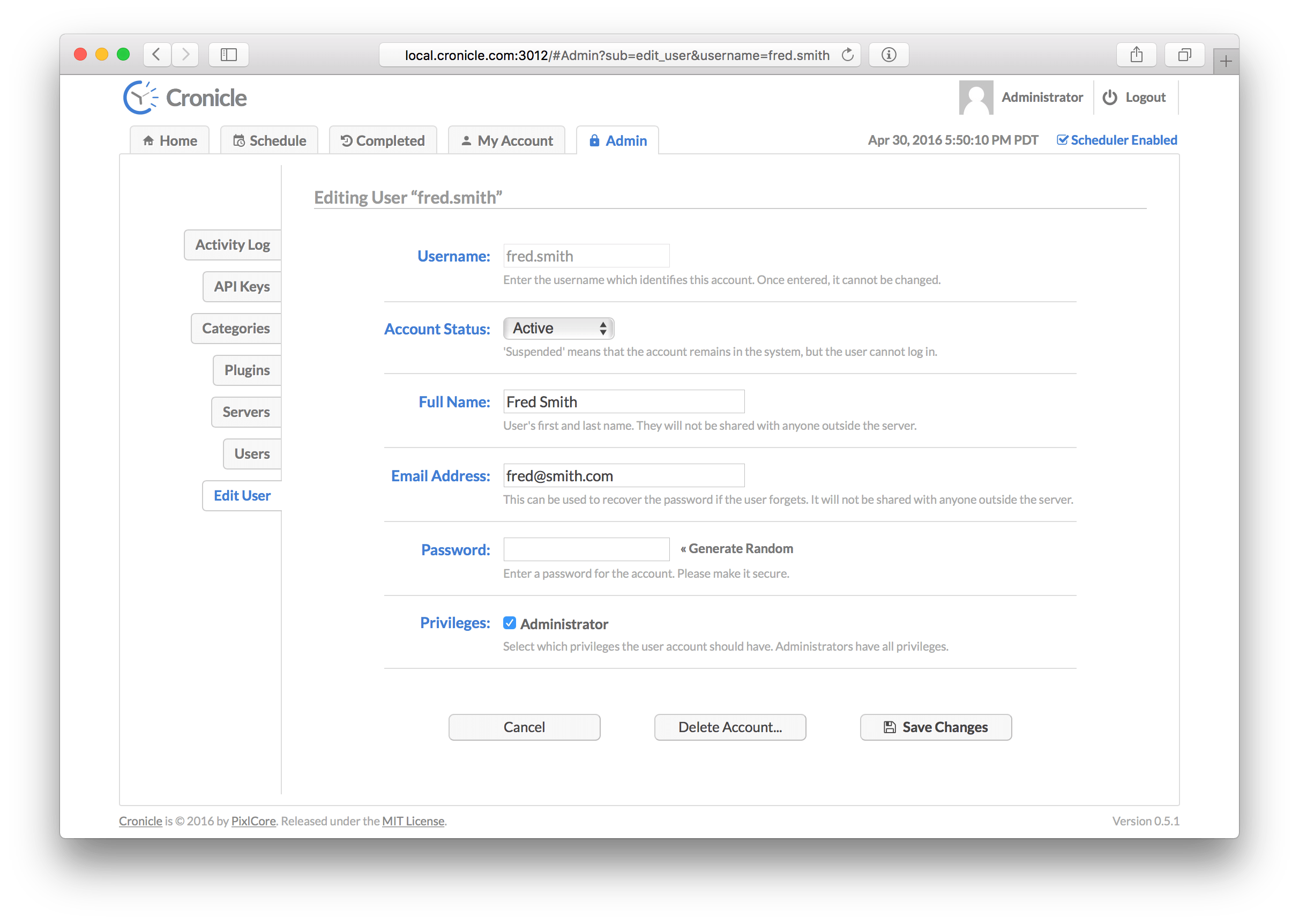

Cronicle has a simple user login and management system, which is built on the pixl-server-user module. It handles creating new users, assigning permissions, and login / session management. It is configured in the User object, and there are only a couple of parameters you should ever need to configure:

{

"User": {

"free_accounts": 0,

"default_privileges": {

"admin": 0,

"create_events": 1,

"edit_events": 1,

"delete_events": 1,

"run_events": 0,

"abort_events": 0,

"state_update": 0

}

}

}The free_accounts property specifies whether guests visiting the UI can create their own accounts, or not. This defaults to 0 (disabled), but you can set it to 1 to enable. This feature should only be used when your install of Cronicle is running on a private network, and you trust all your employees.

The default_privileges object specifies which privileges new accounts will receive by default. Here is a list of all the possible privileges and what they mean:

| Privilege ID | Description |

|---|---|

admin |

User is a full administrator. This automatically grants ALL privileges, current and future. |

create_events |

User is allowed to create new events and add them to the schedule. |

edit_events |

User is allowed to edit and save events -- even those created by others. |

delete_events |

User is allowed to delete events -- event those created by others. |

run_events |

User is allowed to run events on-demand by clicking the "Run" button in the UI. |

abort_events |

User is allowed to abort jobs in progress, even those for events created by others. |

state_update |

User is allowed to enable or disable the primary scheduler. |

By default new users have the create_events, edit_events and delete_events privileges, and nothing else. Note that when an administrator creates new accounts via the UI, (s)he can customize the privileges at that point. The configuration only sets the defaults.

For more details on the user manager component, please see the pixl-server-user module documentation.

Cronicle will send a number of different types of e-mails in response to certain events. These are mostly confirmations of actions, or just simple notifications. Most of these can be disabled in the UI if desired. The e-mail content is also configurable, including the From and Subject headers, and is based on plain text e-mail template files located on disk:

| Action | Email Template | Description |

|---|---|---|

| New User Account | conf/emails/welcome_new_user.txt |

Sent when a new user account is created. |

| Changed Password | conf/emails/changed_password.txt |

Sent when a user changes their password. |

| Recover Password | conf/emails/recover_password.txt |

Sent when a user requests password recovery. |

| Job Succeeded | conf/emails/job_success.txt |

Conditionally sent when a job completes successfully (depends on event configuration). |

| Job Failed | conf/emails/job_fail.txt |

Conditionally sent when a job fails (depends on event configuration). |

| Event Error | conf/emails/event_error.txt |

Sent when a job fails to launch (depends on event configuration). |

Feel free to edit these files to your liking. Note that any text in [/square_brackets] is a placeholder which gets swapped out with live data relevant to the event which fired off the e-mail.

Here is an example e-mail template file:

To: [/notify_success]

From: [/config/email_from]

Subject: Cronicle Job Completed Successfully: [/event_title]

Date/Time: [/nice_date_time]

Event Title: [/event_title]

Category: [/category_title]

Server Target: [/nice_target]

Plugin: [/plugin_title]

Job ID: [/id]

Hostname: [/hostname]

PID: [/pid]

Elapsed Time: [/nice_elapsed]

Performance Metrics: [/perf]

Avg. Memory Usage: [/nice_mem]

Avg. CPU Usage: [/nice_cpu]

Job Details:

[/job_details_url]

Job Debug Log ([/nice_log_size]):

[/job_log_url]

Edit Event:

[/edit_event_url]

Description:

[/description]

Event Notes:

[/notes]

Regards,

The Cronicle Team

The stock e-mail templates shipped with Cronicle are plain text, but you can provide your own rich HTML e-mail templates if you want. Simply start the e-mail body content (what comes after the Subject line) with an HTML open tag, e.g. <div>, and the e-mails will be sent as HTML instead of text.

You can include any property from the main conf/config.json file by using the syntax [/config/KEY]. Also, to include environment variables, use the syntax [/env/ENV_KEY], for example [/env/NODE_ENV].

This section describes the Cronicle web user interface. It has been tested extensively in Safari, Chrome and Firefox. Recent versions of IE should also work (11 and Edge).

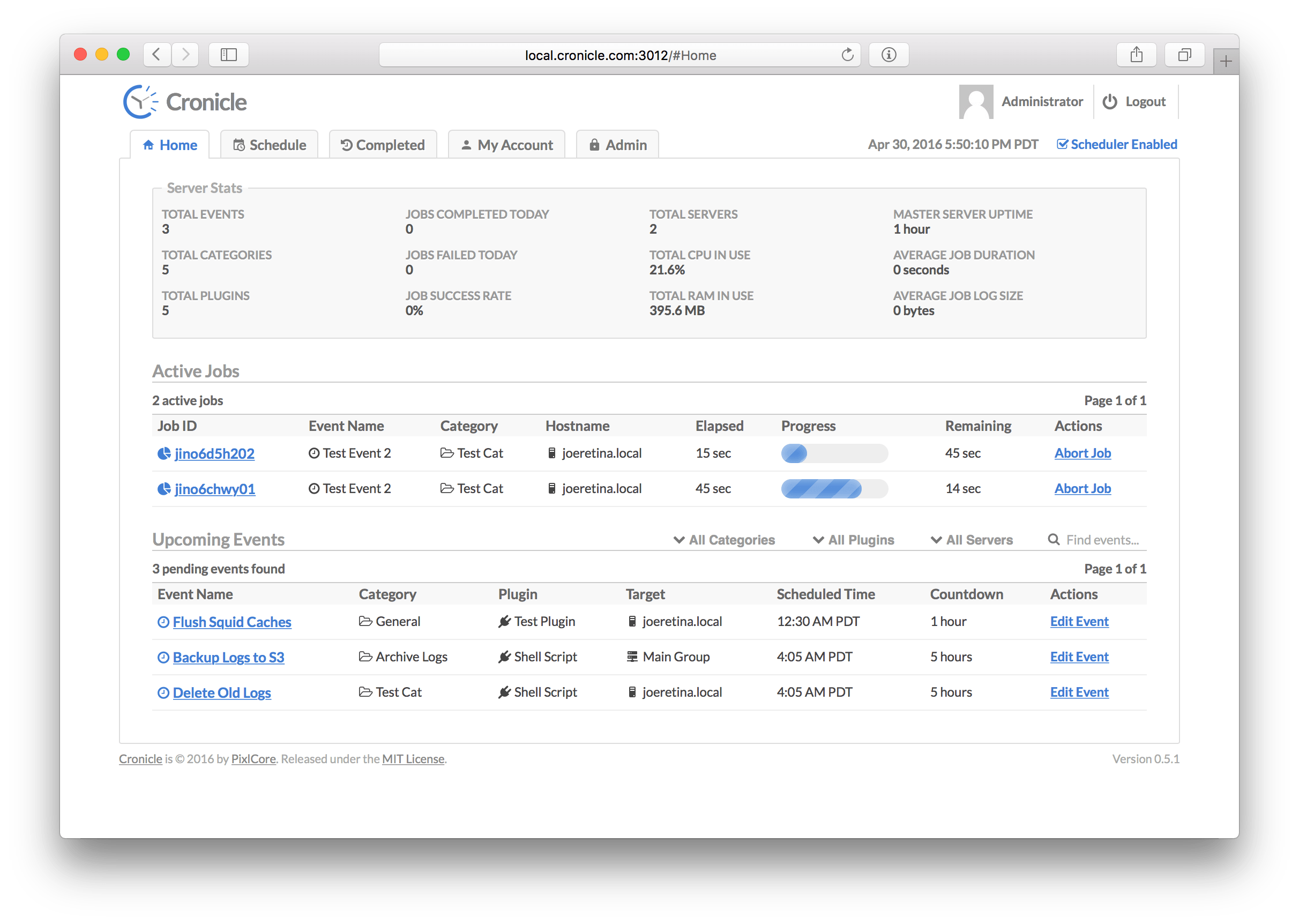

The Home tab, also known as the dashboard, is the default page shown after you log in. It displays basic information about the application, currently active (running) jobs, and a list of upcoming jobs in the next 24 hours. The page is split vertically into three main sections:

This section contains a summary of various Cronicle stats. Some are current totals, and others are daily totals. Here is a list of everything that is displayed:

| Statistic | Description |

|---|---|

| Total Events | Current number of active events in the schedule. |

| Total Categories | Current number of event categories in the system. |

| Total Plugins | Current number of registered Plugins in the system. |

| Jobs Completed Today | Number of jobs completed today (resets at midnight, local server time). |

| Jobs Failed Today | Number of jobs that failed today (resets at midnight, local server time). |

| Job Success Rate | Percentage of completed jobs that succeeded today (resets at midnight, local server time). |

| Total Servers | Current total number of servers in the Cronicle cluster. |

| Total CPU in Use | Current total CPU in use (all servers, all jobs, all processes). |

| Total RAM in Use | Current total RAM in use (all servers, all jobs, all processes). |

| Primary Server Uptime | Elapsed time since Cronicle on the primary server was restarted. |

| Average Job Duration | The average elapsed time for all completed jobs today (resets at midnight, local server time). |

| Average Job Log Size | The average job log file size for all completed jobs today (resets at midnight, local server time). |

This table lists all the currently active (running) jobs, and various information about them. The table columns are:

| Column | Description |

|---|---|

| Job ID | A unique ID assigned to the job. Click this to see live job progress (see Job Details Tab below). |

| Event Name | The name of the scheduled event which started the job. |

| Category | The category to which the event is assigned. |

| Hostname | The server hostname which is running the job. |

| Elapsed | The current elapsed time since the job started. |

| Progress | A visual representation of the job's progress, if available. |

| Remaining | The estimated remaining time, if available. |

| Actions | Click Abort to cancel the job. |

This table lists all the upcoming scheduled events in the next 24 hours, and various information about them. The table columns are:

| Column | Description |

|---|---|

| Event Name | The name of the scheduled event. Click this to edit the event (see Edit Event Tab below). |

| Category | The category to which the event is assigned. |

| Plugin | The Plugin which will be loaded to run the event. |

| Target | The server target (server group or individual server hostname) which will run the event. |

| Scheduled Time | When the event is scheduled to run (in your local timezone unless otherwise specified). |

| Countdown | How much time remains until the event runs. |

| Actions | Click Edit Event to edit the event (see Edit Event Tab below). |

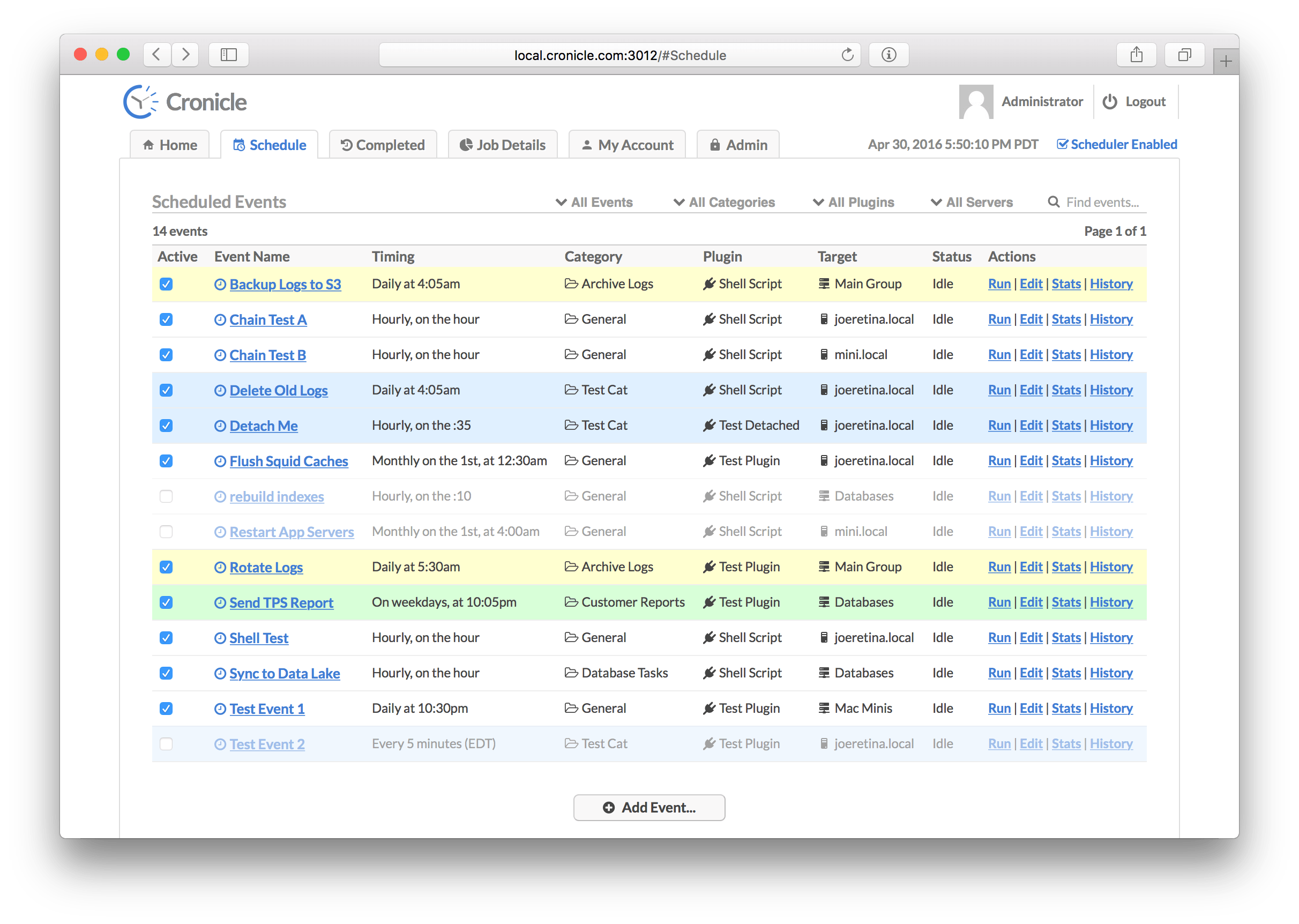

This tab displays the list of all events currently in the schedule, including both active and disabled events. From here you can add new events, edit existing events, run events on-demand, and jump to locations such as Event History and Event Stats. The schedule table has the following columns:

| Column | Description |

|---|---|

| Active | This checkbox indicates whether the event is active or disabled. Click it to toggle the state. |

| Event Name | The name of the scheduled event. Click this to edit the event (see Edit Event Tab below). |

| Timing | A summary of the event's timing settings (daily, hourly, etc.). |

| Category | The category to which the event is assigned. |

| Plugin | The Plugin which will be loaded to run the event. |

| Target | The server target (server group or individual server hostname) which will run the event. |

| Status | Current status of the event (idle or number of running jobs). |

| Actions | A list of actions to run on the event. See below. |

Here are the actions you can run on each event from the Schedule tab:

| Action | Description |

|---|---|

| Run | Immediately runs the event (starts an on-demand job), regardless of the event's timing settings. |

| Edit | This jumps over to the Edit Event Tab to edit the event. |

| Stats | This jumps over to the Event Stats Tab to see statistics about the event and past jobs. |

| History | This jumps over to the Event History Tab to see the event's history of completed jobs. |

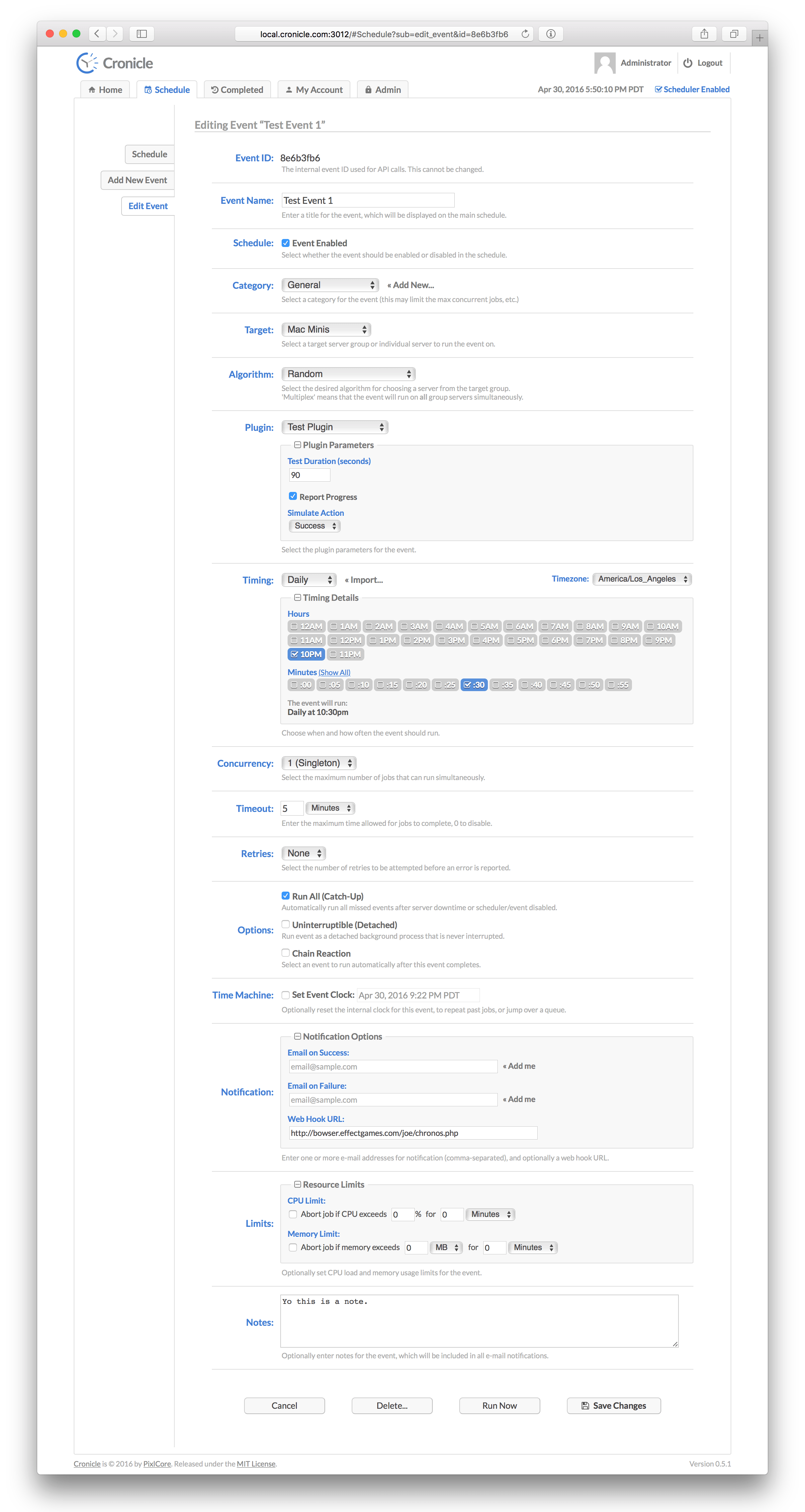

The Edit Event Tab displays a form for editing scheduled events, and creating new ones. Here are all the form fields and what they mean:

Each event has a unique ID which is used when making API calls, and can be ignored otherwise. This is only displayed when editing events.

Each event has a name, which can be anything you like. It is displayed on the Schedule tab, and in reports, e-mails, etc.

This checkbox specifies whether the event is enabled (active) in the scheduler, and will fire off jobs according to the Event Timing, or disabled. If disabled, you can still run on-demand jobs by clicking the "Run Now" button.

All events are assigned to a particular category. If you don't want to create categories, just assign your events to the provided "General" category. Categories can define limits such as max concurrent jobs, max RAM per job and max CPU per job. See the Categories Tab below for more details on creating categories.

In a multi-server cluster, events can be targeted to run on individual servers, or server groups. Both are listed in the drop-down menu. If a server group is targeted, one of the group's servers is chosen each time the event runs a job. You can decide which algorithm to use for picking servers from the group (see below). Also, see the Servers Tab for more details on creating server groups.

When you target a server group for your event, a supplementary menu appears to select an "algorithm". This is simply the method by which Cronicle picks a server in the group to run your job. The default is "Random" (i.e. select a random server from the group for each job), but there are several others as well:

| Algorithm ID | Algorithm Name | Description |

|---|---|---|

random |

Random | Pick a random server from the group. |

round_robin |

Round Robin | Pick each server in sequence (alphabetically sorted). |

least_cpu |

Least CPU Usage | Pick the server with the least amount of CPU usage in the group. |

least_mem |

Least Memory Usage | Pick the server with the least amount of memory usage in the group. |

prefer_first |

Prefer First | Prefer the first server in the group (alphabetically sorted), only picking alternatives if the first server is unavailable. |

prefer_last |

Prefer Last | Prefer the last server in the group (alphabetically sorted), only picking alternatives if the last server is unavailable. |

multiplex |

Multiplex | Run the event on all servers in the group simultaneously (see below). |

If the event targets a server group, you have the option of "multiplexing" it. That is, the event will run jobs on all the servers in the group at once, rather than picking one server at random.

When this feature is enabled, an optional "Stagger" text field will appear. This allows you to stagger (progressively delay) the launch of jobs across the servers, so they don't all start at the same exact time.

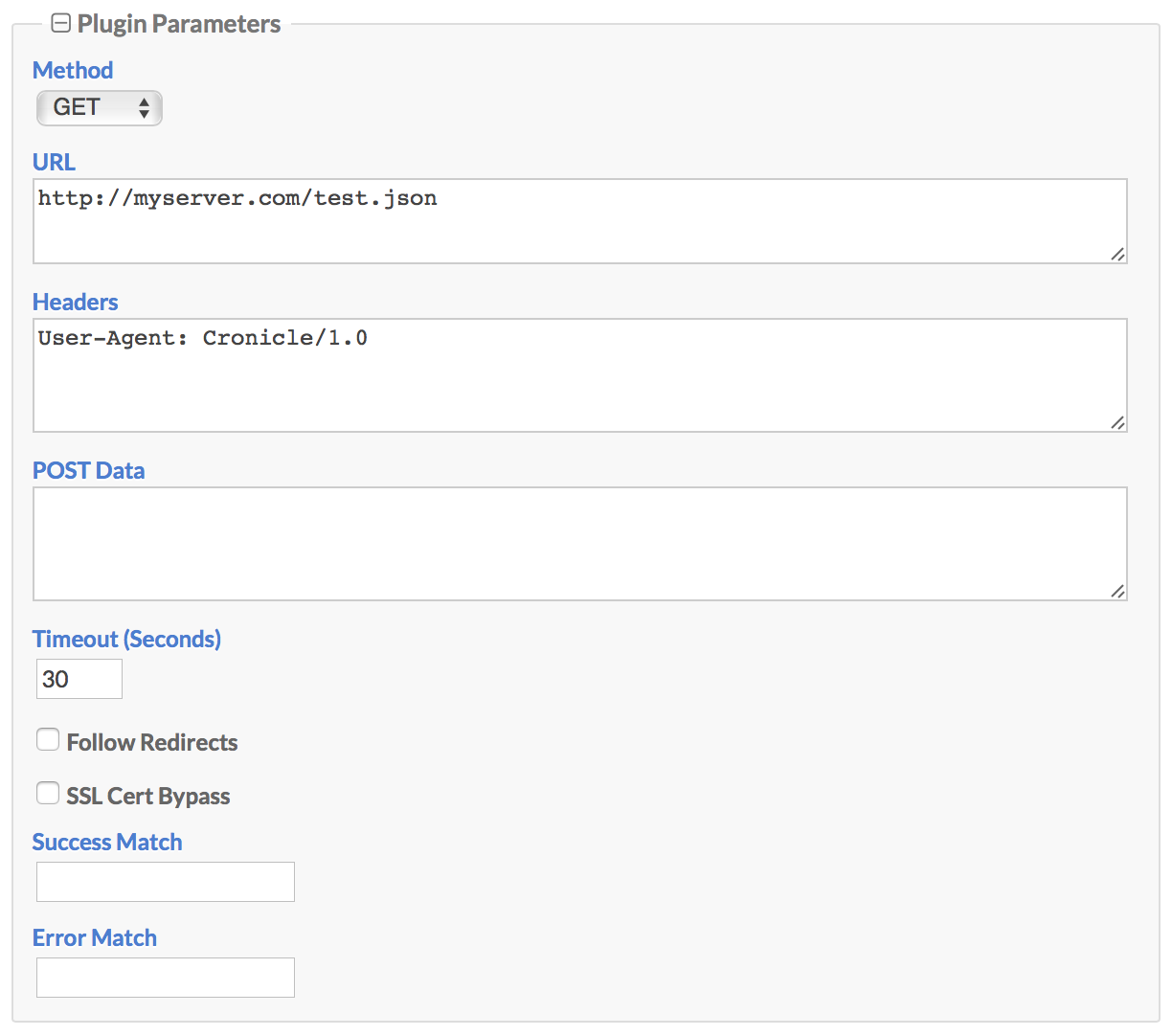

Whenever Cronicle runs an event, a "Plugin" is loaded to handle the job. This is basically a shell command which runs as its own process, reads JSON from STDIN to receive metadata about the job, and writes JSON to STDOUT to report progress and completion. Plugins can be written in virtually any language. See the Plugins section below for more details.

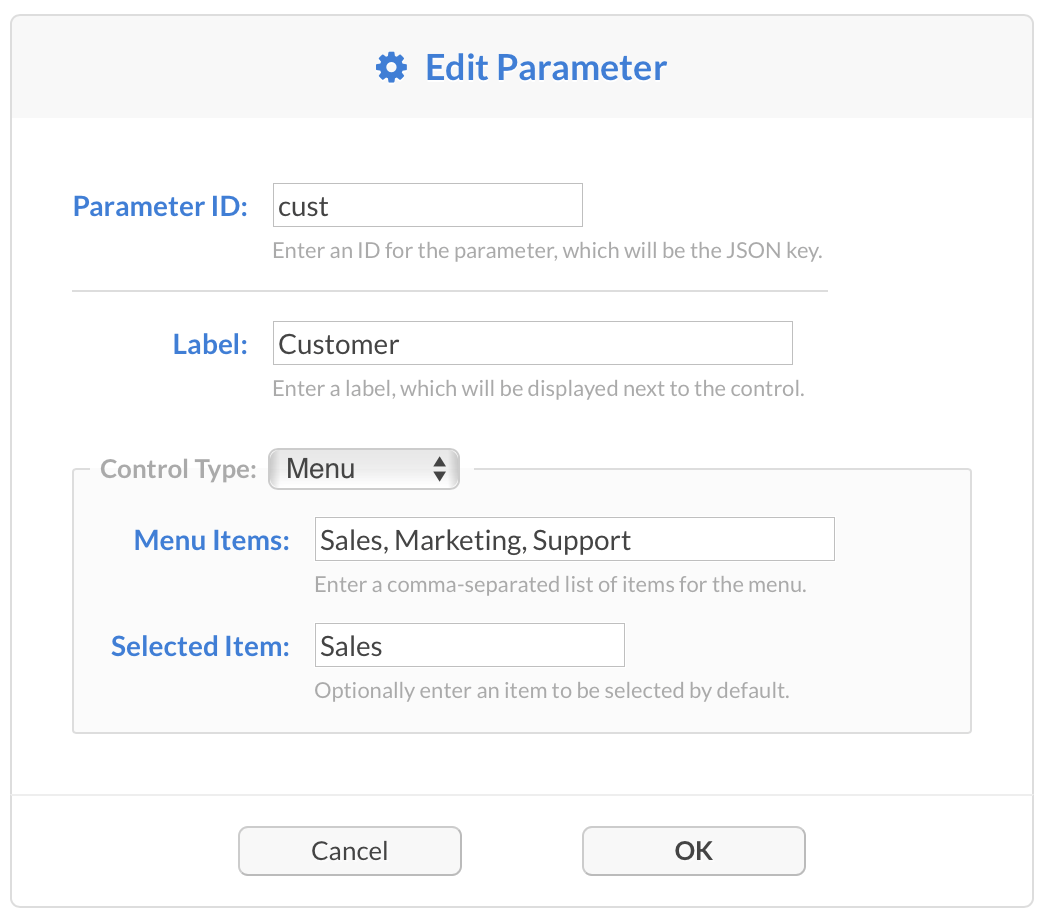

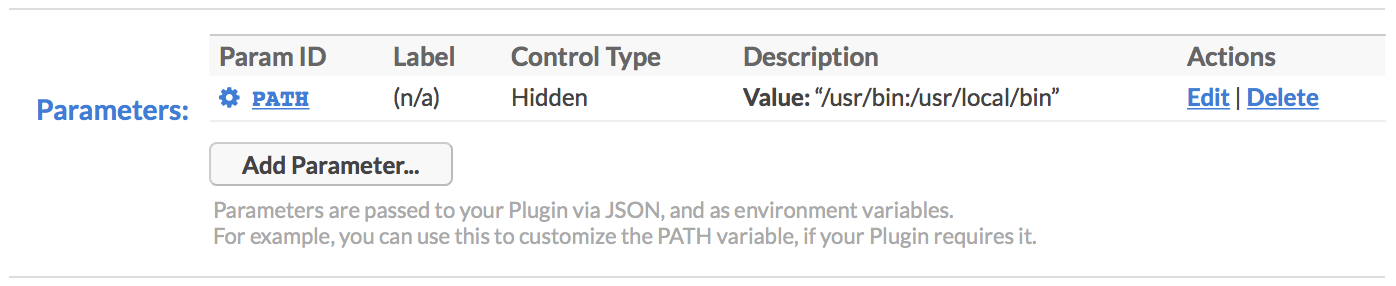

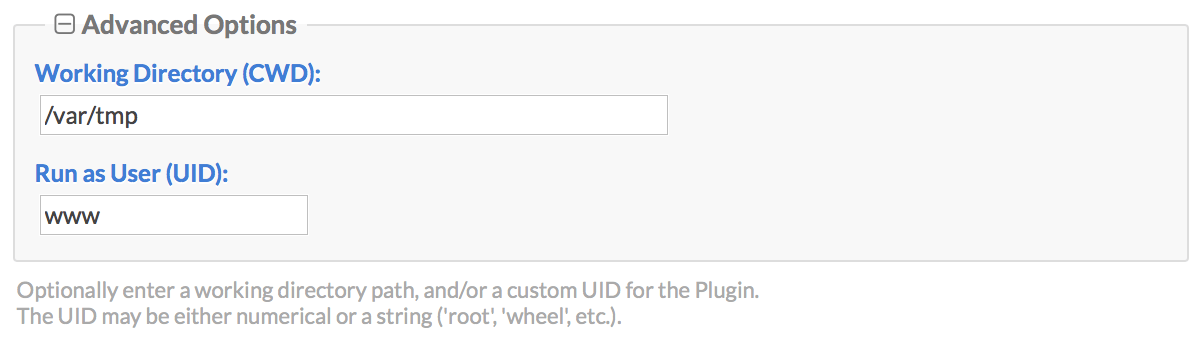

If the Plugin defines any custom parameters, they are editable per event. This feature allows the Plugin to define a set of UI elements (text fields, checkboxes, drop-down menus, etc.) which the event editor can provide values for. Then, when jobs are started, the Plugin is provided a JSON document containing all the custom keys and values set by the UI for the event.

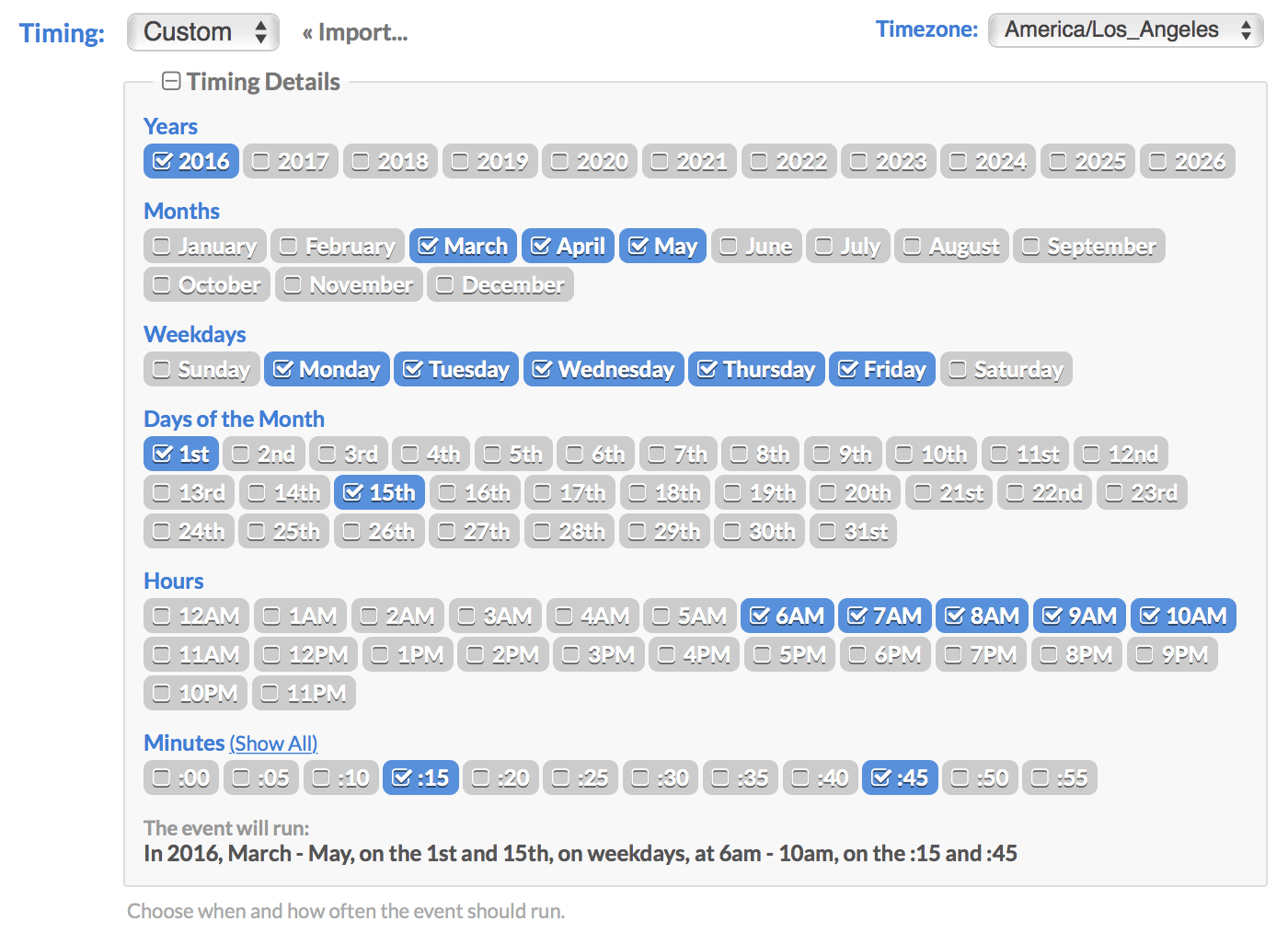

Events are scheduled to run at various dates and times using a visual multi-selector widget, as shown above. This allows you to multi-select any combination of years, months, days, weekdays, hours and/or minutes, for an event to run on. It will also repeat on a recurring basis, each time the server clock matches your selections. This is very similar to the Unix Cron format, but with a more visual user interface.

If you leave all the boxes unchecked in a particular time scale, it means the same as "all" (similar to the Cron asterisk * operator). So if you leave everything blank in all the categories and select only the ":00" minute, it means: every year, every month, every day, every weekday, and every hour on the ":00" minute. Or in other words, hourly.

If you click the "Import..." link, you can import date/time settings from a Crontab, i.e. the famous * * * * * syntax. This saves you the hassle of having to translate your existing Crontabs over to the Cronicle UI by hand.

By default, events are scheduled in your current local timezone, but you can customize this using the menu at the top-right. The menu is pre-populated with all the IANA standard timezones. Also, the timezone selection is saved with each event, so Cronicle always knows exactly when the they should all run, regardless of the server timezone.

The Cronicle scheduling system is versatile, but it can't do everything. For example, you cannot schedule an event to run at two different times on two different days, such as 2:30 PM on Monday and 8:30 AM on Tuesday. For that, you'd have to create separate events.

The Event Concurrency selector allows you to specify how many simultaneous jobs are allowed to run for the event. For example, multiple jobs may need to run if your event is scheduled to run hourly, but it takes longer than a hour to complete a job. Or, a job may be already running and someone clicks the "Run Now" link on the Schedule tab. This selector specifies the maximum allowed jobs to run concurrently for each event.

If an event cannot start a job due to the concurrency limit, an error is logged (see the Activity Log Tab below). What happens next depends on the Event Options. If the event has Run All (Catch-Up) mode enabled, then the scheduler will keep trying to run every scheduled job indefinitely. Otherwise, it will simply wait until the next scheduled run.

You can optionally specify an event timeout, which is a maximum run time for event jobs. If a job takes longer than the specified timeout period, it is aborted, and logged as a failed job. To disable the timeout and allow jobs to run indefinitely, set this field to 0.

If a job throws an internal error (meaning, it returns a non-zero code in the JSON response, or a shell command exits with a non-zero exit code), you can have Cronicle automatically retry it up to 32 times. Aborting a job (either manually or by a timeout) does not trigger a retry.

If the retries is set to a non-zero amount, a "Retry Delay" text field will appear. This allows you to have Cronicle wait a certain amount of time between retries, if you want. The idea is to reduce bashing on services that may be overloaded.

Note that the Event Timeout applies to the total run time of the job, which includes all retries. For example, if you set the timeout to 10 minutes, and the job takes 9 minutes and fails, any retries will then only have 1 minute to complete the job. So please set the timeout accordingly.

This section allows you to select options on how jobs are handled for the event:

When Run All (Catch-Up) Mode mode is enabled on an event, the scheduler will do its best to ensure that every scheduled job will run, even if they have to run late. This is useful for time-sensitive events such as generating reports. So for example, if you have an event scheduled to run hourly, but something prevents it from starting or completing (see below), the scheduler will keep trying indefinitely until each separate hourly job runs. If the event cannot run for multiple hours, the jobs will simply queue up, and the scheduler will run them all in order, as quickly as its rules allow.

If any of the following situations occur, and the event has Run All (Catch-Up) mode enabled, the scheduler will queue up and re-run all missed jobs:

- Job could not run due to concurrency limits.

- Job could not run due to the target server being unavailable.

- Job could not run due to the event category or Plugin being disabled.

- Server running the job was shut down.

- Server running the job crashed.

- Job was aborted due to exceeding a timeout limit.

- Job was aborted due to exceeding a resource limit (RAM or CPU).

The only time a Catch-Up job is not re-run is when one of the following actions occur:

- Job is manually aborted via the Web UI or API.

- Job fails due to error thrown from inside the Plugin (user code generated error).

You can see all queued jobs on the Home Tab. They will be listed in the Upcoming Events table, and have their "Countdown" column set to "Now". To jump over the queue and reset an event that has fallen behind, use the Event Time Machine feature.

When Run All (Catch-Up) mode is disabled, and a job cannot run or fails due to any of the reasons listed above, the scheduler simply logs an error, and resumes normal operations. The event will not run until the next scheduled time, if any. This is more suitable for events that are not time-sensitive, such as log rotation.

When Uninterruptible (Detached) mode is enabled on an event, jobs are spawned as standalone background processes, which are not interrupted for things like the Cronicle daemon restarting. This is designed mainly for critical operations that cannot be stopped in the middle for whatever reason.

Please use this mode with caution, and only when truly needed, as there are downsides. First of all, since the process runs detached and standalone, there are no real-time updates. Meaning, the progress bar and time remaining displays are delayed by up to a minute. Also, when your job completes, there is a delay of up to a minute before Cronicle realizes and marks the job as complete.

It is much better to design your jobs to be interrupted, if at all possible. Note that Cronicle will re-run interrupted jobs if they have Run All Mode set. So Detached Mode should only be needed in very special circumstances.

By default, when jobs cannot run due to concurrency settings, or other issues like an unavailable target server, an error is generated. That is, unless you enable the event queue. With queuing enabled, jobs that can't run immediately are queued up, and executed on a first come, first serve basis, as quickly as conditions allow.

When the queue is enabled on an event, a new "Queue Limit" section will appear in the form, allowing you to set the maximum queue length per event. If this limit is reached, no additional jobs can be queued, and an error will be generated.

You can track the progress of your event queues on the Home Tab. Queued events and counts appear in a table between the Active Jobs and Upcoming Events sections. From there you can also "flush" an event queue (i.e. delete all queued jobs), in case one grows out of control.

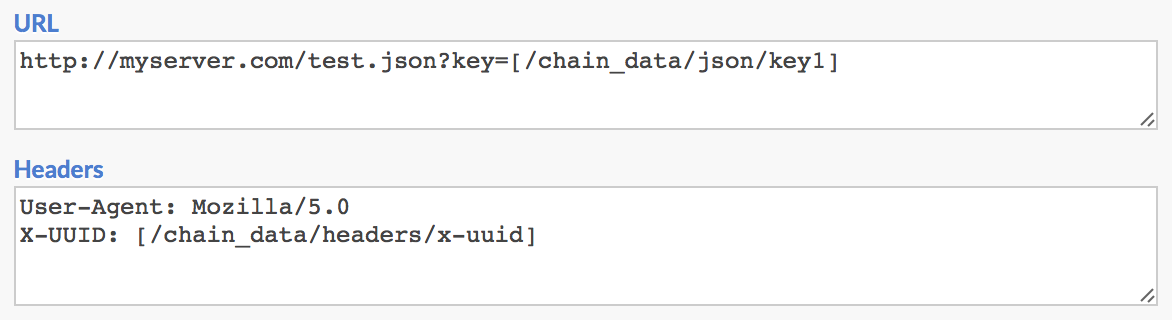

Chain Reaction mode allows you to select an event which will be launched automatically each time the current event completes a job. You are essentially "chaining" two events together, so one always runs at the completion of the other. This chain can be any number of events long, and the events can all run on different servers.

You can optionally select different events to run if the current job succeeds or fails. For example, you may have a special error handling / notification event, which needs to run upon specific event failures.

You can have more control over this process by using the JSON API in your Plugins. See Chain Reaction Control below for details.

When editing an existing event that has Run All (Catch-Up) mode enabled, the Event Time Machine will appear. This is a way to reset the internal "clock" for an event, allowing you to re-run past jobs, or skip over a queue of stuck jobs.

For each event in the schedule, Cronicle keeps an internal clock called a "cursor". If you imagine time running along a straight line, the event cursors are points along that line. When the primary server ticks a new minute, it shifts all the event cursors forward up to the current minute, running any scheduled events along the way.

So for example, if you needed to re-run a daily 4 AM report event, you can just edit the cursor clock and set it back to 3:59 AM. The cursor will catch up to the current time as quickly as it can, stopping only to run any scheduled events along the way. You can also use this feature to "jump" over a queue, if jobs have stacked up for an event. Just set the cursor clock to the current time, and the scheduler will resume jobs from that point onward.

The event clock for the Time Machine is displayed and interpreted in the event's currently selected timezone.

Cronicle can optionally send out an e-mail notification to a custom list of recipients for each job's completion (for multiple, separate addresses by commas). You can also specify different e-mail addresses for when job succeeds, vs. when it fails. The e-mails will contain a plethora of information about the event, the job, and the error if applicable. Example email contents:

To: [email protected]

From: [email protected]

Subject: Cronicle Job Failed: Rebuild Indexes

Date/Time: 2015/10/24 19:47:50 (GMT-7)

Event Title: Rebuild Indexes

Category: Database

Server Target: db01.prod

Plugin: DB Indexer

Job ID: jig5wyx9801

Hostname: db01.prod

PID: 4796

Elapsed Time: 1 minute, 31 seconds

Performance Metrics: scale=1&total=30.333&db_query=1.699&db_connect=1.941&log_read=2.931&gzip_data=3.773

Memory Usage: 274.5 MB Avg, 275.1 MB Peak

CPU Usage: 31.85% Avg, 36.7% Peak

Error Code: 999

Error Description:

Failed to write to file: /backup/db/schema.sql: Out of disk space

Job Details:

http://local.syncronic.com:3012/#JobDetails?id=jig5wyx9801

Job Debug Log (18.2 K):

http://local.syncronic.com:3012/api/app/get_job_log?id=jig5wyx9801

Edit Event:

http://local.syncronic.com:3012/#Schedule?sub=edit_event&id=3c182051

Event Notes:

This event handles reindexing our primary databases nightly.

Contact Daniel in Ops for details.

Regards,

The Cronicle Team

You have control over much of the content of these e-mails. The Error Code and Description are entirely generated by your own Plugins, and can be as custom and verbose as you want. The Performance Metrics are also generated by your Plugins (if applicable), and the Event Notes are taken straight from the UI for the event (see Event Notes below). Finally, the entire e-mail template can be customized to including additional information or to fit your company's brand. HTML formatted e-mails are supported as well.

See the Email Configuration section for more details on customization.

Another optional notification method for events is a "web hook". This means Cronicle will send an HTTP POST to a custom URL that you specify, both at the start and the end of each job, and include full details in JSON format. Your own API endpoint will receive the JSON POST from Cronicle, and then your code can fire off its own custom notification.

You can determine if the request represents a start or the end of a job by looking at the action property. It will be set to job_start or job_complete respectively. Here is a list of all the JSON properties that will be included in the web hook, and what they mean:

| JSON Property | Description |

|---|---|

action |

Specifies whether the web hook signifies the start (job_start) or end (job_complete) of a job. |

base_app_url |

The base_app_url configuration property. |

category |

The Category ID to which the event is assigned. |

category_title |

The title of the Category to which the event is assigned. |

code |

The response code as specified by your Plugin (only applicable for job_complete hooks). |

cpu |

An object representing the min, max, average and latest CPU usage for the job (only applicable for job_complete hooks). |

description |

A custom text string populated by your Plugin, typically contains the error message on failure. |

edit_event_url |

A fully-qualified URL to edit the event in the Cronicle UI. |

elapsed |

The total elapsed time for the job, in seconds (only applicable for job_complete hooks). |

event |

The ID of the event which spawned the job. |

event_title |

The title of the event which spawned the job. |

hostname |

The hostname of the server which ran (or is about to run) the event. |

id |

An auto-assigned unique ID for the job, which can be used in API calls to query for status. |

job_details_url |

A fully-qualified URL to view the job details in the Cronicle UI. |

log_file_size |

The size of the job's log file in bytes (only applicable for job_complete hooks). |

mem |

An object representing the min, max, average and latest memory usage for the job (only applicable for job_complete hooks). |

nice_target |

Will be set to the title of the target server group, or exact server hostname, depending on how the event is configured. |

params |

An object containing all the UI selections for the Plugin custom parameters, from the event. |

perf |

An object or string containing performance metrics, as reported by your Plugin (only applicable for job_complete hooks). |

pid |

The Process ID (PID) of the main job process which ran your Plugin code (only applicable for job_complete hooks). |

plugin |

The ID of the Plugin assigned to the event. |

plugin_title |

The title of the Plugin assigned to the event. |

source |

A string describing who or what started the job (user or API). Will be blank if launched normally by the scheduler. |

text |

A simple text string describing the action that took place. Useful for Slack Webhook Integrations. |

time_end |

The Epoch timestamp of when the job ended (only applicable for job_complete hooks). |

time_start |

The Epoch timestamp of when the job started. |

Here is an example web hook JSON record (job_complete version shown):

{

"action": "job_complete",

"base_app_url": "http://localhost:3012",

"category": "general",

"category_title": "General",

"code": 0,

"cpu": {

"min": 23.4,

"max": 23.4,

"total": 23.4,

"count": 1,

"current": 23.4

},

"description": "Success!",

"edit_event_url": "http://localhost:3012/#Schedule?sub=edit_event&id=3c182051",

"elapsed": 90.414,

"event": "3c182051",

"event_title": "Test Event 2",

"hostname": "joeretina.local",

"id": "jihuyalli01",

"job_details_url": "http://localhost:3012/#JobDetails?id=jihuyalli01",

"log_file_size": 25119,

"mem": {

"min": 190459904,

"max": 190459904,

"total": 190459904,

"count": 1,

"current": 190459904

},

"nice_target": "joeretina.local",

"params": {

"db_host": "idb01.mycompany.com",

"verbose": 1,

"cust": "Marketing"

},

"perf": "scale=1&total=90.103&db_query=0.237&db_connect=6.888&log_read=9.781&gzip_data=12.305&http_post=14.867",

"pid": 72589,

"plugin": "test",

"plugin_title": "Test Plugin",

"source": "Manual (admin)",

"text": "Job completed successfully on joeretina.local: Test Event 2 http://localhost:3012/#JobDetails?id=jihuyalli01",

"time_end": 1449431930.628,

"time_start": 1449431840.214

}In addition to job_start and job_complete, there is one other special hook action that may be sent, and that is job_launch_failure. This happens if a scheduled event completely fails to start a job, due to an unrecoverable error (such as an unavailable target server or group). In this case the code property will be non-zero, and the description property will contain a summary of the error.

Only a small subset of the properties shown above will be included with a job_launch_failure, as a job object was never successfully created, so there will be no hostname, pid, elapsed, log_file_size, etc.

To include custom HTTP request headers with your web hook, append them onto the end of the URL using this format: [header: My-Header: My-Value]. Make sure to include a space before the opening bracket. Example URL:

https://myserver.com/api/chat.postMessage [header: My-Header: My-Value]

Cronicle can automatically limit the server resource consumption of your jobs, by monitoring their CPU, memory and/or log file size, and aborting them if your limits are exceeded. You can also specify "sustain" times for CPU and memory, so no action is taken until the limits are exceeded for a certain amount of time.

CPU and RAM usage are measured every 10 seconds, by looking at the process spawned for the job, and any child processes that may have also been spawned by your code. So if you fork your own child subprocess, or shell out to a command-line utility, all the memory is totaled up, and compared against the resource limits for the job.

Event notes are for your own internal use. They are displayed to users when editing an event, and in all e-mails regarding successful or failed jobs. For example, you could use this to describe the event to members of your team who may not be familiar, and possibly provide a link to other documentation. There is no character limit, so knock yourself out.

To run an event immediately, click the Run Now button. This will run the current event regardless of its timing settings, and whether the event is enabled or disabled in the schedule. This is simply an on-demand job which is created and executed right away.

If you need to customize the internal clock for the job, hold Shift which clicking the Run Now button. This will bring up a dialog allowing you to set the date and time which the Plugin will see as the "current time", for your on-demand job only. It will not affect any other jobs or the event itself. This is useful for re-running past jobs with a Plugin that honors the now timestamp in the job data.

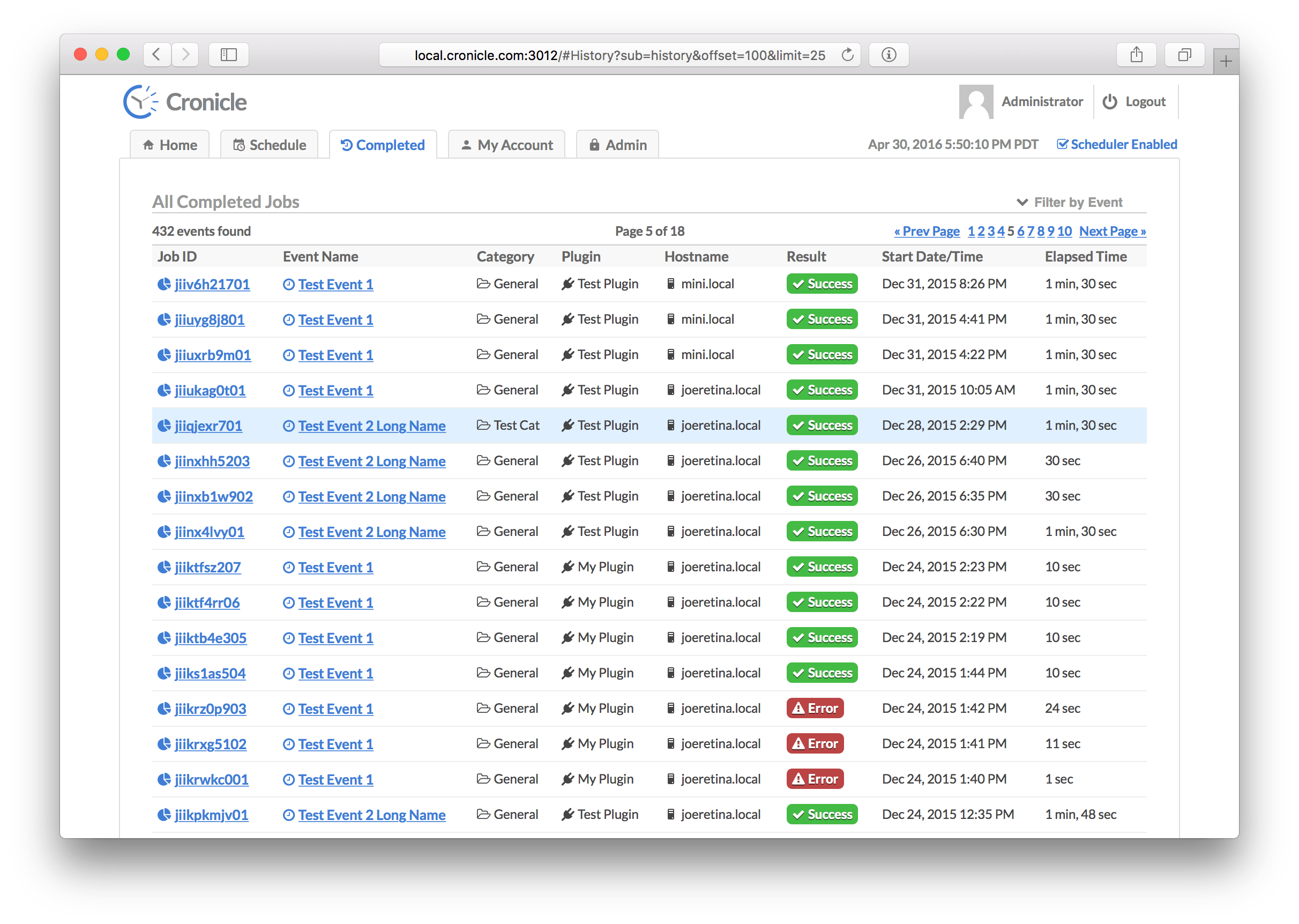

This tab shows you all recently completed jobs, for all events, and whether they succeeded or failed. Cronicle will keep up to list_row_max job completions in storage (default is 10,000). The jobs are sorted by completion date/time, with the latest at the top. Use the pagination controls on the top right to jump further back in time. The table columns are:

| Column | Description |

|---|---|

| Job ID | A unique ID assigned to the job. Click this to see details (see Job Details Tab below). |

| Event Name | The name of the scheduled event for the job. Click this to see the event history (see Event History Tab below). |

| Category | The category to which the event is assigned. |

| Plugin | The Plugin which was used to run the job. |

| Hostname | The hostname of the server which ran the job. |

| Result | This shows whether the job completed successfully, or returned an error. |

| Start Date/Time | The date/time when the job first started. |

| Elapsed Time | The total elapsed time of the job (including any retries). |

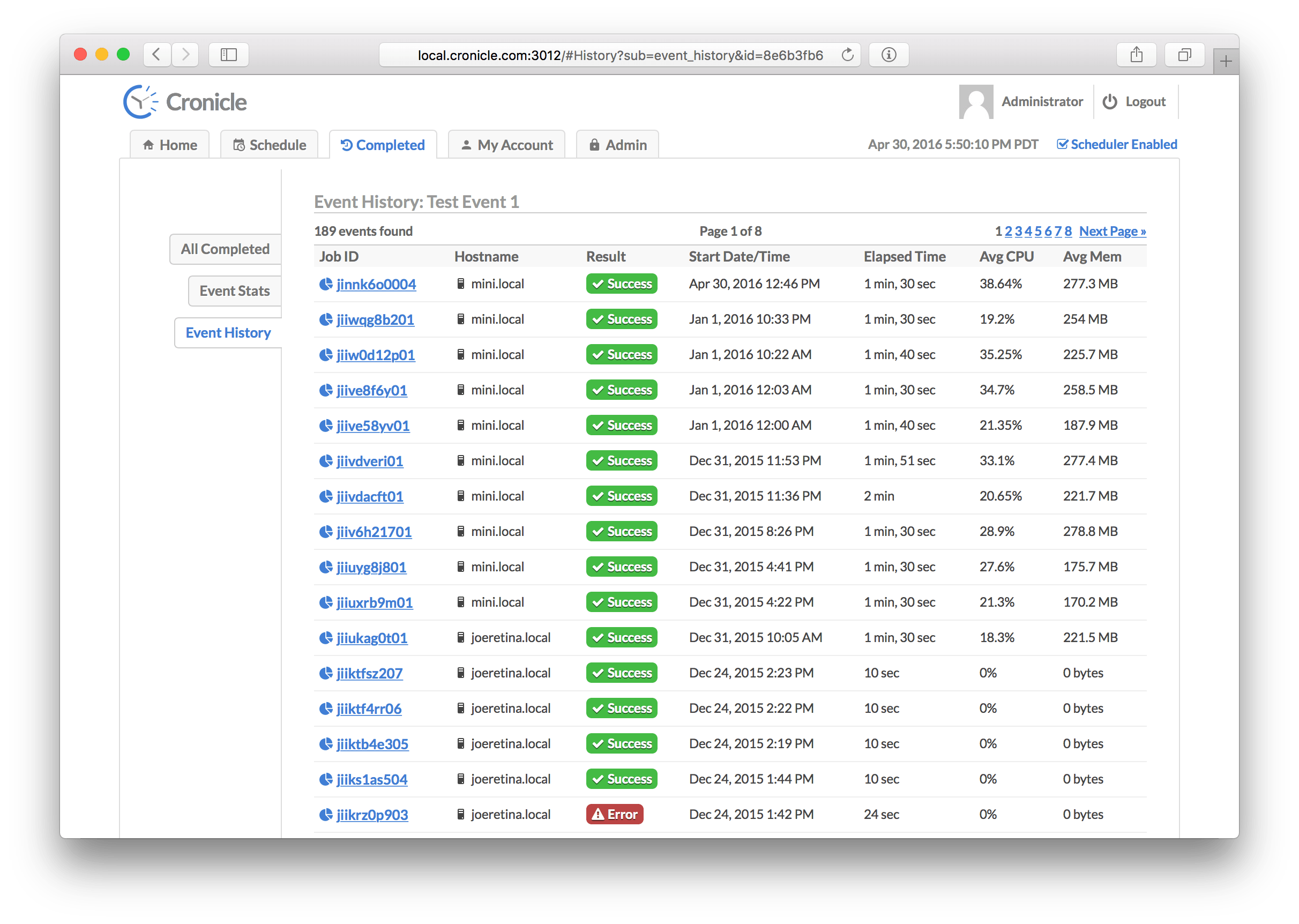

This tab shows you all recently completed jobs for one specific event. Cronicle will keep up to list_row_max job completions in storage (default is 10,000). The jobs are sorted by completion date/time, with the latest at the top. Use the pagination controls on the top right to jump further back in time. The table columns are:

| Column | Description |

|---|---|

| Job ID | A unique ID assigned to the job. Click this to see details (see Job Details Tab below). |

| Hostname | The hostname of the server which ran the job. |

| Result | This shows whether the job completed successfully, or returned an error. |

| Start Date/Time | The date/time when the job first started. |

| Elapsed Time | The total elapsed time of the job (including any retries). |

| Avg CPU | The average CPU percentage used by the job process (including any subprocesses), where 100% equals one CPU core. |

| Avg Mem | The average memory used by the job process (including any subprocesses). |

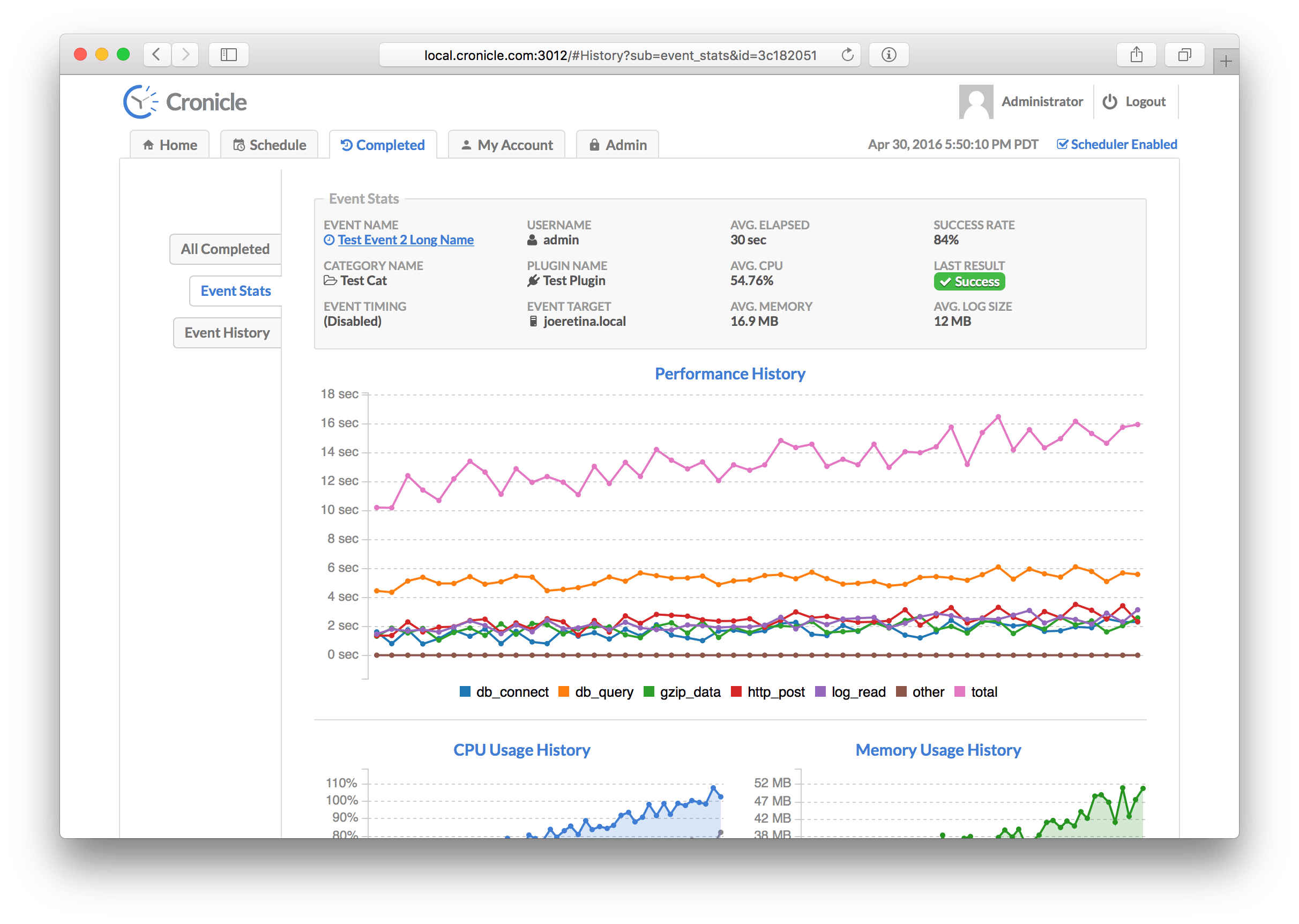

This tab contains statistics about a specific event, including basic information and performance graphs. The data is calculated from the last 50 completed jobs. Here is a list of all the stats that are displayed at the top of the screen:

| Statistic | Description |

|---|---|

| Event Name | The name of the event being displayed. |

| Category Name | The category to which the event is assigned. |

| Event Timing | The timing settings for the event (daily, hourly, etc.). |

| Username | The username of the user who first created the event. |

| Plugin Name | The Plugin selected to run jobs for the event. |

| Event Target | The server group or individual server selected to run jobs for the event. |

| Avg. Elapsed | The average elapsed time of jobs for the event. |

| Avg. CPU | The average CPU used by jobs for the event. |

| Avg. Memory | The average RAM used by jobs for the event. |

| Success Rate | The success percentage rate of jobs for the event. |

| Last Result | Shows the result of the latest job completion (success or fail). |

| Avg. Log Size | The average log file size of jobs for the event. |

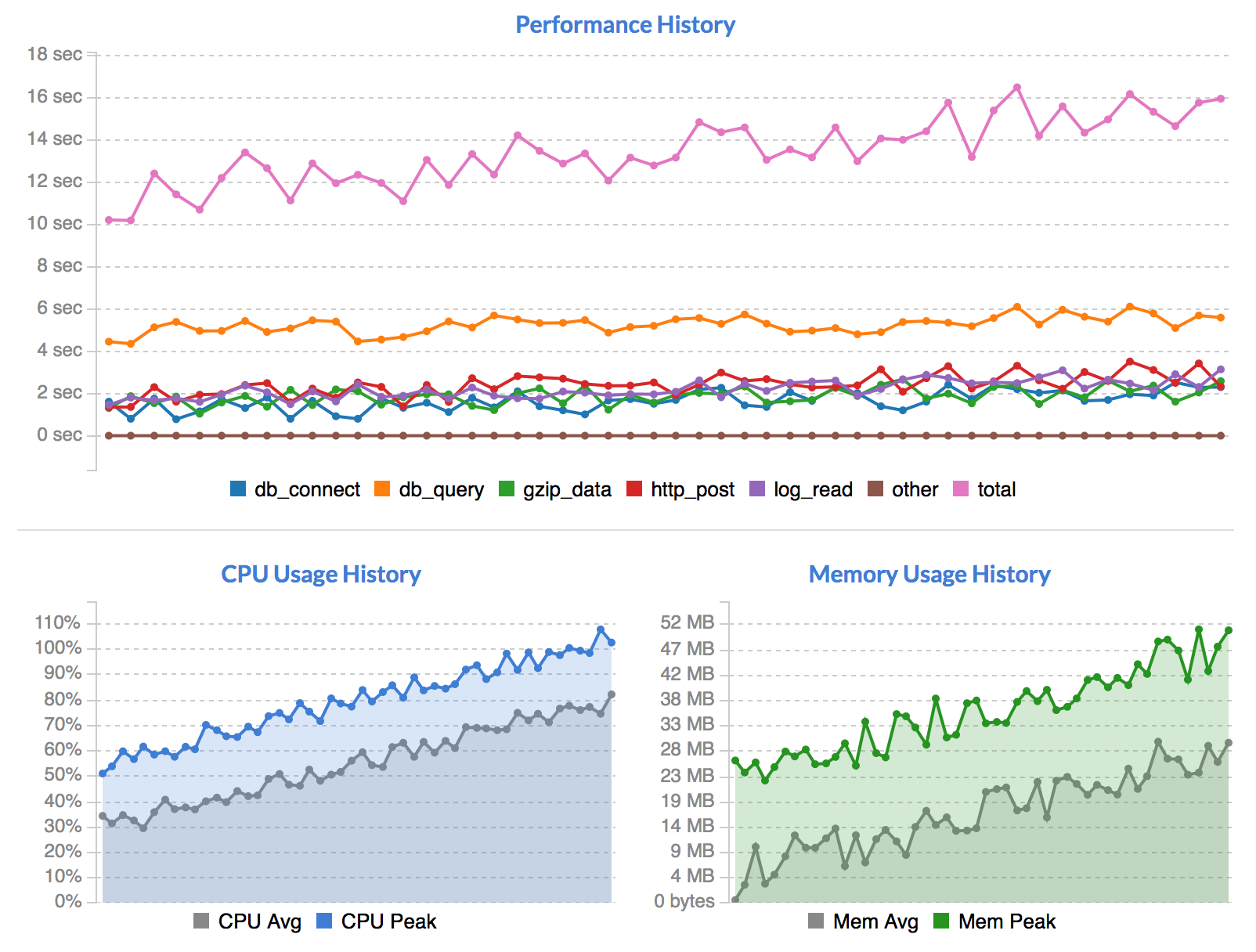

Below the stats are a number of graphs:

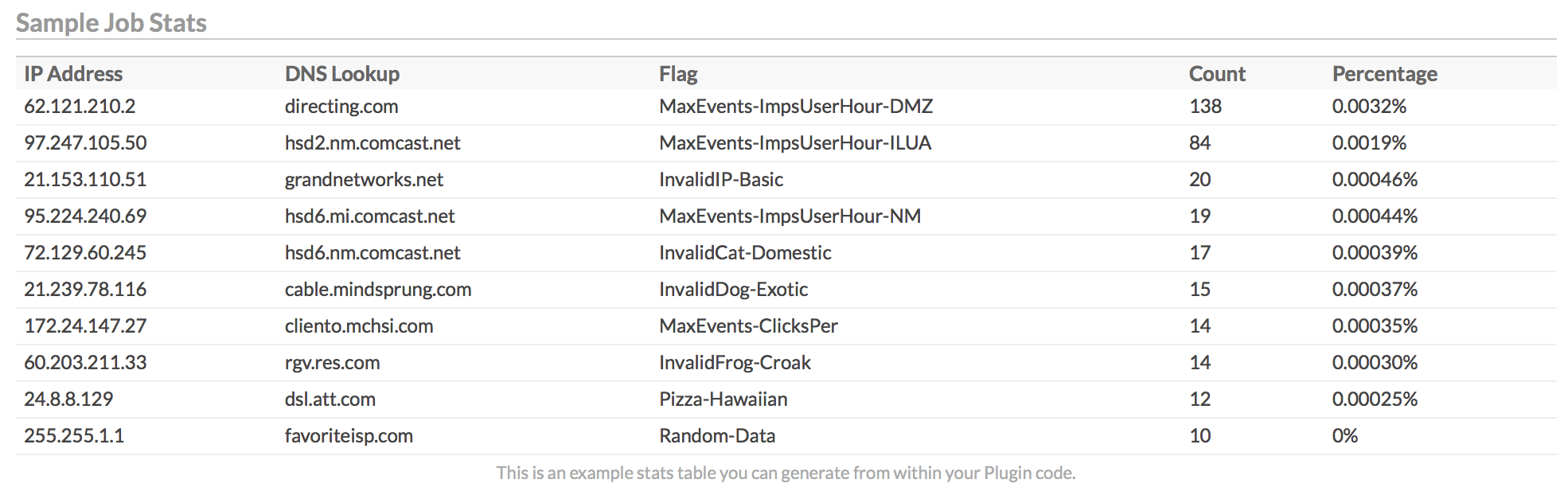

The first graph shows performance of your Plugin's metrics over time. The different categories shown are entirely driven by your custom code. You can choose to provide performance metrics or not, and add as many custom categories as you like. For details, see the Writing Plugins and Performance Metrics sections below.

Below the performance history graph are the CPU Usage History and Memory Usage History graphs. These display your event's server resource usage over time. Each dot on the graph is a particular job run, and the history goes back for 50 runs, if available.

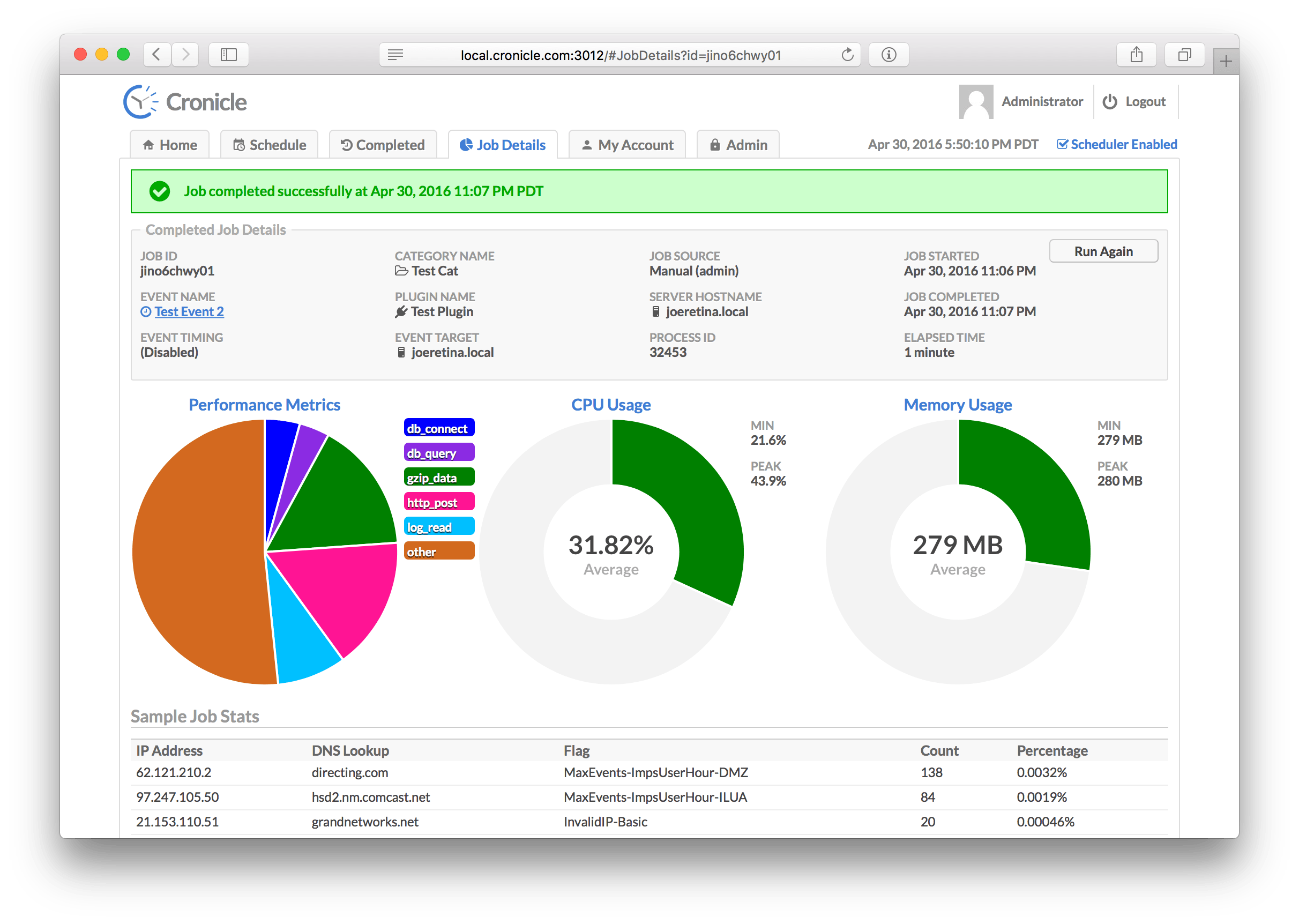

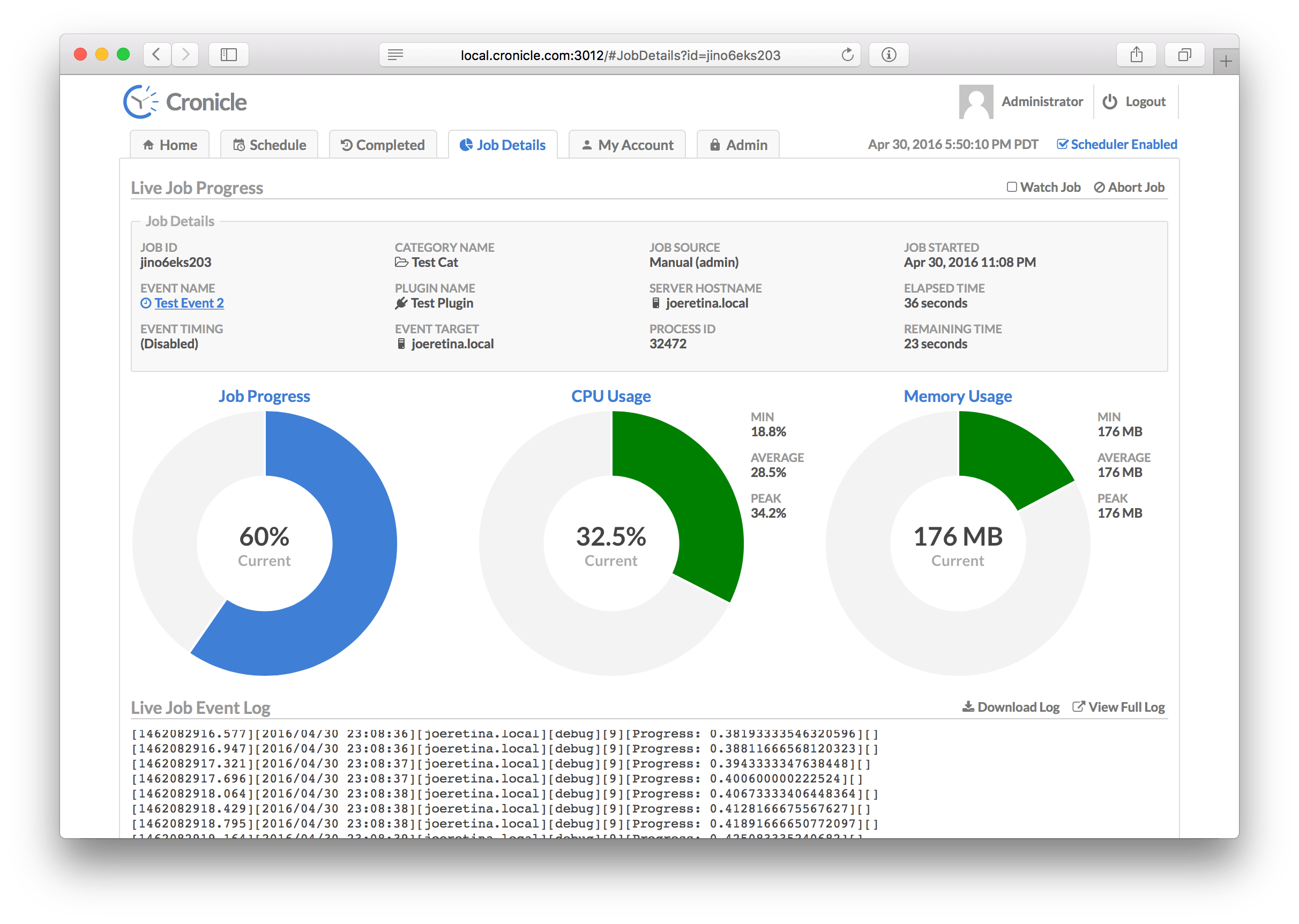

The Job Details Tab is used to view jobs currently in progress, and to see details about completed jobs. The display is slightly different for each case. For jobs in progress, you'll see some statistics about the job which are updated live (see below), and three large donut charts: The current job progress (if provided by the Plugin), and the current CPU and memory usage of the job. Below the donuts you'll find the live job log file, updated in real-time. The stats consist of:

| Statistic | Description |

|---|---|

| Job ID | A unique ID assigned to the job. |

| Event Name | The name of the event being displayed. |

| Event Timing | The timing settings for the event (daily, hourly, etc.). |

| Category Name | The category to which the event is assigned. |

| Plugin Name | The Plugin selected to run jobs for the event. |

| Event Target | The server group or individual server selected to run jobs for the event. |

| Job Source | The source of the job (who started it, the scheduler or manually by hand). |

| Server Hostname | The hostname of the server which is running the job. |

| Process ID | The ID of the process currently running the job. |

| Job Started | The date/time of when the job first started. |

| Elapsed Time | The current elapsed time of the job. |

| Remaining Time | The estimated remaining time (if available). |

The CPU donut chart visually indicates how close your job is to using a full CPU core. If it uses more than that (i.e. multiple threads or sub-processes), the chart simply shows "full" (solid color). The Memory donut visually indicates your job's memory vs. the "maximum" configured resource limit, or 1 GB if resource limits are not in effect for the job. The colors are green if the donut is under 50% filled, yellow if between 50% and 75%, and red if over 75%.

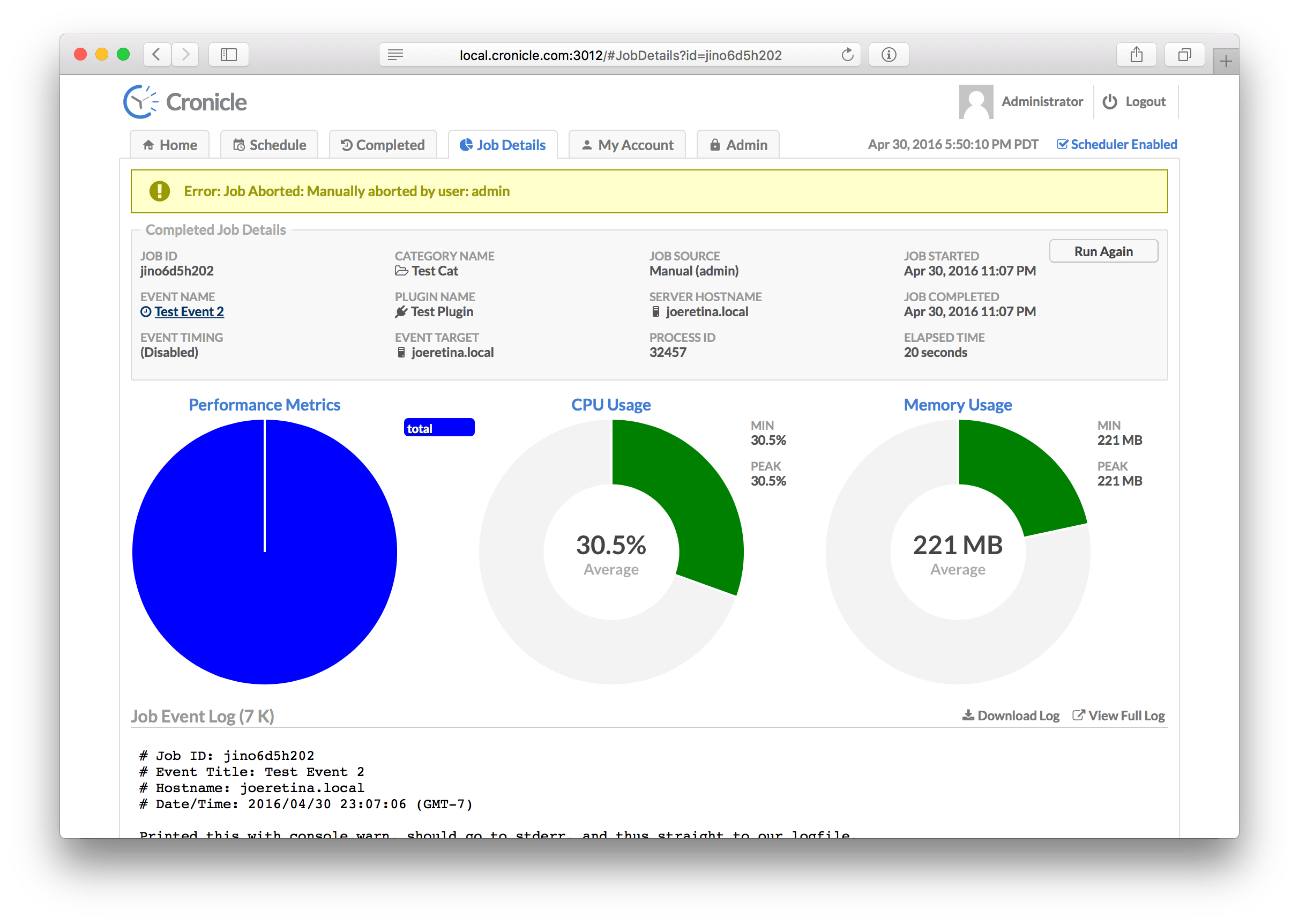

When the job completes, or when viewing details about a previously completed job, the display looks slightly different:

The only difference in the statistics table is that the right-hand column contains the Job Completed date/time instead of the remaining time. Below that, the left-hand donut graph now shows performance metrics from your Plugin (if provided). You can choose to provide performance metrics or not, and add as many custom categories as you like. For details, see the Writing Plugins and Performance Metrics sections below.

The CPU and Memory donut charts now show the average values over the course of your job run, rather than the "current" values while the job is still running. The same visual maximums and color rules apply (see above).

Here is how the Job Details tab looks when a job fails:

The error message is displayed in a banner along the top of the screen. The banner will be yellow if the job was aborted, or red if an error was returned from your Plugin.

In this case the job was aborted, so the Plugin had no chance to report performance metrics, hence the left-hand pie chart is blank.

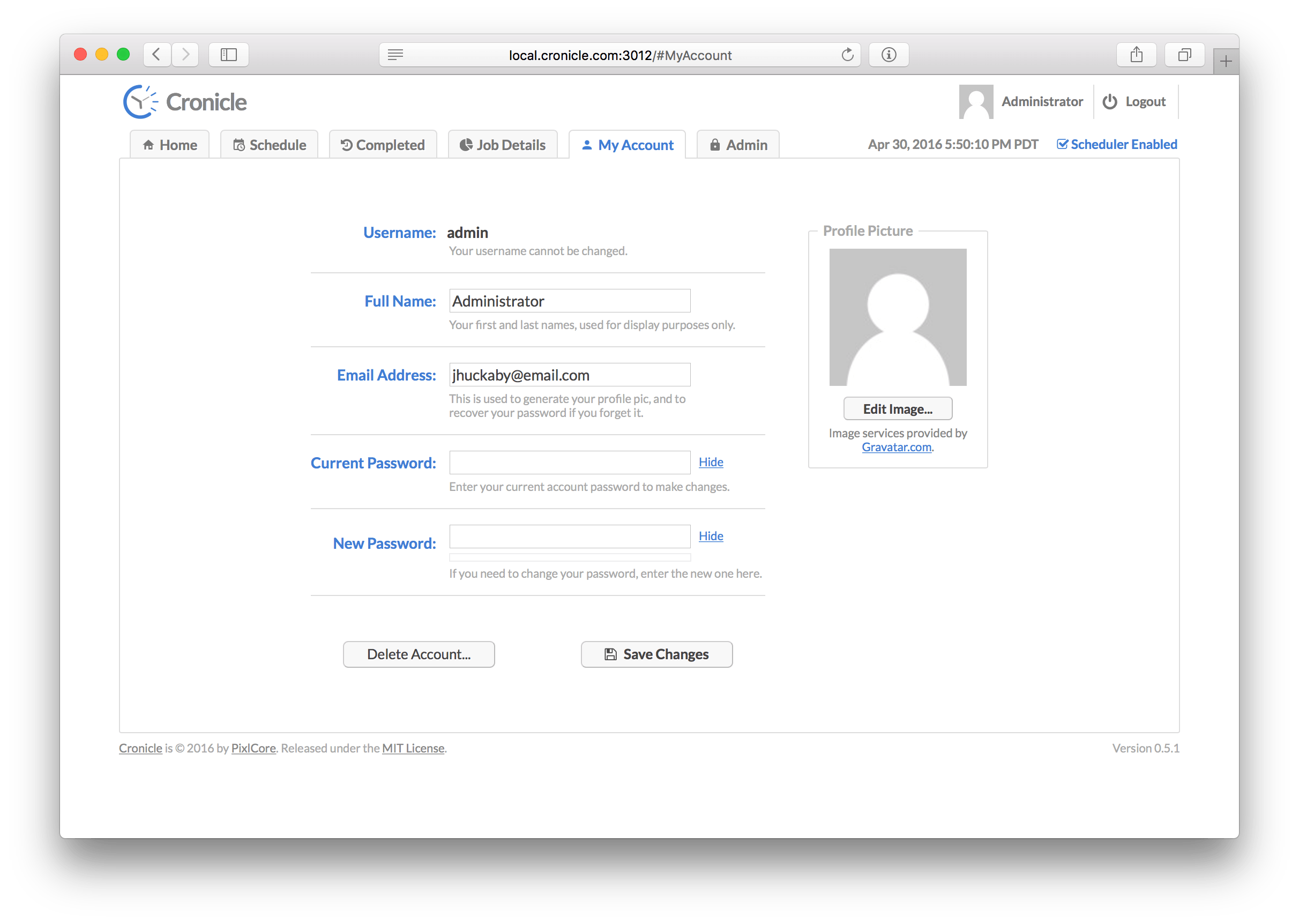

On this tab you can edit your own account profile, i.e. change your name, e-mail address, and/or password. You also have the option of completely deleting your account via the "Delete Account" button. For security purposes, in order to save any changes you must enter your existing account password.

Your user avatar image is automatically pulled from the free Gravatar.com service, using your e-mail address. To customize your image, please login or create a Gravatar account using the same e-mail address as the one in your Cronicle account.

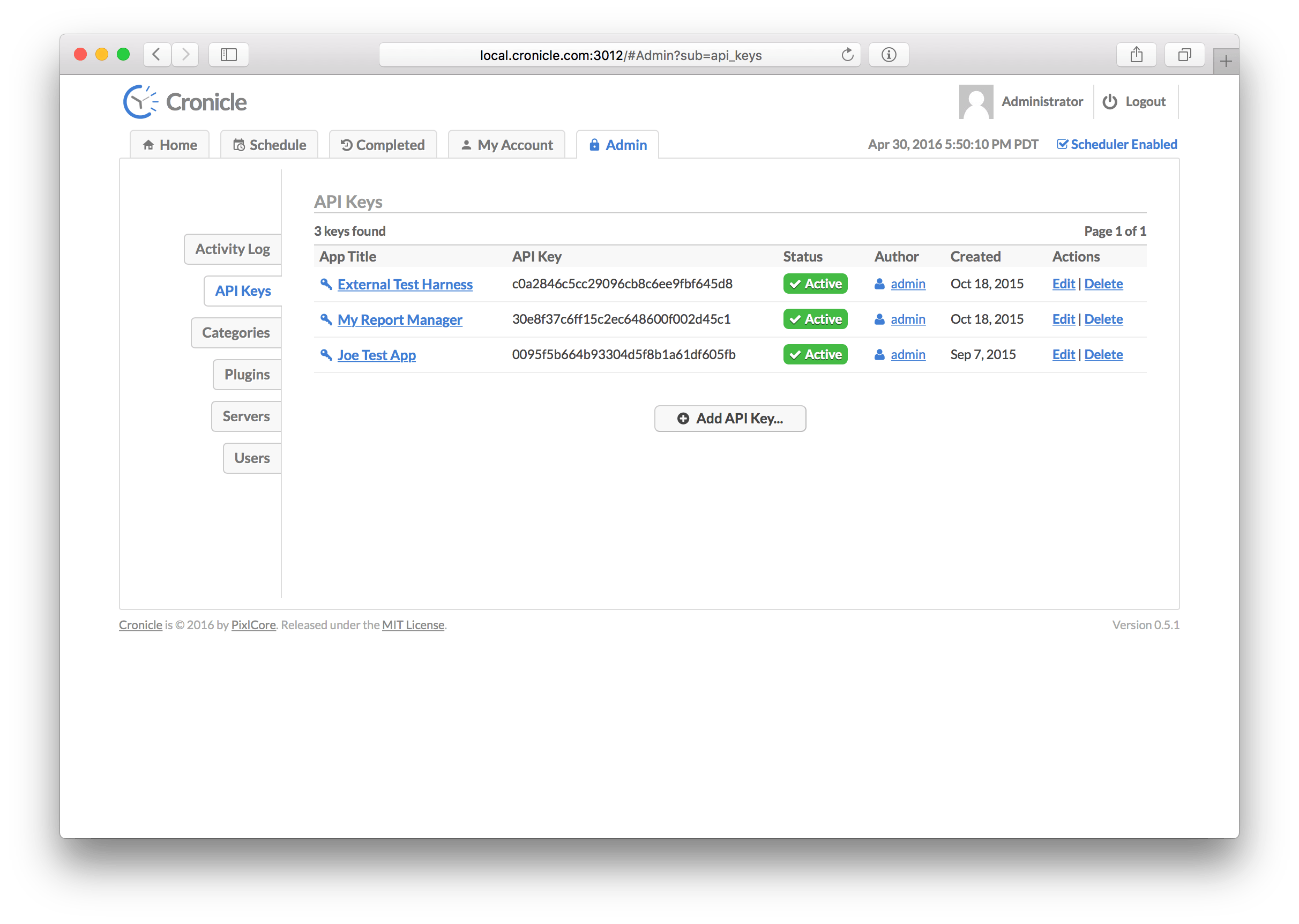

This tab is only visible and accessible to administrator level users. It allows you to view the global activity log, manage API keys, categories, Plugins, servers and users. To access all the various functions, use the tabs along the left side of the page.

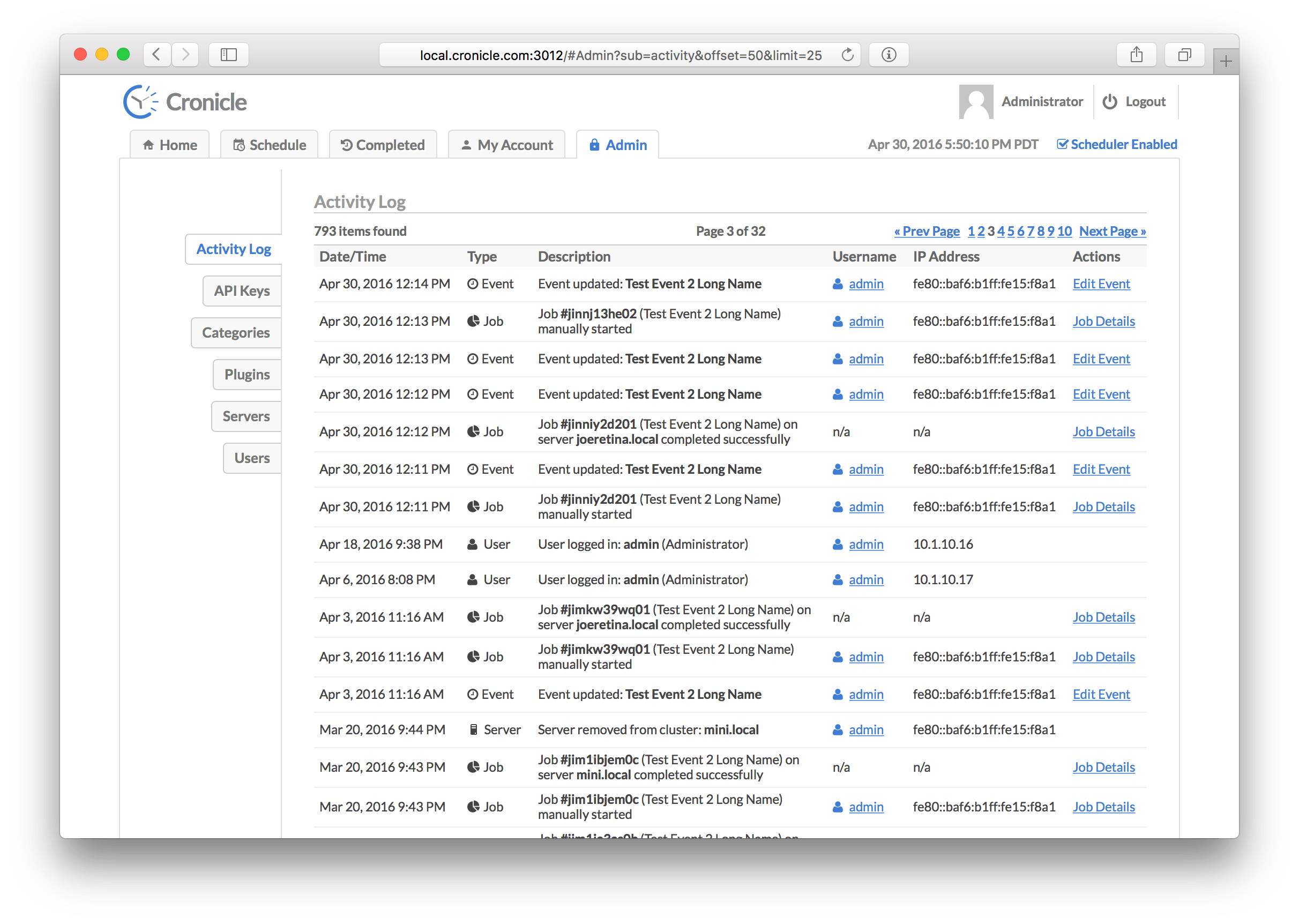

All activity in Cronicle is logged, and viewable on the Activity Log tab. The log is presented as a paginated table, sorted by descending date/time (newest activity at the top). Pagination controls are located in the top-right corner. The table columns are as follows:

| Column | Description |

|---|---|

| Date/Time | The date and time of the activity (adjusted for your local timezone). |

| Type | The type of activity (error, event, category, server, etc.). |

| Description | A description of the activity (varies based on the type). |

| Username | The username or API Key associated with the activity, if applicable. |

| IP Address | The IP address associated with the activity, if applicable. |

| Actions | A set of actions to take on the activity (usually links to more info). |

There are several different types of activity that can appear in the activity log:

| Activity Type | Description |

|---|---|

| Error | Error messages, such as failing to send e-mail or server clocks out of sync. |

| Warning | Warning messages, such as a failure to launch a scheduled event. |

| API Key | API Key related activity, such as a user creating, modifying or deleting keys. |

| Category | Category related activity, such as a user creating, modifying or deleting categories. |

| Event | Event related activity, such as a user creating, modifying or deleting events. |