| Library | Speed | Files | LOC | Dependencies | Developers | WebSockets | Multi-Threaded Web Scraper Built-in |
|---|---|---|---|---|---|---|---|
| PyWGET | 152.39 | 1 | 338 | Wget | >17 | ❎ | ❎ |
| Requests | 15.58 | >20 | 2558 | >=7 | >527 | ❎ | ❎ |
| Requests (cached object) | 5.50 | >20 | 2558 | >=7 | >527 | ❎ | ❎ |
| Urllib | 4.00 | ??? | 1200 | 0 (std lib) | ??? | ❎ | ❎ |
| Urllib3 | 3.55 | >40 | 5242 | 0 (No SSL), >=5 (SSL) | >188 | ❎ | ❎ |
| PyCurl | 0.75 | >15 | 5932 | Curl, LibCurl | >50 | ❎ | ❎ |
| PyCurl (no SSL) | 0.68 | >15 | 5932 | Curl, LibCurl | >50 | ❎ | ❎ |
| Faster_than_requests | 0.40 | 1 | 999 | 0 | 1 | ✔️ | ✔️ 7, One-Liner |

- Lines Of Code counted using CLOC.

- Direct dependencies of the package when ready to run.

- Benchmarks run on Docker from Dockerfile on this repo.

- Developers counted from the Contributors list of Git.

- Speed is IRL time to complete 10000 HTTP local requests.

- Stats as of year 2020.

- x86_64 64Bit AMD, SSD, Arch Linux.

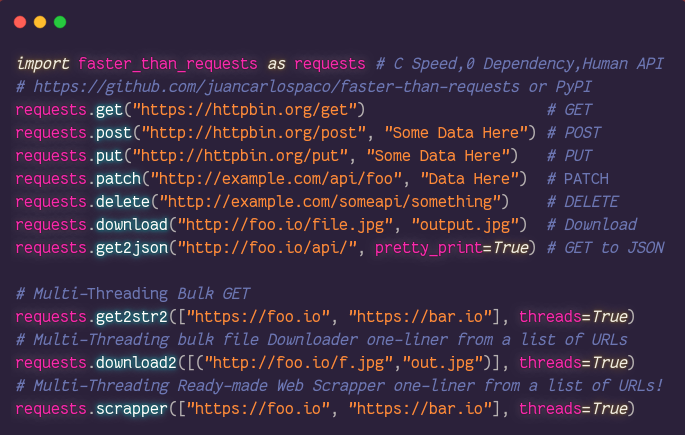

import faster_than_requests as requests

requests.get("http://httpbin.org/get") # GET

requests.post("http://httpbin.org/post", "Some Data Here") # POST

requests.download("http://example.com/foo.jpg", "out.jpg") # Download a file

requests.scraper(["http://foo.io", "http://bar.io"], threads=True) # Multi-Threaded Web Scraper

requests.scraper5(["http://foo.io"], sqlite_file_path="database.db") # URL-to-SQLite Web Scraper

requests.scraper6(["http://python.org"], ["(www|http:|https:)+[^\s]+[\w]"]) # Regex-powered Web Scraper

requests.scraper7("http://python.org", "body > div.someclass a#someid") # CSS Selector Web Scraper

requests.websocket_send("ws://echo.websocket.org", "data here") # WebSockets Binary/Text

Description: Takes a URL string, makes an HTTP GET and returns a list with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://dev.to`.
- `user_agent` User Agent, string type, optional, should not be empty string.
- `max_redirects` Maximum Redirects, int type, optional, defaults to `9`, example `5`, example `1`.
- `proxy_url` Proxy URL, string type, optional, if is `""` then NO Proxy is used, defaults to `""`, example `172.15.256.1:666`.
- `proxy_auth` Proxy Auth, string type, optional, if `proxy_url` is `""` then is ignored, defaults to `""`.
- `timeout` Timeout, int type, optional, Milliseconds precision, defaults to `-1`, example `9999`, example `666`.
- `http_headers` HTTP Headers, List of Tuples type, optional, example `[("key", "value")]`, example `[("DNT", "1")]`.

Examples:

import faster_than_requests as requests

requests.get("http://example.com")

Returns:

Response, list type. The values of the list are string type and can be empty strings. The length of the list is always 7 items, like `[body, type, status, version, url, length, headers]`. You can use `to_json()` to get JSON, `to_dict()` to get a dict, or `to_tuples()` to get a list of tuples.

See Also: get2str() and get2str2()
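The 7-item layout described above maps naturally onto named fields. The sketch below is not the library's `to_dict()`. It is a hypothetical pure-Python helper (`response_to_dict` and `FIELDS` are names invented here) applied to a hand-written sample list, assuming the field order given in the Returns description.

```python
# Field order taken from the "Returns" description above.
FIELDS = ("body", "type", "status", "version", "url", "length", "headers")


def response_to_dict(response):
    """Pair each of the 7 response values with its field name."""
    if len(response) != len(FIELDS):
        raise ValueError(f"expected {len(FIELDS)} items, got {len(response)}")
    return dict(zip(FIELDS, response))


# Hand-written sample shaped like a response; the values are made up.
sample = ["<html></html>", "text/html", "200 OK", "1.1", "http://example.com", "13", "{}"]
print(response_to_dict(sample)["status"])
```

Indexing by name (`["status"]`) rather than by position (`[2]`) tends to keep calling code readable when only one or two fields are needed.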

Description: Takes a URL string, makes an HTTP POST and returns a list with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://dev.to`.
- `body` the Body data, string type, required, can be empty string. To Post Files use this too.
- `multipart_data` MultiPart data, optional, list of tuples type, must not be empty list, example `[("key", "value")]`.
- `user_agent` User Agent, string type, optional, should not be empty string.
- `max_redirects` Maximum Redirects, int type, optional, defaults to `9`, example `5`, example `1`.
- `proxy_url` Proxy URL, string type, optional, if is `""` then NO Proxy is used, defaults to `""`, example `172.15.256.1:666`.
- `proxy_auth` Proxy Auth, string type, optional, if `proxy_url` is `""` then is ignored, defaults to `""`.
- `timeout` Timeout, int type, optional, Milliseconds precision, defaults to `-1`, example `9999`, example `666`.
- `http_headers` HTTP Headers, List of Tuples type, optional, example `[("key", "value")]`, example `[("DNT", "1")]`.

Examples:

import faster_than_requests as requests

requests.post("http://httpbin.org/post", "Some Data Here")

Returns:

Response, list type. The values of the list are string type and can be empty strings. The length of the list is always 7 items, like `[body, type, status, version, url, length, headers]`. You can use `to_json()` to get JSON, `to_dict()` to get a dict, or `to_tuples()` to get a list of tuples.

Description: Takes a URL string, makes an HTTP PUT and returns a list with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://nim-lang.org`.
- `body` the Body data, string type, required, can be empty string.
- `user_agent` User Agent, string type, optional, should not be empty string.
- `max_redirects` Maximum Redirects, int type, optional, defaults to `9`, example `5`, example `1`.
- `proxy_url` Proxy URL, string type, optional, if is `""` then NO Proxy is used, defaults to `""`, example `172.15.256.1:666`.
- `proxy_auth` Proxy Auth, string type, optional, if `proxy_url` is `""` then is ignored, defaults to `""`.
- `timeout` Timeout, int type, optional, Milliseconds precision, defaults to `-1`, example `9999`, example `666`.
- `http_headers` HTTP Headers, List of Tuples type, optional, example `[("key", "value")]`, example `[("DNT", "1")]`.

Examples:

import faster_than_requests as requests

requests.put("http://httpbin.org/put", "Some Data Here")

Returns:

Response, list type. The values of the list are string type and can be empty strings. The length of the list is always 7 items, like `[body, type, status, version, url, length, headers]`. You can use `to_json()` to get JSON, `to_dict()` to get a dict, or `to_tuples()` to get a list of tuples.

Description: Takes a URL string, makes an HTTP DELETE and returns a list with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://nim-lang.org`.
- `user_agent` User Agent, string type, optional, should not be empty string.
- `max_redirects` Maximum Redirects, int type, optional, defaults to `9`, example `5`, example `1`.
- `proxy_url` Proxy URL, string type, optional, if is `""` then NO Proxy is used, defaults to `""`, example `172.15.256.1:666`.
- `proxy_auth` Proxy Auth, string type, optional, if `proxy_url` is `""` then is ignored, defaults to `""`.
- `timeout` Timeout, int type, optional, Milliseconds precision, defaults to `-1`, example `9999`, example `666`.
- `http_headers` HTTP Headers, List of Tuples type, optional, example `[("key", "value")]`, example `[("DNT", "1")]`.

Examples:

import faster_than_requests as requests

requests.delete("http://example.com/api/something")

Returns:

Response, list type. The values of the list are string type and can be empty strings. The length of the list is always 7 items, like `[body, type, status, version, url, length, headers]`. You can use `to_json()` to get JSON, `to_dict()` to get a dict, or `to_tuples()` to get a list of tuples.

Description: Takes a URL string, makes an HTTP PATCH and returns a list with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://archlinux.org`.
- `body` the Body data, string type, required, can be empty string.
- `user_agent` User Agent, string type, optional, should not be empty string.
- `max_redirects` Maximum Redirects, int type, optional, defaults to `9`, example `5`, example `1`.
- `proxy_url` Proxy URL, string type, optional, if is `""` then NO Proxy is used, defaults to `""`, example `172.15.256.1:666`.
- `proxy_auth` Proxy Auth, string type, optional, if `proxy_url` is `""` then is ignored, defaults to `""`.
- `timeout` Timeout, int type, optional, Milliseconds precision, defaults to `-1`, example `9999`, example `666`.
- `http_headers` HTTP Headers, List of Tuples type, optional, example `[("key", "value")]`, example `[("DNT", "1")]`.

Examples:

import faster_than_requests as requests

requests.patch("http://example.com", "My Body Data Here")

Returns:

Response, list type. The values of the list are string type and can be empty strings. The length of the list is always 7 items, like `[body, type, status, version, url, length, headers]`. You can use `to_json()` to get JSON, `to_dict()` to get a dict, or `to_tuples()` to get a list of tuples.

Description: Takes a URL string, makes an HTTP HEAD and returns a list with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://nim-lang.org`.
- `user_agent` User Agent, string type, optional, should not be empty string.
- `max_redirects` Maximum Redirects, int type, optional, defaults to `9`, example `5`, example `1`.
- `proxy_url` Proxy URL, string type, optional, if is `""` then NO Proxy is used, defaults to `""`, example `172.15.256.1:666`.
- `proxy_auth` Proxy Auth, string type, optional, if `proxy_url` is `""` then is ignored, defaults to `""`.
- `timeout` Timeout, int type, optional, Milliseconds precision, defaults to `-1`, example `9999`, example `666`.
- `http_headers` HTTP Headers, List of Tuples type, optional, example `[("key", "value")]`, example `[("DNT", "1")]`.

Examples:

import faster_than_requests as requests

requests.head("http://example.com/api/something")

Returns:

Response, list type. The values of the list are string type and can be empty strings. The length of the list is always 7 items, like `[body, type, status, version, url, length, headers]`. You can use `to_json()` to get JSON, `to_dict()` to get a dict, or `to_tuples()` to get a list of tuples.

Description: Convert the response to dict.

Arguments:

- `ftr_response` Response from any of the functions that return a response.

Returns: Response, dict type.

Description: Convert the response to Pretty-Printed JSON.

Arguments:

- `ftr_response` Response from any of the functions that return a response.

Returns: Response, Pretty-Printed JSON.

Description: Convert the response to a list of tuples.

Arguments:

- `ftr_response` Response from any of the functions that return a response.

Returns: Response, list of tuples.

Description: Multi-Threaded Ready-Made URL-Deduplicating Web Scraper from a list of URLs.

All arguments are optional, it only needs the URL to get to work. Scraper is designed to be like a 2-Step Web Scraper, that makes a first pass collecting all URL Links and then a second pass actually fetching those URLs. Requests are processed asynchronously. This means that it doesn’t need to wait for a request to be finished to be processed.

Arguments:

- `list_of_urls` List of URLs, URL must be string type, required, must not be empty list, example `["http://example.io"]`.
- `html_tag` HTML Tag to parse, string type, optional, defaults to `"a"` being Links, example `"h1"`.
- `case_insensitive` Case Insensitive, `True` for Case Insensitive, boolean type, optional, defaults to `True`, example `True`.
- `deduplicate_urls` Deduplicate `list_of_urls` removing repeated URLs, boolean type, optional, defaults to `False`, example `False`.
- `threads` Passing `threads = True` uses Multi-Threading, `threads = False` will Not use Multi-Threading, boolean type, optional, omitting it will Not use Multi-Threading.

Examples:

import faster_than_requests as requests

requests.scraper(["https://nim-lang.org", "http://example.com"], threads=True)

Returns: Scraped Webs.
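The 2-step design described above (collect links first, fetch them second) together with URL deduplication can be illustrated with the standard library alone. This is only a sketch of the idea, not the library's implementation: `LinkCollector`, `collect_links` and `deduplicate` are hypothetical names, and the HTML is an in-memory string so nothing is downloaded.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag, mimicking the first scraping pass."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        # HTMLParser lowercases tag and attribute names before calling this.
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def collect_links(html):
    collector = LinkCollector()
    collector.feed(html)
    return collector.links


def deduplicate(urls):
    """Drop repeated URLs while keeping first-seen order."""
    return list(dict.fromkeys(urls))


page = '<a href="http://foo.io">Foo</a> <A HREF="http://bar.io">Bar</a> <a href="http://foo.io">Again</a>'
to_fetch = deduplicate(collect_links(page))
print(to_fetch)  # a second pass would fetch each of these URLs
```

Deduplicating before the second pass avoids fetching the same page twice, which is presumably the purpose of the `deduplicate_urls` argument.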

Description: Multi-Tag Ready-Made URL-Deduplicating Web Scraper from a list of URLs. All arguments are optional, it only needs the URL to get to work. Scraper is designed to be like a 2-Step Web Scraper, that makes a first pass collecting all URL Links and then a second pass actually fetching those URLs. Requests are processed asynchronously. This means that it doesn’t need to wait for a request to be finished to be processed. You can think of this scraper as a parallel evolution of the original scraper.

Arguments:

- `list_of_urls` List of URLs, URL must be string type, required, must not be empty list, example `["http://example.io"]`.
- `list_of_tags` List of HTML Tags to parse, List type, optional, defaults to `["a"]` being Links, example `["h1", "h2"]`.
- `case_insensitive` Case Insensitive, `True` for Case Insensitive, boolean type, optional, defaults to `True`, example `True`.
- `deduplicate_urls` Deduplicate `list_of_urls` removing repeated URLs, boolean type, optional, defaults to `False`, example `False`.
- `verbose` Verbose, print to terminal console the progress, bool type, optional, defaults to `True`, example `False`.
- `delay` Delay between a download and the next one, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value, example `42`.
- `threads` Passing `threads = True` uses Multi-Threading, `threads = False` will Not use Multi-Threading, boolean type, optional, omitting it will Not use Multi-Threading.
- `agent` User Agent, string type, optional, must not be empty string.
- `redirects` Maximum Redirects, integer type, optional, defaults to `5`, must be positive integer.
- `timeout` Timeout, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `-1`, must be a positive integer value, example `42`.
- `header` HTTP Header, any HTTP Headers can be put here, list type, optional, example `[("key", "value")]`.
- `proxy_url` HTTPS Proxy Full URL, string type, optional, must not be empty string.
- `proxy_auth` HTTPS Proxy Authentication, string type, optional, defaults to `""`, empty string is ignored.

Examples:

import faster_than_requests as requests

requests.scraper2(["https://nim-lang.org", "http://example.com"], list_of_tags=["h1", "h2"], case_insensitive=False)

Returns: Scraped Webs.

Description: Multi-Tag Ready-Made URL-Deduplicating Web Scraper from a list of URLs.

This Scraper is designed with lots of extra options on the arguments. All arguments are optional, it only needs the URL to get to work. Scraper is designed to be like a 2-Step Web Scraper, that makes a first pass collecting all URL Links and then a second pass actually fetching those URLs. You can think of this scraper as a parallel evolution of the original scraper.

Arguments:

- `list_of_urls` List of URLs, URL must be string type, required, must not be empty list, example `["http://example.io"]`.
- `list_of_tags` List of HTML Tags to parse, List type, optional, defaults to `["a"]` being Links, example `["h1", "h2"]`.
- `case_insensitive` Case Insensitive, `True` for Case Insensitive, boolean type, optional, defaults to `True`, example `True`.
- `deduplicate_urls` Deduplicate `list_of_urls` removing repeated URLs, boolean type, optional, defaults to `False`, example `False`.
- `start_with` Match at the start of the line, similar to `str().startswith()`, string type, optional, example `"<cite "`.
- `ends_with` Match at the end of the line, similar to `str().endswith()`, string type, optional, example `"</cite>"`.
- `delay` Delay between a download and the next one, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value, example `42`.
- `line_start` Slice the line at the start by this index, integer type, optional, defaults to `0` meaning no slicing since strings start at index 0, example `3` cuts off 3 letters of the line at the start.
- `line_end` Slice the line at the end by this reverse index, integer type, optional, defaults to `1` meaning no slicing since strings end at reverse index 1, example `9` cuts off 9 letters of the line at the end.
- `pre_replacements` List of tuples of strings to replace before parsing, replacements are in parallel, List type, optional, example `[("old", "new"), ("red", "blue")]` will replace `"old"` with `"new"` and will replace `"red"` with `"blue"`.
- `post_replacements` List of tuples of strings to replace after parsing, replacements are in parallel, List type, optional, example `[("old", "new"), ("red", "blue")]` will replace `"old"` with `"new"` and will replace `"red"` with `"blue"`.
- `agent` User Agent, string type, optional, must not be empty string.
- `redirects` Maximum Redirects, integer type, optional, defaults to `5`, must be positive integer.
- `timeout` Timeout, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `-1`, must be a positive integer value, example `42`.
- `header` HTTP Header, any HTTP Headers can be put here, list type, optional, example `[("key", "value")]`.
- `proxy_url` HTTPS Proxy Full URL, string type, optional, must not be empty string.
- `proxy_auth` HTTPS Proxy Authentication, string type, optional, defaults to `""`, empty string is ignored.
- `verbose` Verbose, print to terminal console the progress, bool type, optional, defaults to `True`, example `False`.

Examples:

import faster_than_requests as requests

requests.scraper3(["https://nim-lang.org", "http://example.com"], list_of_tags=["h1", "h2"], case_insensitive=False)

Returns: Scraped Webs.
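The `pre_replacements` and `post_replacements` arguments are described as replacing "in parallel". The sketch below shows one way to read that, assuming it means every pair is applied in a single pass so that the output of one replacement is never fed into another. `replace_parallel` is a hypothetical helper written for this illustration, not a function of the library.

```python
import re


def replace_parallel(text, replacements):
    """Apply all (old, new) pairs in one pass over the text."""
    if not replacements:
        return text
    mapping = dict(replacements)
    # Longest keys first so that overlapping keys prefer the longer match.
    keys = sorted(mapping, key=len, reverse=True)
    pattern = re.compile("|".join(re.escape(old) for old in keys))
    return pattern.sub(lambda match: mapping[match.group(0)], text)


print(replace_parallel("old red", [("old", "new"), ("red", "blue")]))
print(replace_parallel("a b", [("a", "b"), ("b", "a")]))
```

The second call is where the difference from chained `str.replace()` calls shows up: a parallel pass swaps the two letters, whereas chaining would turn both into the same letter.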

Description: Images and Photos Ready-Made Web Scraper from a list of URLs.

The Images and Photos scraped from the first URL will be put into a new sub-folder named 0,

Images and Photos scraped from the second URL will be put into a new sub-folder named 1, and so on.

All arguments are optional, it only needs the URL to get to work.

You can think of this scraper as a parallel evolution of the original scraper.

Arguments:

- `list_of_urls` List of URLs, URL must be string type, required, must not be empty list, example `["https://unsplash.com/s/photos/cat", "https://unsplash.com/s/photos/dog"]`.
- `case_insensitive` Case Insensitive, `True` for Case Insensitive, boolean type, optional, defaults to `True`, example `True`.
- `deduplicate_urls` Deduplicate `list_of_urls` removing repeated URLs, boolean type, optional, defaults to `False`, example `False`.
- `visited_urls` Do not visit same URL twice, even if redirected into, keeps track of visited URLs, bool type, optional, defaults to `True`.
- `delay` Delay between a download and the next one, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value, example `42`.
- `folder` Directory to download Images and Photos, string type, optional, defaults to current folder, must not be empty string, example `/tmp`.
- `force_extension` Force file extension to be this file extension, string type, optional, defaults to `".jpg"`, must not be empty string, example `".png"`.
- `https_only` Force to download images on Secure HTTPS only ignoring plain HTTP, sometimes HTTPS may redirect to HTTP, bool type, optional, defaults to `False`, example `True`.
- `html_output` Collect all scraped Images and Photos into 1 HTML file with all elements scraped, bool type, optional, defaults to `True`, example `False`.
- `csv_output` Collect all scraped URLs into 1 CSV file with all links scraped, bool type, optional, defaults to `True`, example `False`.
- `verbose` Verbose, print to terminal console the progress, bool type, optional, defaults to `True`, example `False`.
- `print_alt` print to terminal console the `alt` attribute of the Images and Photos, bool type, optional, defaults to `False`, example `True`.
- `picture` Scrape images from the new HTML5 `<picture>` tags instead of `<img>` tags, `<picture>` are Responsive images for several resolutions but also you get duplicated images, bool type, optional, defaults to `False`, example `True`.
- `agent` User Agent, string type, optional, must not be empty string.
- `redirects` Maximum Redirects, integer type, optional, defaults to `5`, must be positive integer.
- `timeout` Timeout, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `-1`, must be a positive integer value, example `42`.
- `header` HTTP Header, any HTTP Headers can be put here, list type, optional, example `[("key", "value")]`.
- `proxy_url` HTTPS Proxy Full URL, string type, optional, must not be empty string.
- `proxy_auth` HTTPS Proxy Authentication, string type, optional, defaults to `""`, empty string is ignored.

Examples:

import faster_than_requests as requests

requests.scraper4(["https://unsplash.com/s/photos/cat", "https://unsplash.com/s/photos/dog"])

Returns: None.

Description: Recursive Web Scraper to SQLite Database, you give it a URL, it gives back an SQLite database.

SQLite database can be visualized with any SQLite WYSIWYG, like https://sqlitebrowser.org. If the script gets interrupted, like with CTRL+C, it will try its best to keep data consistent. Additionally it will create a CSV file with all the scraped URLs. HTTP Headers are stored as Pretty-Printed JSON. Date and Time are stored as Unix Timestamps. All arguments are optional, it only needs the URL and SQLite file path to get to work. You can think of this scraper as a parallel evolution of the original scraper.

Arguments:

- `list_of_urls` List of URLs, URL must be string type, required, must not be empty list, example `["https://unsplash.com/s/photos/cat", "https://unsplash.com/s/photos/dog"]`.
- `sqlite_file_path` Full file path to a new SQLite Database, must be `.db` file extension, string type, required, must not be empty string, example `"scraped_data.db"`.
- `skip_ends_with` Skip the URL if ends with this pattern, list type, optional, must not be empty list, example `[".jpg", ".pdf"]`.
- `case_insensitive` Case Insensitive, `True` for Case Insensitive, boolean type, optional, defaults to `True`, example `True`.
- `deduplicate_urls` Deduplicate `list_of_urls` removing repeated URLs, boolean type, optional, defaults to `False`, example `False`.
- `visited_urls` Do not visit same URL twice, even if redirected into, keeps track of visited URLs, bool type, optional, defaults to `True`.
- `delay` Delay between a download and the next one, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value, example `42`.
- `https_only` Force to download images on Secure HTTPS only ignoring plain HTTP, sometimes HTTPS may redirect to HTTP, bool type, optional, defaults to `False`, example `True`.
- `only200` Only commit to Database the successful scraping pages, ignore all errors, bool type, optional, example `True`.
- `agent` User Agent, string type, optional, must not be empty string.
- `redirects` Maximum Redirects, integer type, optional, defaults to `5`, must be positive integer.
- `timeout` Timeout, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `-1`, must be a positive integer value, example `42`.
- `max_loops` Maximum total Loops to do while scraping, like a global guard for infinite redirections, integer type, optional, example `999`.
- `max_deep` Maximum total scraping Recursive Deep, like a global guard for infinite deep recursivity, integer type, optional, example `999`.
- `header` HTTP Header, any HTTP Headers can be put here, list type, optional, example `[("key", "value")]`.
- `proxy_url` HTTPS Proxy Full URL, string type, optional, must not be empty string.
- `proxy_auth` HTTPS Proxy Authentication, string type, optional, defaults to `""`, empty string is ignored.

Examples:

import faster_than_requests as requests

requests.scraper5(["https://example.com"], "scraped_data.db")

Returns: None.
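Besides a WYSIWYG browser, the resulting file can be inspected with Python's built-in `sqlite3` module. The table and column names are not documented in this section, so the sketch below makes no assumption about them: `list_tables` (a hypothetical helper) asks SQLite which tables exist, and `unix_to_datetime` (also hypothetical) converts a stored Unix timestamp to a readable UTC date.

```python
import sqlite3
from datetime import datetime, timezone


def list_tables(sqlite_file_path):
    """Return the table names stored in an SQLite file."""
    connection = sqlite3.connect(sqlite_file_path)
    try:
        rows = connection.execute(
            "SELECT name FROM sqlite_master WHERE type = 'table' ORDER BY name"
        ).fetchall()
    finally:
        connection.close()
    return [name for (name,) in rows]


def unix_to_datetime(timestamp):
    """Convert a Unix timestamp to a timezone-aware UTC datetime."""
    return datetime.fromtimestamp(timestamp, tz=timezone.utc)


print(unix_to_datetime(0).isoformat())
```

After a scraping run, `list_tables("scraped_data.db")` should show which tables to query next.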

Description: Regex powered Web Scraper from a list of URLs. Scrape web content using a list of Perl Compatible Regular Expressions (PCRE standard). You can configure the Regular Expressions to be case insensitive or multiline or extended.

This Scraper is designed for developers that know Regular Expressions. Learn Regular Expressions.

All arguments are optional, it only needs the URL and the Regex to get to work. You can think of this scraper as a parallel evolution of the original scraper.

Regex Arguments: (Arguments focused on Regular Expression parsing and matching)

- `list_of_regex` List of Perl Compatible Regular Expressions (PCRE standard) to match the URL against, List type, required, example `["(www|http:|https:)+[^\s]+[\w]"]`.
- `case_insensitive` Case Insensitive Regular Expressions, do caseless matching, `True` for Case Insensitive, boolean type, optional, defaults to `False`, example `True`.
- `multiline` Multi-Line Regular Expressions, `^` and `$` match newlines within data, boolean type, optional, defaults to `False`, example `True`.
- `extended` Extended Regular Expressions, ignore all whitespaces and `#` comments, boolean type, optional, defaults to `False`, example `True`.
- `dot` Dot `.` matches anything, including new lines, boolean type, optional, defaults to `False`, example `True`.
- `start_with` Perl Compatible Regular Expression to match at the start of the line, similar to `str().startswith()` but with Regular Expressions, string type, optional.
- `ends_with` Perl Compatible Regular Expression to match at the end of the line, similar to `str().endswith()` but with Regular Expressions, string type, optional.
- `post_replacement_regex` Perl Compatible Regular Expressions (PCRE standard) to replace after parsing, string type, optional, this option works with `post_replacement_by`, this is like a Regex post-processing, this option is for experts on Regular Expressions.
- `post_replacement_by` string to replace by after parsing, string type, optional, this option works with `post_replacement_regex`, this is like a Regex post-processing, this option is for experts on Regular Expressions.
- `re_start` Perl Compatible Regular Expression matches start at this index, positive integer type, optional, defaults to `0`, this option is for experts on Regular Expressions.

Arguments:

- `list_of_urls` List of URLs, URL must be string type, required, must not be empty list, example `["http://example.io"]`.
- `deduplicate_urls` Deduplicate `list_of_urls` removing repeated URLs, boolean type, optional, defaults to `False`, example `False`.
- `delay` Delay between a download and the next one, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value, example `42`.
- `agent` User Agent, string type, optional, must not be empty string.
- `redirects` Maximum Redirects, integer type, optional, defaults to `5`, must be positive integer.
- `timeout` Timeout, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `-1`, must be a positive integer value, example `42`.
- `header` HTTP Header, any HTTP Headers can be put here, list type, optional, example `[("key", "value")]`.
- `proxy_url` HTTPS Proxy Full URL, string type, optional, must not be empty string.
- `proxy_auth` HTTPS Proxy Authentication, string type, optional, defaults to `""`, empty string is ignored.
- `verbose` Verbose, print to terminal console the progress, bool type, optional, defaults to `True`, example `False`.

Examples:

import faster_than_requests as requests

requests.scraper6(["http://nim-lang.org", "http://python.org"], ["(www|http:|https:)+[^\s]+[\w]"])

Returns: Scraped Webs.
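To try a pattern before handing it to the scraper, the four boolean Regex arguments can be approximated with Python's `re` flags. This is a rough local sketch, not the library's matching code: Python's `re` is similar to PCRE but not identical, and `build_flags` and `find_all` are hypothetical helpers that work on an in-memory string.

```python
import re

# Same pattern as the example above, written as a raw string.
PATTERN = r"(www|http:|https:)+[^\s]+[\w]"


def build_flags(case_insensitive=False, multiline=False, extended=False, dot=False):
    """Translate the boolean options into the closest Python re flags."""
    flags = 0
    if case_insensitive:
        flags |= re.IGNORECASE
    if multiline:
        flags |= re.MULTILINE
    if extended:
        flags |= re.VERBOSE
    if dot:
        flags |= re.DOTALL
    return flags


def find_all(text, list_of_regex, **options):
    """Return the full text of every match of every pattern."""
    flags = build_flags(**options)
    matches = []
    for regex in list_of_regex:
        matches.extend(m.group(0) for m in re.finditer(regex, text, flags))
    return matches


text = "Docs at https://nim-lang.org and HTTP://PYTHON.ORG today"
print(find_all(text, [PATTERN]))
print(find_all(text, [PATTERN], case_insensitive=True))
```

With the default options only the lowercase URL matches; turning on `case_insensitive` also picks up the uppercase one.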

Description: CSS Selector powered Web Scraper. Scrape web content using a CSS Selector. The CSS Syntax does NOT take Regex nor Regex-like syntax nor literal tag attribute values.

All arguments are optional, it only needs the URL and CSS Selector to get to work. You can think of this scraper as a parallel evolution of the original scraper.

Arguments:

- `url` The URL, string type, required, must not be empty string, example `"http://python.org"`.
- `css_selector` CSS Selector, string type, required, must not be empty string, example `"body nav.class ul.menu > li > a"`.
- `agent` User Agent, string type, optional, must not be empty string.
- `redirects` Maximum Redirects, integer type, optional, defaults to `9`, must be positive integer.
- `timeout` Timeout, Milliseconds precision (1000 = 1 Second), integer type, optional, defaults to `-1`, must be a positive integer value, example `42`.
- `header` HTTP Header, any HTTP Headers can be put here, list type, optional, example `[("key", "value")]`.
- `proxy_url` HTTPS Proxy Full URL, string type, optional, must not be empty string.
- `proxy_auth` HTTPS Proxy Authentication, string type, optional, defaults to `""`, empty string is ignored.

Examples:

import faster_than_requests as requests

requests.scraper7("http://python.org", "body > div.class a#someid")

import faster_than_requests as requests

requests.scraper7("https://nim-lang.org", "a.pure-menu-link")

[

'<a class="pure-menu-link" href="/blog.html">Blog</a>',

'<a class="pure-menu-link" href="/features.html">Features</a>',

'<a class="pure-menu-link" href="/install.html">Download</a>',

'<a class="pure-menu-link" href="/learn.html">Learn</a>',

'<a class="pure-menu-link" href="/documentation.html">Documentation</a>',

'<a class="pure-menu-link" href="https://forum.nim-lang.org">Forum</a>',

'<a class="pure-menu-link" href="https://github.com/nim-lang/Nim">Source</a>'

]

More examples: https://github.com/juancarlospaco/faster-than-requests/blob/master/examples/web_scraper_via_css_selectors.py

Returns: Scraped Webs.

Description: WebSocket Ping.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `"ws://echo.websocket.org"`.
- `data` data to send, string type, optional, can be empty string, default is empty string, example `""`.
- `hangup` Close the Socket without sending a close packet, optional, default is `False`, not sending close packet can be faster.

Examples:

import faster_than_requests as requests

requests.websocket_ping("ws://echo.websocket.org")

Returns: Response, string type, can be empty string.

Description: WebSocket send data, binary or text.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `"ws://echo.websocket.org"`.
- `data` data to send, string type, optional, can be empty string, default is empty string, example `""`.
- `is_text` if `True` data is sent as Text else as Binary, optional, default is `False`.
- `hangup` Close the Socket without sending a close packet, optional, default is `False`, not sending close packet can be faster.

Examples:

import faster_than_requests as requests

requests.websocket_send("ws://echo.websocket.org", "data here")

Returns: Response, string type.

Description: Takes a URL string, makes an HTTP GET and returns a string with the response Body.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://archlinux.org`.

Examples:

import faster_than_requests as requests

requests.get2str("http://example.com")

Returns: Response body, string type, can be empty string.

Description:

Takes a list of URLs, makes 1 HTTP GET for each URL, and returns a list of strings with the response Body.

This makes all GET fully parallel, in a single Thread, in a single Process.

Arguments:

- `list_of_urls` A list of the remote URLs, list type, required. Objects inside the list must be string type.

Examples:

import faster_than_requests as requests

requests.get2str2(["http://example.com/foo", "http://example.com/bar"]) # Parallel GET

Returns:

List of response bodies, list type, values of the list are string type,

values of the list can be empty string, can be empty list.
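"Fully parallel, in a single Thread, in a single Process" describes concurrency without threads. The library's internals are not shown here, so the sketch below uses Python's `asyncio` purely as an analogy: `fake_get` is a stand-in that sleeps instead of touching the network, and `get_all` (also a hypothetical name) starts every request at once and gathers the bodies in input order.

```python
import asyncio


async def fake_get(url, delay=0.01):
    """Stand-in for an HTTP GET: waits briefly, then returns a fake body."""
    await asyncio.sleep(delay)
    return f"body of {url}"


async def get_all(list_of_urls):
    """Start every request at once and wait for all of them on one thread."""
    return await asyncio.gather(*(fake_get(url) for url in list_of_urls))


bodies = asyncio.run(get_all(["http://example.com/foo", "http://example.com/bar"]))
print(bodies)
```

Because the waits overlap, the total time stays close to the slowest single request rather than the sum of all of them.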

Description: Takes a URL, makes an HTTP GET, returns a dict with the response Body.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://alpinelinux.org`.

Examples:

import faster_than_requests as requests

requests.get2dict("http://example.com")

Returns:

Response, dict type, values of the dict are string type,

values of the dict can be empty string, but keys are always consistent.

Description: Takes a URL, makes an HTTP GET, returns a Minified Computer-friendly single-line JSON with the response Body.

Arguments:

- `url` the remote URL, string type, required, must not be empty string, example `https://alpinelinux.org`.
- `pretty_print` Pretty Printed JSON, optional, defaults to `False`.

Examples:

import faster_than_requests as requests

requests.get2json("http://example.com", pretty_print=True)

Returns: Response Body, Pretty-Printed JSON.
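The difference between minified single-line JSON and pretty-printed JSON can be seen with Python's own `json` module. This is only an illustration on a made-up dict: the exact whitespace and key order produced by the library are not specified here.

```python
import json

# Made-up data standing in for a response.
response = {"status": "200 OK", "url": "http://example.com"}

# Minified: no spaces after separators, everything on one line.
minified = json.dumps(response, separators=(",", ":"))

# Pretty-printed: one key per line, indented for human readers.
pretty = json.dumps(response, indent=2)

print(minified)
print(pretty)
```

Both strings decode to the same data, so the choice only affects size and readability.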

Description: Takes a URL, makes an HTTP POST, returns the response Body as string type.

Arguments:

- `url` the remote URL, string type, required, must not be empty string.
- `body` the Body data, string type, required, can be empty string.
- `multipart_data` MultiPart data, optional, list of tuples type, must not be empty list, example `[("key", "value")]`.

Examples:

import faster_than_requests as requests

requests.post2str("http://example.com/api/foo", "My Body Data Here")

Returns: Response body, string type, can be empty string.

Description: Takes a URL, makes an HTTP POST on that URL, returns a dict with the response.

Arguments:

- `url` the remote URL, string type, required, must not be empty string.
- `body` the Body data, string type, required, can be empty string.
- `multipart_data` MultiPart data, optional, list of tuples type, must not be empty list, example `[("key", "value")]`.

Examples:

import faster_than_requests as requests

requests.post2dict("http://example.com/api/foo", "My Body Data Here")

Returns:

Response, dict type, values of the dict are string type,

values of the dict can be empty string, but keys are always consistent.

Description: Takes a URL, makes an HTTP POST, returns the response as a JSON string.

Arguments:

- `url` the remote URL, string type, required, must not be empty string.
- `body` the Body data, string type, required, can be empty string.
- `multipart_data` MultiPart data, optional, list of tuples type, must not be empty list, example `[("key", "value")]`.
- `pretty_print` Pretty Printed JSON, optional, defaults to `False`.

Examples:

import faster_than_requests as requests

requests.post2json("http://example.com/api/foo", "My Body Data Here")

Returns: Response, string type.

Description: Takes a list of URLs, makes 1 HTTP POST for each URL, returns a list of responses.

Arguments:

- `list_of_urls` the remote URLs, list type, required, the objects inside the list must be string type.
- `body` the Body data, string type, required, can be empty string.
- `multipart_data` MultiPart data, optional, list of tuples type, must not be empty list, example `[("key", "value")]`.

Examples:

import faster_than_requests as requests

requests.post2list("http://example.com/api/foo", "My Body Data Here")

Returns:

List of response bodies, list type, values of the list are string type,

values of the list can be empty string, can be empty list.

Description: Takes a URL, makes an HTTP GET, and saves the response body to a local file.

Arguments:

- `url` the remote URL, string type, required, must not be empty string.
- `filename` the local filename, string type, required, must not be empty string, full path recommended, can be relative path, includes file extension.

Examples:

import faster_than_requests as requests

requests.download("http://example.com/api/foo", "my_file.ext")

Returns: None.

Description: Takes a list of URLs, makes 1 HTTP GET Download for each URL of the list.

Arguments:

- `list_of_files` list of tuples, tuples must be 2 items long, first item is URL and second item is filename. The remote URL, string type, required, must not be empty string, is the first item on the tuple. The local filename, string type, required, must not be empty string, can be full path, can be relative path, must include file extension.
- `delay` Delay between a download and the next one, MicroSeconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value.
- `threads` Passing `threads = True` uses Multi-Threading, `threads = False` will Not use Multi-Threading, omitting it will Not use Multi-Threading.

Examples:

import faster_than_requests as requests

requests.download2([("http://example.com/cat.jpg", "kitten.jpg"), ("http://example.com/dog.jpg", "doge.jpg")])

Returns: None.

Description:

Takes a list of URLs, makes 1 HTTP GET Download for each URL of the list.

It retries in a loop until the file is downloaded or tries reaches 0, whichever happens first.

If all retries have failed and tries is 0, it errors out.

Arguments:

- `list_of_files` list of tuples, tuples must be 2 items long, first item is URL and second item is filename. The remote URL, string type, required, must not be empty string, is the first item on the tuple. The local filename, string type, required, must not be empty string, can be full path, can be relative path, must include file extension.
- `delay` Delay between a download and the next one, MicroSeconds precision (1000 = 1 Second), integer type, optional, defaults to `0`, must be a positive integer value.
- `tries` how many Retries to try, positive integer type, optional, defaults to `9`, must be a positive integer value.
- `backoff` Back-Off between retries, positive integer type, optional, defaults to `2`, must be a positive integer value.
- `jitter` Jitter applied to the Back-Off between retries (Modulo math operation), positive integer type, optional, defaults to `2`, must be a positive integer value.
- `verbose` be Verbose, bool type, optional, defaults to `True`.

Returns: None.
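The retry behaviour described above (a limited number of tries, a wait that grows by a back-off factor, and some jitter) can be sketched in plain Python. This is an illustration only, not the library's implementation: `retry_with_backoff`, `flaky`, the use of seconds, and the way jitter is added are all assumptions made for the sketch.

```python
import random
import time


def retry_with_backoff(action, tries=9, delay=1, backoff=2, jitter=2,
                       sleep=time.sleep, verbose=True):
    """Call action() until it succeeds or the tries are exhausted.

    Hypothetical helper: the wait is multiplied by `backoff` after each
    failure, and a random value from range(jitter) is added to each wait.
    """
    wait = delay
    for remaining in range(tries, 0, -1):
        try:
            return action()
        except Exception as error:
            if remaining == 1:
                raise  # No tries left: propagate the last error.
            pause = (wait + random.randrange(jitter)) if jitter > 0 else wait
            if verbose:
                print(f"Retry: {remaining - 1} left, waiting {pause} ({error})")
            sleep(pause)
            wait *= backoff


attempts = []


def flaky():
    # Stand-in for a download that fails twice and then succeeds.
    attempts.append(1)
    if len(attempts) < 3:
        raise OSError("Name or service not known")
    return "done"


waits = []  # Record the waits instead of actually sleeping.
result = retry_with_backoff(flaky, tries=5, delay=1, backoff=2, jitter=0,
                            sleep=waits.append, verbose=False)
print(result, waits)
```

Passing `sleep=waits.append` keeps the sketch fast and makes the growing waits visible without real pauses.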

Examples:

import faster_than_requests as requests

requests.download3(

[("http://INVALID/cat.jpg", "kitten.jpg"), ("http://INVALID/dog.jpg", "doge.jpg")],

delay = 1, tries = 9, backoff = 2, jitter = 2, verbose = True,

)

Examples of Failed download output (intended):

$ python3 example_fail_all_retry.py

Retry: 3 of 3

(url: "http://NONEXISTENT", filename: "a.json")

No such file or directory

Additional info: "Name or service not known"

Retrying in 64 microseconds...

Retry: 2 of 3

(url: "http://NONEXISTENT", filename: "a.json")

No such file or directory

Additional info: "Name or service not known"

Retrying in 128 microseconds (Warning: This is the last Retry!).

Retry: 1 of 3

(url: "http://NONEXISTENT", filename: "a.json")

No such file or directory

Additional info: "Name or service not known"

Retrying in 256 microseconds (Warning: This is the last Retry!).

Traceback (most recent call last):

File "example_fail_all_retry.py", line 3, in <module>

downloader.download3()

...

$

Description:

Set the HTTP Headers from the arguments.

This is for the functions that do NOT allow http_headers as an argument.

Arguments:

- `http_headers` HTTP Headers, List of Tuples type, required, example `[("key", "value")]`, example `[("DNT", "1")]`. List of tuples, tuples must be 2 items long, must not be empty list, must not be empty tuple, the first item of the tuple is the key and second item of the tuple is value, keys must not be empty string, values can be empty string, both must be stripped.

Examples:

import faster_than_requests as requests

requests.set_headers(headers = [("key", "value")])

import faster_than_requests as requests

requests.set_headers([("key0", "value0"), ("key1", "value1")])

import faster_than_requests as requests

requests.set_headers([("content-type", "text/plain"), ("dnt", "1")])

Returns: None.
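The rules listed for `http_headers` can be checked before calling the library. The following is a small sketch under the assumption that you want to validate on the Python side; `validate_headers` and `is_valid` are hypothetical helpers, not part of faster_than_requests.

```python
def validate_headers(http_headers):
    """Check a list of (key, value) tuples against the documented rules."""
    if not isinstance(http_headers, list) or not http_headers:
        raise ValueError("http_headers must be a non-empty list")
    for item in http_headers:
        if not isinstance(item, tuple) or len(item) != 2:
            raise ValueError(f"expected a 2-item tuple, got {item!r}")
        key, value = item
        if not isinstance(key, str) or not isinstance(value, str):
            raise ValueError("keys and values must be strings")
        if not key:
            raise ValueError("keys must not be empty strings")
        if key != key.strip() or value != value.strip():
            raise ValueError(f"key and value must be stripped: {item!r}")
    return http_headers


def is_valid(http_headers):
    """Return True when validate_headers() accepts the list."""
    try:
        validate_headers(http_headers)
        return True
    except ValueError:
        return False


print(is_valid([("DNT", "1")]))        # True
print(is_valid([(" key", "value")]))   # False: the key is not stripped
```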

Description:

Takes MultiPart Data and returns a string representation. Converts MultipartData to 1 human readable string.

The human-friendly representation is not machine-friendly, so is not Serialization nor Stringification, just for humans.

It is faster and different than stdlib parse_multipart.

Arguments:

- `multipart_data` MultiPart data, optional, list of tuples type, must not be empty list, example `[("key", "value")]`.

Examples:

import faster_than_requests as requests

requests.multipartdata2str([("key", "value")])

Returns: string.

Description:

Takes data and returns a standard Base64 Data URI (RFC-2397).

At the time of writing Python stdlib does not have a function that returns Data URI (RFC-2397) on base64 module.

This can be used as URL on HTML/CSS/JS. It is faster and different than stdlib base64.

Arguments:

- `data` Arbitrary Data, string type, required.
- `mime` MIME Type of `data`, string type, required, example `"text/plain"`.
- `encoding` Encoding, string type, optional, defaults to `"utf-8"`, example `"utf-8"`, `"utf-8"` is recommended.

Examples:

import faster_than_requests as requests

requests.datauri("Nim", "text/plain")

Returns: string.
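For reference, a Base64 Data URI in the RFC-2397 shape can be built with the standard library as follows. This is a sketch for comparison, not the library's code; `data_uri` is a hypothetical helper, and the exact string returned by `datauri` (for example whether it includes `charset`) may differ.

```python
import base64


def data_uri(data, mime, encoding="utf-8"):
    """Build a Base64 data URI (RFC 2397 shape) from a string."""
    encoded = base64.b64encode(data.encode(encoding)).decode("ascii")
    return f"data:{mime};charset={encoding};base64,{encoded}"


print(data_uri("Nim", "text/plain"))
# data:text/plain;charset=utf-8;base64,Tmlt
```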

Description:

Parse any URL and return parsed primitive values like

scheme, username, password, hostname, port, path, query, anchor, opaque, etc.

It is faster and different than stdlib urlparse.

Arguments:

urlThe URL, string type, required.

Examples:

import faster_than_requests as requests

requests.urlparse("https://nim-lang.org")

Returns: scheme, username, password, hostname, port, path, query, anchor, opaque, etc.
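To see what these components look like, here is the stdlib equivalent using `urllib.parse.urlsplit`. It is shown only for comparison; the URL is made up, and the field names and return type of the library's `urlparse` may differ (the stdlib calls the anchor `fragment`).

```python
from urllib.parse import urlsplit

# Made-up URL that exercises every component.
parts = urlsplit("https://user:secret@nim-lang.org:8080/docs/manual.html?lang=en#intro")

print(parts.scheme)    # https
print(parts.username)  # user
print(parts.password)  # secret
print(parts.hostname)  # nim-lang.org
print(parts.port)      # 8080
print(parts.path)      # /docs/manual.html
print(parts.query)     # lang=en
print(parts.fragment)  # intro
```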

Description:

Encodes a URL according to RFC-3986, string to string.

It is faster and different than stdlib urlencode.

Arguments:

- `url` The URL, string type, required.
- `use_plus` When `use_plus` is `true`, spaces are encoded as `+` instead of `%20`.

Examples:

import faster_than_requests as requests

requests.urlencode("https://nim-lang.org", use_plus = True)

Returns: string.

Description:

Decodes a URL according to RFC-3986, string to string.

It is faster and different than stdlib unquote.

Arguments:

- `url` The URL, string type, required.
- `use_plus` When `use_plus` is `true`, `+` characters are decoded as spaces.

Examples:

import faster_than_requests as requests

requests.urldecode(r"https%3A%2F%2Fnim-lang.org", use_plus = False)

Returns: string.
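The effect of `use_plus` on encoding and decoding can be seen with the stdlib counterparts. This sketch uses `urllib.parse` only for comparison; it assumes the library follows the same convention for spaces, which is not verified here, and the URL is made up.

```python
from urllib.parse import quote, quote_plus, unquote, unquote_plus

text = "https://nim-lang.org/a b"

percent = quote(text, safe="")  # Spaces become %20.
plus = quote_plus(text)         # Spaces become +.

print(percent)  # https%3A%2F%2Fnim-lang.org%2Fa%20b
print(plus)     # https%3A%2F%2Fnim-lang.org%2Fa+b

# Decoding: unquote() leaves "+" alone, unquote_plus() turns it into a space.
print(unquote(percent))     # https://nim-lang.org/a b
print(unquote_plus(plus))   # https://nim-lang.org/a b
print(unquote(plus))        # https://nim-lang.org/a+b
```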

Description:

Encodes a query (list of key-value tuples) according to RFC-3986, returning a string.

It is faster and different than stdlib quote_plus.

Arguments:

- `query` List of Tuples, required, example `[("key", "value")]`, example `[("DNT", "1")]`.
- `omit_eq` If the value is an empty string then the `=""` is omitted, unless `omit_eq` is `false`.
- `use_plus` When `use_plus` is `true`, spaces are encoded as `+` instead of `%20`.

Examples:

import faster_than_requests as requests

requests.encodequery([("key", "value")], use_plus = True, omit_eq = True)

Returns: string.
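How `use_plus` and `omit_eq` interact is easier to see in code. The following is a pure-Python sketch of the behaviour described in the arguments above; `encode_query` is a hypothetical helper written with the stdlib, not the library's implementation, and its output is not guaranteed to match `encodequery` byte for byte.

```python
from urllib.parse import quote, quote_plus


def encode_query(query, use_plus=True, omit_eq=True):
    """Encode a list of (key, value) tuples as a URL query string."""
    escape = quote_plus if use_plus else (lambda text: quote(text, safe=""))
    parts = []
    for key, value in query:
        if value == "" and omit_eq:
            parts.append(escape(key))  # "key" instead of "key="
        else:
            parts.append(f"{escape(key)}={escape(value)}")
    return "&".join(parts)


print(encode_query([("key", "value")]))                 # key=value
print(encode_query([("a b", "c d")], use_plus=True))    # a+b=c+d
print(encode_query([("a b", "c d")], use_plus=False))   # a%20b=c%20d
print(encode_query([("flag", "")], omit_eq=True))       # flag
print(encode_query([("flag", "")], omit_eq=False))      # flag=
```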

Description:

Convert the characters &, <, >, " in a string to an HTML-safe string, output is Valid XML.

Use this if you need to display text that might contain such characters in HTML, SVG or XML.

It is faster and different than stdlib html.escape.

Arguments:

sArbitrary string, required.

Examples:

import faster_than_requests as requests

requests.encodexml("<h1>Hello World</h1>")

Returns: string.
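For comparison, this is what the stdlib `html.escape` produces for the same kind of input. It is shown only to illustrate the escaping of `&`, `<`, `>` and `"`; the output of `encodexml` is assumed to be similar but is not verified here.

```python
import html

escaped = html.escape("<h1>Hello World</h1>")
print(escaped)  # &lt;h1&gt;Hello World&lt;/h1&gt;

quoted = html.escape('a & "b"')
print(quoted)   # a &amp; &quot;b&quot;
```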

Description: Fast HTML and SVG Minifier. Not Obfuscator.

Arguments:

htmlHTML string, required.

Examples:

import faster_than_requests as requests

requests.minifyhtml("<h1>Hello</h1> <h1>World</h1>")

Returns: string.

Description: Helper for HTTP Authentication headers.

Returns 1 string kinda like "Basic base64(username:password)",

so it can be used like [ ("Authorization", gen_auth_header("username", "password")) ].

See juancarlospaco#168 (comment)

Arguments:

- `username` Username string, must not be empty string, required.
- `password` Password string, must not be empty string, required.

Returns: string.
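The standard HTTP Basic scheme encodes `username:password` as one Base64 value. Below is a stdlib sketch of that scheme, shaped as a list of tuples like the other header examples; `basic_auth_header` is a hypothetical stand-in, and it is an assumption that `gen_auth_header` returns the same string.

```python
import base64


def basic_auth_header(username, password):
    """Build the value of an HTTP Basic Authorization header."""
    credentials = f"{username}:{password}".encode("utf-8")
    return "Basic " + base64.b64encode(credentials).decode("ascii")


# Headers as a list of (key, value) tuples.
headers = [("Authorization", basic_auth_header("username", "password"))]
print(headers)
```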

Examples:

import faster_than_requests as requests

requests.debugs()

Arguments: None.

Returns: None.

Description: This module uses compile-time deterministic memory management GC (kinda like Rust, but for Python). Python at run-time makes a pause, runs a Garbage Collector, and resumes again after the pause.

`gctricks.optimizeGC` lets you temporarily skip the Python GC pauses at run-time inside a context manager block.

This is the proper way to use this module for benchmarks; it is optional but recommended.

We did not invent this; it is inspired by work from the Instagram Engineering team and battle-tested by them.

This is NOT a function, it is a context manager; it takes no arguments and returns nothing.

It calls init_client() at start and close_client() at end automatically.
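The general idea of pausing the garbage collector inside a block can be sketched with the stdlib `gc` module. This is an illustration of the technique only, not the contents of `gctricks`; `paused_gc` is a hypothetical name, and unlike the real context manager it does not call `init_client()` or `close_client()`.

```python
import gc
from contextlib import contextmanager


@contextmanager
def paused_gc():
    """Disable the cyclic garbage collector inside the block, then restore it."""
    was_enabled = gc.isenabled()
    gc.disable()
    try:
        yield
    finally:
        if was_enabled:
            gc.enable()


before = gc.isenabled()
with paused_gc():
    inside = gc.isenabled()  # False: no GC pauses while in this block.
after = gc.isenabled()       # Same state as before the block.

print(before, inside, after)
```

Reference counting still frees most objects while the collector is disabled; only the cycle-detection pauses are skipped.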

Examples:

from gctricks import optimizeGC

with optimizeGC:

    # All your HTTP code here. Chill the GC. Calls init_client() and close_client() automatically.

# GC run-time pauses enabled again.

Description: Instantiate the HTTP Client object, for deferred initialization, call it before the start of all HTTP operations.

get(), post(), put(), patch(), delete(), head() do NOT need this, because they auto-init,

this exists for performance reasons to defer the initialization and was requested by the community.

This is optional but recommended.

Read optimizeGC documentation before using.

Arguments: None.

Examples:

import faster_than_requests as requests

requests.init_client()

# All your HTTP code here.

Returns: None.

Description: Tear down the HTTP Client object, for deferred de-initialization, call it after the end of all HTTP operations.

get(), post(), put(), patch(), delete(), head() do NOT need this, because they auto-init,

this exists for performance reasons to defer the de-initialization and was requested by the community.

This is optional but recommended.

Read optimizeGC documentation before using.

Arguments: None.

Examples:

import faster_than_requests as requests

# All your HTTP code here.

requests.close_client()

Returns: None.

For more Examples check the Examples and Tests.

Instead of having a pair of functions with a lot of arguments that you should provide to make it work, we have tiny functions with very few arguments that do one thing and do it as fast as possible.

A lot of functions are oriented to Data Science, Big Data, Open Data, Web Scraping, and working with HTTP REST JSON APIs.

pip install faster_than_requests

- Make a quick test drive on Docker!.

$ ./build-docker.sh

$ ./run-docker.sh

$ ./server4benchmarks & # Inside Docker.

$ python3 benchmark.py # Inside Docker.

- None

- ✅ Linux

- ✅ Windows

- ✅ Mac

- ✅ Android

- ✅ Raspberry Pi

- ✅ BSD

More Faster Libraries...

- https://github.com/juancarlospaco/faster-than-csv#faster-than-csv

- https://github.com/juancarlospaco/faster-than-walk#faster-than-walk

- We want to make Open Source faster, better, stronger.

- Python 3.

- 64 Bit.

- Documentation assumes experience with Git, GitHub, cmd, Compiled software, PC with Administrator.

- If installation fails on Windows, just use the Source Code:

The only software needed is Git for Windows and Nim.

Reboot after install. Administrator required for install. Everything must be 64Bit.

If that fails too, don't waste time and go directly for Docker for Windows.

For info about how to install Git for Windows, read Git for Windows Documentation.

For info about how to install Nim, read Nim Documentation.

For info about how to install Docker for Windows, read Docker for Windows Documentation.

GitHub Actions Build everything from zero on each push, use it as guidance too.

- Git Clone and Compile on Windows 10 on just 2 commands!.

- Alternatively you can try Docker for Windows.

- Alternatively you can try WSL for Windows.

- The file extension must be `.pyd`, NOT `.dll`. Compile with `-d:ssl` to use HTTPS.

nimble install nimpy

nim c -d:ssl -d:danger --app:lib --out:faster_than_requests.pyd faster_than_requests.nim

- Become a Sponsor and help improve this library with the features you want!, we need Sponsors!.

Bitcoin BTC

BEP20 Binance Smart Chain Network BSC

0xb78c4cf63274bb22f83481986157d234105ac17e

BTC Bitcoin Network

1Pnf45MgGgY32X4KDNJbutnpx96E4FxqVi

Ethereum ETH Dai DAI Uniswap UNI Axie Infinity AXS Smooth Love Potion SLP

BEP20 Binance Smart Chain Network BSC

0xb78c4cf63274bb22f83481986157d234105ac17e

ERC20 Ethereum Network

0xb78c4cf63274bb22f83481986157d234105ac17e

Tether USDT

BEP20 Binance Smart Chain Network BSC

0xb78c4cf63274bb22f83481986157d234105ac17e

ERC20 Ethereum Network

0xb78c4cf63274bb22f83481986157d234105ac17e

TRC20 Tron Network

TWGft53WgWvH2mnqR8ZUXq1GD8M4gZ4Yfu

Solana SOL

BEP20 Binance Smart Chain Network BSC

0xb78c4cf63274bb22f83481986157d234105ac17e

SOL Solana Network

FKaPSd8kTUpH7Q76d77toy1jjPGpZSxR4xbhQHyCMSGq

Cardano ADA

BEP20 Binance Smart Chain Network BSC

0xb78c4cf63274bb22f83481986157d234105ac17e

ADA Cardano Network

DdzFFzCqrht9Y1r4Yx7ouqG9yJNWeXFt69xavLdaeXdu4cQi2yXgNWagzh52o9k9YRh3ussHnBnDrg7v7W2hSXWXfBhbo2ooUKRFMieM

Sandbox SAND Decentraland MANA

ERC20 Ethereum Network

0xb78c4cf63274bb22f83481986157d234105ac17e

Algorand ALGO

ALGO Algorand Network

WM54DHVZQIQDVTHMPOH6FEZ4U2AU3OBPGAFTHSCYWMFE7ETKCUUOYAW24Q

- Whats the idea, inspiration, reason, etc ?.

- This works with SSL ?.

Yes.

- This works without SSL ?.

Yes.

- This requires Cython ?.

No.

- This runs on PyPy ?.

No.

- This runs on Python2 ?.

I dunno. (Not supported)

- This runs on 32Bit ?.

No.

- This runs with Clang ?.

No.

- Where to get help ?.

https://github.com/juancarlospaco/faster-than-requests/issues

- How to set the URL ?.

url="http://example.com" (1st argument always).

- How to set the HTTP Body ?.

body="my body"

- How to set an HTTP Header key=value ?.

- How can be faster than PyCurl ?.

I dunno.

- Why use Tuple instead of Dict for HTTP Headers ?.

For speed performance reasons, dict is slower, bigger, heavier and mutable compared to tuple.

- Why needs 64Bit ?.

Maybe it works on 32Bit, but is not supported, integer sizes are too small, and performance can be worse.

- Why needs Python 3 ?.

Maybe it works on Python 2, but is not supported, and performance can be worse, we suggest to migrate to Python3.

- Can I wrap the functions on a `try: except:` block ?.

Functions do not have internal try: except: blocks,

so you can wrap them inside try: except: blocks if you need very resilient code.
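A minimal sketch of such a wrapper follows. It assumes nothing about which exceptions the library raises; `fetch_or_default` and `failing_fetch` are hypothetical helpers, and the stand-in function replaces a real HTTP call so the example runs offline.

```python
def fetch_or_default(fetch, url, default=None):
    """Call fetch(url) and return `default` if it raises."""
    try:
        return fetch(url)
    except Exception as error:  # Narrow this to the errors you expect.
        print(f"Request to {url} failed: {error}")
        return default


def failing_fetch(url):
    # Stand-in for a real HTTP call that fails.
    raise OSError("Name or service not known")


result = fetch_or_default(failing_fetch, "http://NONEXISTENT", default={})
print(result)  # {}
```

In real code you would pass one of the library's functions as `fetch` instead of the stand-in.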

- PIP fails to install or fails to build the wheel ?.

Add at the end of the PIP install command:

--isolated --disable-pip-version-check --no-cache-dir --no-binary :all:

Not my Bug.

- How to Build the project ?.

build.sh or build.nims

- How to Package the project ?.

package.sh or package.nims

- This requires Nimble ?.

No.

- Whats the unit of measurement for speed ?.

Unmodified raw output of Python timeit module.

Please send Pull Request to Python to improve the output of timeit.
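For context, `timeit.timeit` returns the total time in seconds, as a float, for running a statement `number` times. The sketch below times a trivial stand-in statement rather than HTTP requests; the statement and the repeat count are chosen only for illustration and are not the benchmark from this repo.

```python
import timeit

RUNS = 10000  # Same order of magnitude as the benchmark's request count.

# Total wall-clock seconds (a float) to run the statement RUNS times.
elapsed = timeit.timeit("sum(range(100))", number=RUNS)

print(elapsed)         # Total seconds for all runs.
print(elapsed / RUNS)  # Average seconds per run.
```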

- The LoC is a lie, not counting the lines of code of the Compiler ?.

Projects that use Cython won't count the whole Cython on the LoC, so we won't either.

⭐ @juancarlospaco

⭐ @nikitavoloboev

⭐ @5u4

⭐ @CKristensen

⭐ @Lips7

⭐ @zeandrade

⭐ @SoloDevOG

⭐ @AM-I-Human

⭐ @pauldevos

⭐ @divanovGH

⭐ @ali-sajjad-rizavi

⭐ @George2901

⭐ @jeaps17

⭐ @TeeWallz